Lesson 1: The Model Landscape in Depth

Reading time: 10 minutes

Introduction

If you're making AI decisions for a business, "which model should we use?" is one of the first questions you'll face — and one of the most consequential. The wrong choice doesn't just waste money; it shapes what your team can build, how fast they can move, and how locked in you become.

This lesson maps the current landscape in detail. We'll cover the major model families, their tiers, their genuine strengths, and where marketing claims diverge from reality.

The Closed-Source Leaders

Anthropic's Claude Family

Claude comes in three tiers, each designed for different use cases:

Claude Haiku — The lightweight option. Fast, cheap, good enough for straightforward tasks like classification, simple summarisation, and routing. Think of it as your workhorse for high-volume, lower-complexity work. Response times are typically under a second.

Claude Sonnet — The mid-tier that many businesses end up using most. Strong at writing, analysis, coding, and following complex instructions. Balances capability with cost effectively. For most business applications, Sonnet handles the job well.

Claude Opus — The premium tier. Stronger reasoning, better at nuanced tasks, more reliable on complex multi-step problems. The cost difference is significant, so the question is always whether your task actually needs Opus-level capability. Often, Sonnet is sufficient.

Where Claude genuinely excels: Long-form content, careful analysis, following detailed instructions, coding, and tasks where you need the model to be cautious rather than confidently wrong. Claude tends to acknowledge uncertainty more readily than competitors. Its context window is generous, up to 200K tokens (with 1M in beta for Opus and Sonnet), making it strong for working with long documents.

At the time of writing (early 2026), the current generation and its pricing per million tokens (MTok) is:

- Claude Opus 4.6: $5 input, $25 output

- Claude Sonnet 4.6: $3 input, $15 output

- Claude Haiku 4.5: $1 input, $5 output
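To make the price gap between tiers concrete, here is a rough back-of-envelope sketch using the per-million-token prices quoted above. The workload figures (requests per day and tokens per request) are illustrative assumptions rather than measurements, and the function name is hypothetical.

```python
# Prices in USD per million tokens (input, output), as quoted in this lesson.
PRICES = {
    "Claude Opus 4.6": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Haiku 4.5": (1.00, 5.00),
}


def monthly_cost(model, requests_per_day, input_tokens, output_tokens, days=30):
    """Estimate monthly spend in USD for a fixed per-request token budget."""
    input_price, output_price = PRICES[model]
    per_request = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    return per_request * requests_per_day * days


# Assumed workload: 10,000 requests a day, 1,500 input and 300 output tokens each.
for model in PRICES:
    cost = monthly_cost(model, 10_000, 1_500, 300)
    print(f"{model}: ${cost:,.2f} per month")
```

Because the quoted input and output prices scale by the same factor between tiers, Opus works out at five times the Haiku cost and Sonnet at three times, whatever the token mix. Swap in your own traffic figures to see what that multiple means in absolute terms.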

OpenAI's GPT Family

OpenAI offers a broader product range, which can be both an advantage and a source of confusion:

GPT-4o — The flagship general-purpose model. Multimodal (handles text, images, and audio). Fast, capable, and the default for most ChatGPT interactions. Good all-rounder for business applications.

o1 and o3: The "reasoning" models. These take longer to respond because they work through problems step by step internally before answering. Significantly better at maths, logic, science, and complex analytical tasks. The tradeoff is speed and cost: these models are slower and more expensive per query.

GPT-4o mini — The budget option. Surprisingly capable for its price point. Good for tasks where you need volume and speed matters more than peak performance.

Where OpenAI genuinely excels: Breadth of ecosystem. ChatGPT's custom GPTs (which replaced the earlier plugins), Code Interpreter, image generation (DALL-E), and voice capabilities make it the most feature-complete consumer platform. The API is mature and well-documented. For businesses already in the Microsoft ecosystem, the Azure OpenAI integration is a significant advantage.

Google's Gemini Family

Gemini Flash — Google's speed-optimised model. Extremely fast, very affordable, solid for straightforward tasks. One of the best options for high-volume processing where cost per query matters.

Gemini Pro — The capable middle tier. Competitive with GPT-4o and Claude Sonnet on most benchmarks. Strong multimodal capabilities, particularly with image and video understanding.

Gemini Ultra — Google's premium offering. Positioned against GPT-4o and Claude Opus. Strong on reasoning and multimodal tasks.

Where Gemini genuinely excels: Google ecosystem integration (Workspace, Search, Cloud). Multimodal capabilities, particularly video understanding. Gemini Flash's price-to-performance ratio is impressive for high-volume use cases. The very large context window (up to 1 million tokens on some versions) is among the largest generally available, which suits processing very large documents.

The Open-Source Contenders

Open-source models have become genuinely competitive. For businesses, they offer something the closed-source providers can't: complete control.

Meta's Llama

Meta's Llama models (Llama 3 and its successor, Llama 4) are arguably the most significant open-source AI models available. They're free to use (with some licensing conditions for very large-scale commercial use), and they're competitive with mid-tier closed-source models.

Why it matters for business: You can run Llama on your own infrastructure. Your data never leaves your servers. You can fine-tune it for your specific use case. For businesses in regulated industries or with strict data sovereignty requirements, this can be the deciding factor.

Mistral

A French AI company producing high-quality open models. Their models tend to be efficient — strong performance relative to their size. Mistral is particularly popular in Europe, partly due to data sovereignty considerations.

Why it matters for business: Mistral offers both open-source and commercial API options. Their models are often smaller and faster than equivalents, making them cost-effective to deploy. The European origin can matter for businesses with EU data residency requirements.

DeepSeek

A Chinese AI lab that has produced remarkably capable models, particularly for coding and reasoning tasks. DeepSeek's models have surprised the industry with their performance relative to their training cost.

Why it matters for business: DeepSeek demonstrates that the model landscape isn't limited to US companies. Their models are genuinely competitive. However, for some businesses, the Chinese origin raises data handling concerns that need to be evaluated against your specific risk tolerance and regulatory requirements.

Other Noteworthy Models

Several other models released during 2024 and 2025 are also worth knowing about:

- Qwen 2.5 Max (Alibaba): Competitive with GPT-4o on many benchmarks, particularly strong in multilingual tasks including Te Reo Māori.

- DeepSeek-V3: Significant improvement over V2, with better reasoning and coding capabilities at lower inference cost.

- Stable LM 2 (Stability AI): Focuses on efficiency and safety, with strong performance in instruction following.

These models expand the options for businesses seeking specific capabilities or regional optimisations.

How to Read This Landscape

A few honest observations:

The gaps are narrowing. Two years ago, there was a clear leader. Today, the top models from each provider are remarkably close in general capability. The differences are real but often subtle — and they shift with every release.

Tiers matter more than brands. A premium model from any major provider will outperform a budget model from any other. The most important choice is often which tier you need, not which company.

The open-source floor is rising. Tasks that required GPT-4 a year ago can often be handled by open-source models today. This trend will continue.

Multimodal is becoming standard. The ability to process images, audio, and video alongside text is no longer a differentiator — it's a baseline expectation.
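The "tiers matter more than brands" observation above can be sketched as a two-step decision: pick a tier from the task's traits, then shortlist the same-tier model from each family. The selection rule and function names below are hypothetical illustrations, not a recommended policy. The tier names come from the Claude and Gemini descriptions earlier in this lesson.

```python
from enum import IntEnum


class Tier(IntEnum):
    BUDGET = 1
    MID = 2
    PREMIUM = 3


# Tier names as this lesson describes them for two of the families.
FAMILIES = {
    "Claude": {Tier.BUDGET: "Haiku", Tier.MID: "Sonnet", Tier.PREMIUM: "Opus"},
    "Gemini": {Tier.BUDGET: "Flash", Tier.MID: "Pro", Tier.PREMIUM: "Ultra"},
}


def pick_tier(complex_multistep: bool, high_volume: bool) -> Tier:
    """Hypothetical rule of thumb: choose the cheapest tier the task allows."""
    if complex_multistep:
        return Tier.PREMIUM
    if high_volume:
        return Tier.BUDGET
    return Tier.MID


def candidates(tier: Tier) -> list[str]:
    """List the same-tier model from each family, to compare on your own data."""
    return [f"{family} {names[tier]}" for family, names in FAMILIES.items()]


# Assumed task: simple but high-volume, such as ticket classification.
tier = pick_tier(complex_multistep=False, high_volume=True)
print(tier.name, candidates(tier))
```

The point of the structure is the order of the decisions: the tier is fixed first, and the brand comparison happens only among models of that tier.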

Key Takeaways

- The three closed-source leaders (Anthropic, OpenAI, Google) each offer tiered models from budget to premium. Know the tiers, not just the brands.

- Open-source models (Llama, Mistral, DeepSeek) are genuinely competitive and, when self-hosted, offer complete data control, which is critical for regulated industries.

- Capability gaps between providers are narrowing. Choosing a tier matters more than choosing a brand for most use cases.

- Each provider has genuine strengths: Claude for careful analysis and writing, OpenAI for ecosystem breadth, Gemini for Google integration and massive context windows, open-source for control and customisation.

Exercise: Map Your Requirements to the Landscape

Create a simple table with three columns: Business Need, Priority (high/medium/low), and Best Fit.

List your top five AI use cases (e.g., customer support summarisation, code review, report generation, data analysis, content creation). For each, identify what matters most (speed, accuracy, cost, data privacy, integration) and map it to the model family that best fits.

Don't choose one model for everything. The goal is to see where different models serve different needs.
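If you prefer to keep the exercise table in a script rather than a spreadsheet, one possible sketch follows. The suggested fits are starting points drawn from this lesson's takeaways, and the class, function, and example entries are all hypothetical. Replace them with your own use cases.

```python
from dataclasses import dataclass

# Starting suggestions based on this lesson's takeaways; adjust to your context.
SUGGESTED_FIT = {
    "speed": "Budget tier (e.g. Claude Haiku, GPT-4o mini, Gemini Flash)",
    "cost": "Budget tier (e.g. Claude Haiku, GPT-4o mini, Gemini Flash)",
    "accuracy": "Premium tier (e.g. Claude Opus)",
    "data privacy": "Self-hosted open-source (e.g. Llama, Mistral)",
    "integration": "Provider matching your existing stack (e.g. Azure OpenAI, Gemini)",
}

PRIORITY_ORDER = {"high": 0, "medium": 1, "low": 2}


@dataclass
class UseCase:
    business_need: str
    priority: str      # "high", "medium", or "low"
    key_concern: str   # one of the SUGGESTED_FIT keys

    @property
    def best_fit(self) -> str:
        return SUGGESTED_FIT[self.key_concern]


def build_table(use_cases):
    """Return rows of (Business Need, Priority, Best Fit), highest priority first."""
    ordered = sorted(use_cases, key=lambda u: PRIORITY_ORDER[u.priority])
    return [(u.business_need, u.priority, u.best_fit) for u in ordered]


# Hypothetical entries for illustration only.
use_cases = [
    UseCase("Report generation", "medium", "accuracy"),
    UseCase("Customer support summarisation", "high", "cost"),
    UseCase("Analysis of confidential records", "high", "data privacy"),
]

for need, priority, fit in build_table(use_cases):
    print(f"{need:<36} {priority:<8} {fit}")
```

A completed table will usually show more than one "Best Fit" value, which is the outcome the exercise is meant to surface.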

Quiz

1. A business needs to process 50,000 customer support tickets daily for sentiment classification. Which model characteristic should they prioritise?

a) The highest reasoning capability available

b) Speed and cost per query, since the task is straightforward and high-volume

c) The largest context window possible

d) Multimodal capabilities for processing images

Answer: b) Sentiment classification is a well-defined, relatively simple task. At 50,000 queries per day, speed and cost dominate the decision. Models like Gemini Flash, GPT-4o mini, or Claude Haiku are designed for exactly this type of high-volume, lower-complexity work.

2. What is the primary advantage of open-source models like Llama for businesses in regulated industries?

a) They always outperform closed-source models on benchmarks

b) They are free, eliminating all AI costs

c) They can be run on your own infrastructure, keeping data under your control

d) They come with enterprise support contracts included

Answer: c) The key advantage is data sovereignty. Running models on your own infrastructure means sensitive data never leaves your environment. This can be essential for compliance with regulations like GDPR or industry-specific data handling requirements. Open-source models still have hosting and operational costs.

3. Why is choosing a model tier often more important than choosing a model brand?

a) All brands are identical, so tier is the only real variable

b) Premium models from any major provider tend to outperform budget models from any other, and capability gaps between same-tier models are relatively small

c) Tier determines the context window size, which is the most important factor

d) Lower tiers are always better because they're faster

Answer: b) The performance differences between providers at the same tier are often subtle and shift with each release. The difference between tiers (e.g., budget vs premium) is consistently larger. Matching the right tier to your task complexity matters more than brand loyalty.

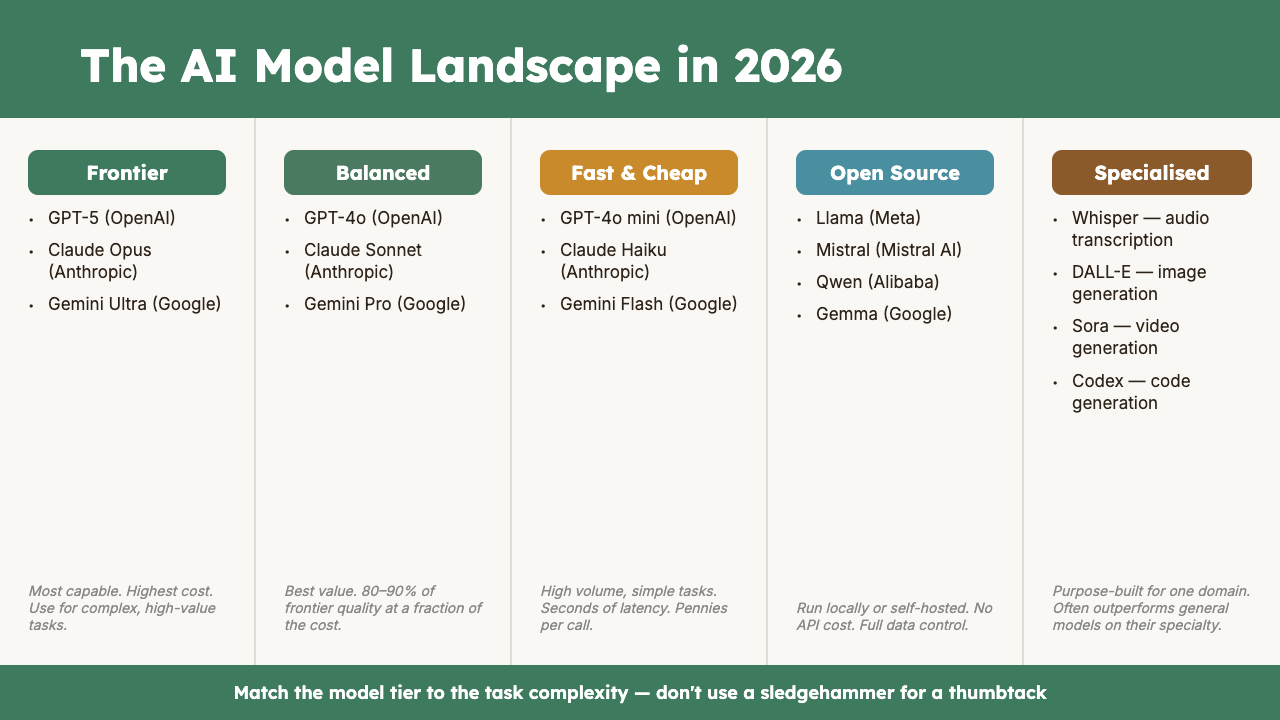

Visual overview