Lesson 1: What AI Governance Actually Means

Course: AI Governance & Policy Frameworks
Pathway: Governance (Paid)
Level: Advanced
Estimated Reading Time: 8 minutes

Beyond Buzzwords to Practical Frameworks

"AI governance" has become one of those phrases that gets dropped into board papers, strategy documents, and conference agendas without anyone pausing to define what it actually means. If you've sat in a meeting where someone said "we need AI governance" and everyone nodded while privately wondering what that looks like in practice — you're not alone.

Let's fix that.

Governance Is Not a Document

The most common mistake organisations make is treating AI governance as a policy document. Someone drafts a ten-page PDF, it gets signed off at the executive level, filed on SharePoint, and everyone moves on. That's not governance. That's paperwork.

AI governance is a system of decision-making structures, processes, and accountability mechanisms that guide how an organisation develops, deploys, and monitors artificial intelligence. It's less like a policy and more like a nervous system — something that touches every part of the organisation where AI operates.

Think of it this way: corporate governance doesn't mean you have a Companies Act sitting on a shelf. It means you have a board, audit committees, reporting structures, and clear lines of accountability. AI governance works the same way.

The Three Pillars

Practical AI governance rests on three pillars. Every credible framework — whether from NIST, the EU, or ISO — maps back to these:

1. Oversight: Who decides what?

Someone needs to be responsible for approving AI use cases, setting boundaries on acceptable applications, and escalating concerns. In most organisations, this means establishing an AI governance committee or embedding AI oversight into existing risk and technology governance structures.

This isn't about creating bureaucracy. It's about being deliberate. When a team wants to deploy a large language model to handle customer complaints, someone with authority needs to ask: What data does it use? What happens when it gets it wrong? Who is accountable?

2. Process: How do decisions get made?

Governance without process is just good intentions. You need defined workflows for:

- AI risk assessment — before deployment, not after

- Approval gates — who signs off on what level of AI risk

- Monitoring and review — how you track AI systems once they're live

- Incident response — what happens when an AI system causes harm
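The first two workflows above, risk assessment and approval gates, can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the tier names, the three screening questions, and the approver roles are all hypothetical, and a real assessment would weigh many more factors.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    # Hypothetical tiers; each organisation would define its own scale.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical approval gates: which role signs off at each tier.
APPROVERS = {
    RiskTier.LOW: "team lead",
    RiskTier.MEDIUM: "head of risk",
    RiskTier.HIGH: "AI governance committee",
}


@dataclass
class UseCase:
    name: str
    uses_personal_data: bool
    affects_individuals: bool   # e.g. decisions about customers or staff
    human_reviews_output: bool  # is a person checking before action is taken?


def assess_risk(use_case: UseCase) -> RiskTier:
    """Toy pre-deployment risk assessment based on three yes/no questions."""
    if use_case.affects_individuals and not use_case.human_reviews_output:
        return RiskTier.HIGH
    if use_case.uses_personal_data or use_case.affects_individuals:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def required_approver(use_case: UseCase) -> str:
    """Approval gate: look up who must sign off for the assessed tier."""
    return APPROVERS[assess_risk(use_case)]


complaints_bot = UseCase(
    name="LLM handling customer complaints",
    uses_personal_data=True,
    affects_individuals=True,
    human_reviews_output=False,
)
print(required_approver(complaints_bot))  # AI governance committee
```

The point of the sketch is that the gate is decided by the assessment, not negotiated case by case: once the tiers and approvers are agreed, any team can see in advance who needs to sign off.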

The NIST AI Risk Management Framework (AI RMF) is arguably the most practical reference here. It breaks governance into four functions: Govern, Map, Measure, and Manage. The "Govern" function is explicitly about establishing the structures, roles, and policies that make everything else possible.

3. Accountability: Who answers when things go wrong?

This is where most governance frameworks get tested. It's easy to have oversight and process when everything works. The real question is: when an AI system makes a biased hiring decision, exposes private data, or produces a dangerous recommendation — who is responsible?

Accountability in AI governance means:

- Clear ownership of each AI system (not "the IT team" — a named role)

- Documented decision-making trails (why was this model chosen? Who approved the training data?)

- Defined consequences and remediation paths
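One way to make these three requirements concrete is a simple register of AI systems. The sketch below is illustrative only: the field names, the list of "vague" owner labels, and the example record are assumptions, not part of any framework named in this lesson.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Decision:
    made_on: date
    question: str      # e.g. "Why was this model chosen?"
    outcome: str
    approved_by: str


@dataclass
class AISystemRecord:
    name: str
    owner_role: str          # a named role, not a department
    remediation_path: str    # what happens when the system causes harm
    decisions: list[Decision] = field(default_factory=list)

    def log_decision(self, decision: Decision) -> None:
        self.decisions.append(decision)


# Hypothetical labels that do not count as a named owner.
VAGUE_OWNERS = {"", "it", "it team", "the it team"}


def accountability_gaps(record: AISystemRecord) -> list[str]:
    """Return which of the three accountability requirements are unmet."""
    gaps = []
    if record.owner_role.strip().lower() in VAGUE_OWNERS:
        gaps.append("no named owner role")
    if not record.decisions:
        gaps.append("no documented decisions")
    if not record.remediation_path.strip():
        gaps.append("no remediation path")
    return gaps


# Hypothetical example record.
record = AISystemRecord(
    name="CV screening model",
    owner_role="Head of Talent Acquisition",
    remediation_path="Pause screening and manually re-review affected applications",
)
print(accountability_gaps(record))  # ['no documented decisions']

record.log_decision(
    Decision(
        made_on=date(2025, 3, 1),
        question="Who approved the training data?",
        outcome="Approved with personal identifiers removed",
        approved_by="AI governance committee",
    )
)
print(accountability_gaps(record))  # []
```

A spreadsheet would serve the same purpose. What matters is that every live system has an entry and that the gaps are visible.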

Real Frameworks You Should Know

If you're building AI governance for your organisation, you don't need to start from scratch. Several credible frameworks exist:

NIST AI Risk Management Framework (AI RMF 1.0): Developed by the US National Institute of Standards and Technology, this is the most comprehensive, practical governance framework available. It's voluntary, flexible, and designed for organisations of any size. Its four core functions (Govern, Map, Measure, Manage) give you a clear structure to work with.

ISO/IEC 42001:2023: The first international standard for AI management systems. If your organisation already works with ISO standards (like ISO 27001 for information security), this will feel familiar. It provides a certifiable management system for responsible AI development and use.

EU AI Act: The world's first comprehensive AI legislation. While it's European law, it matters globally because any organisation offering AI products or services in the EU must comply. It introduces a risk-based classification system (unacceptable, high, limited, minimal risk) that's influencing governance thinking worldwide.

New Zealand Algorithm Charter: Aotearoa's own contribution, a voluntary commitment by government agencies to use algorithms in a transparent, accountable, and fair way. It's not legislation, and it only applies to government, but its principles are sound reference points for any New Zealand organisation.

Why This Matters Now

Four forces are converging that make AI governance urgent rather than aspirational:

- Regulatory momentum. The EU AI Act entered into force on August 1, 2024, and most of its provisions apply from August 2, 2026. Organisations offering AI products or services in the EU must already comply with rules covering prohibited AI practices, general-purpose AI model requirements, and transparency obligations, and they face significant penalties for non-compliance. Australia consulted on mandatory guardrails for AI in high-risk settings in a proposals paper released in September 2024. New Zealand published its national AI Strategy in July 2025, setting out the government's vision for AI adoption, innovation, and responsible use.

- International case studies show divergent approaches. China's embrace of autonomous AI agents like OpenClaw has created long installation queues in cities like Shenzhen, highlighting both intense demand and growing regulatory anxiety about AI autonomy. Contrast this with New Zealand's more cautious approach through the Algorithm Charter. Different cultures, same challenge: how to govern intelligence that can make decisions on its own.

- Liability exposure. As AI systems make more consequential decisions (lending, hiring, clinical recommendations), the legal and reputational risk of ungoverned AI grows with them.

- Operational scale. Most organisations have moved past the "experimenting with ChatGPT" phase. When you have dozens of AI tools embedded across departments, governance becomes an operational necessity, not a strategic nice-to-have.

Governance Is Not Anti-Innovation

One more thing worth saying clearly: good governance doesn't slow innovation down. It speeds it up — safely.

Without governance, every AI project becomes a one-off negotiation. Teams waste time seeking ad hoc approvals, legal gets involved too late, and promising initiatives stall because nobody knows how to assess the risk.

With governance, teams know the rules. They understand what data they can use, what risk thresholds apply, and who to talk to. Decisions happen faster because the framework already exists.

The organisations that move fastest with AI won't be the ones with no rules. They'll be the ones with clear, proportionate rules that everyone understands.

Key Takeaways

- AI governance is a system of oversight, process, and accountability — not a single document or policy.

- The NIST AI RMF, ISO/IEC 42001, EU AI Act, and NZ Algorithm Charter are the key frameworks to understand.

- Accountability means named roles with clear ownership of AI systems and their outcomes.

- Good governance accelerates innovation by providing clarity, not bureaucracy.

- Regulatory, legal, and operational pressures are making AI governance urgent for every organisation.

Practical Exercise

Governance Audit Snapshot

Take 30 minutes and answer these five questions about your organisation:

- Is there a named individual or committee responsible for AI oversight?

- Is there a defined process for approving new AI use cases before deployment?

- Can you identify who is accountable for each AI system currently in use?

- Is there a documented process for what happens when an AI system produces a harmful or incorrect outcome?

- Has your organisation referenced any external AI governance framework (NIST, ISO, EU AI Act, or equivalent)?

Score yourself: each "yes" = 1 point. If you scored 3 or below, your organisation has governance gaps that need attention. Bring this snapshot to your next leadership meeting as a conversation starter.
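If you want to run the snapshot across several teams or business units, the scoring rule is simple enough to automate. A minimal sketch, assuming answers are recorded as True/False in the order the questions appear above (the function name and the shortened question labels are illustrative):

```python
# Shortened labels for the five snapshot questions, in order.
QUESTIONS = [
    "Named individual or committee for AI oversight",
    "Defined approval process before deployment",
    "Accountable owner identified for each AI system",
    "Documented process for harmful or incorrect outcomes",
    "External governance framework referenced",
]


def score_snapshot(answers: list[bool]) -> tuple[int, bool]:
    """Return (score, has_gaps): one point per 'yes', gaps if score <= 3."""
    if len(answers) != len(QUESTIONS):
        raise ValueError(f"expected {len(QUESTIONS)} answers, got {len(answers)}")
    score = sum(bool(a) for a in answers)
    return score, score <= 3


# Hypothetical answers from one team.
answers = [True, False, True, False, True]
score, has_gaps = score_snapshot(answers)
print(f"Score: {score}/5, governance gaps: {has_gaps}")

# List the specific questions answered "no" as discussion points.
for question, answer in zip(QUESTIONS, answers):
    if not answer:
        print(f"Gap: {question}")
```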

Quiz

Question 1: What are the three pillars of practical AI governance described in this lesson?

A) Policy, compliance, and audit

B) Oversight, process, and accountability

C) Risk, regulation, and reporting

D) Strategy, implementation, and review

Correct Answer: B — Oversight (who decides), process (how decisions get made), and accountability (who answers when things go wrong) form the foundation of practical AI governance.

Question 2: What are the four core functions of the NIST AI Risk Management Framework?

A) Plan, Do, Check, Act

B) Identify, Protect, Detect, Respond

C) Govern, Map, Measure, Manage

D) Assess, Mitigate, Monitor, Report

Correct Answer: C — The NIST AI RMF is structured around Govern, Map, Measure, and Manage, with Govern providing the foundational structures for the other three.

Question 3: Why does the lesson argue that AI governance accelerates rather than hinders innovation?

A) Because governance removes the need for legal review

B) Because governance provides clear rules so teams don't waste time on ad hoc approvals

C) Because governance eliminates all AI risk

D) Because governance is only applied to low-risk AI systems

Correct Answer: B — When governance frameworks are clear and proportionate, teams understand the rules, risk thresholds, and approval pathways, which means decisions happen faster and projects don't stall.

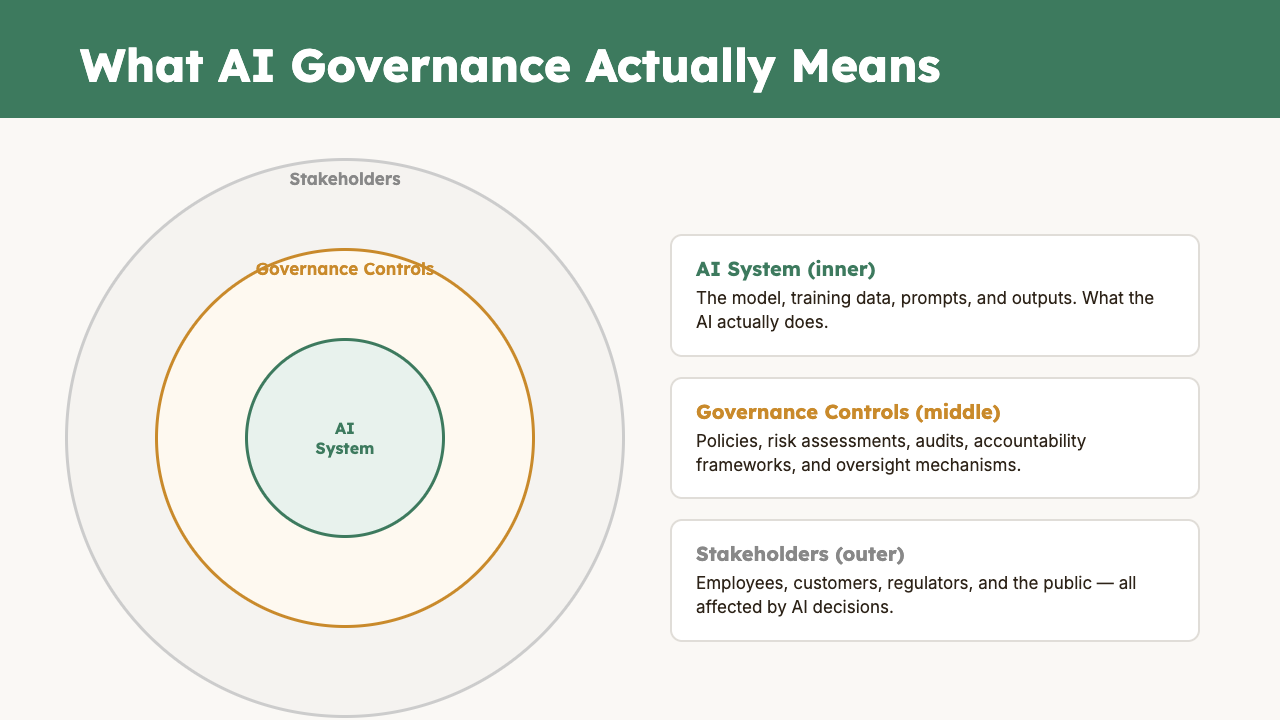

Visual overview