Voice Cloning and Deepfake Scams — When You Can't Trust Your Ears

Imagine getting a phone call from your son. His voice. His way of speaking. He sounds panicked. He says he has been in an accident, or arrested, or is stuck in a hospital overseas. He needs money urgently — and he begs you not to tell the rest of the family yet.

You would probably act immediately. Any loving parent would.

This is exactly what scammers are counting on. And with AI voice cloning, they no longer need to settle for a rough imitation of your son's voice: they can sound exactly like him.

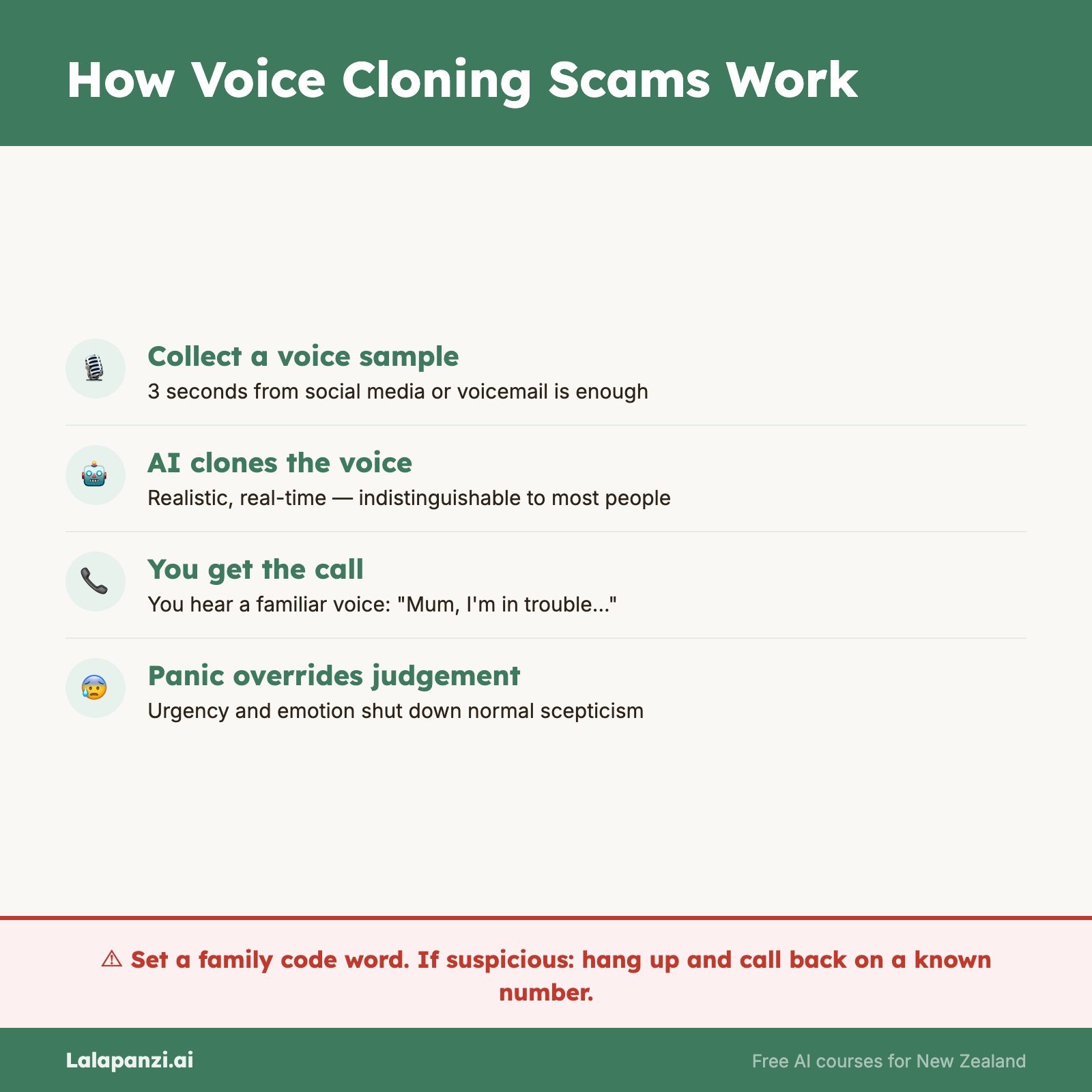

How voice cloning works

AI voice cloning tools can replicate a person's voice from just a few seconds of audio. That audio might come from a TikTok video, a YouTube clip, a voicemail, a podcast, or a social media post. The person does not need to have recorded anything long or formal — a single short video is often enough.

Once the AI has a voice sample, it can generate new speech in that voice saying anything the scammer types. The result is a phone call that sounds convincingly like the real person to most listeners, including family members.

This technology is not experimental. It is available right now, for little or no cost, to anyone who wants to use it.

The grandparent and family emergency scam

One of the most common uses of voice cloning targets older adults. A grandparent or parent receives a call from someone who sounds exactly like their grandchild or child. The "grandchild" says they are in trouble (arrested, hospitalised, or stranded) and needs money right away. They plead for secrecy: please do not tell Mum and Dad yet.

Then a second caller gets on the line, posing as a lawyer, police officer, or hospital administrator. They explain how the money needs to be sent — usually by gift card, wire transfer, or cryptocurrency.

This scam works because it exploits the strongest instinct most people have: protecting the people they love.

Deepfake video calls and fake endorsements

Voice cloning is not the only tool. Scammers also use deepfake video — AI-generated footage that shows a real person's face and voice saying things they never said.

In New Zealand, IRD issued an official warning in March 2026 after scammers used an AI-generated image of the Commissioner of Inland Revenue, Peter Mersi, in social media advertisements promoting a fake cryptocurrency tax webinar. The ads were removed by Meta, but reappeared the following day, slightly altered. This is a documented, live NZ case.

Deepfake video calls are also used in CEO fraud — scammers impersonate a company executive on a video call and instruct a staff member to make a large payment. The technology is not yet perfect, but it is convincing enough to fool people who are not looking for it.

Red flags

If you receive a call from a family member claiming to be in crisis, watch for these warning signs:

- The caller urgently asks you not to tell other family members

- They ask for money right away via gift cards, wire transfer, or cryptocurrency

- A second caller — a "lawyer" or "official" — gets on the line to explain the payment

- The call comes from an unknown or overseas number

- Something about the voice feels slightly flat or unusual, even if it sounds familiar

What to do

- Set up a family code word. Agree on a word or phrase that only real family members know. If someone calls claiming to be your grandchild, ask for the code word. A scammer cannot guess it.

- Hang up and call back. End the call and ring the person directly on a number you already have saved. If they are fine, the call was a scam. If they really are in trouble, you will reach them — or their family.

- Never pay by gift card or cryptocurrency. No legitimate institution, whether police, a hospital, or a court, will ask you to pay this way. A request like this is always a scam.

- Take your time. A real emergency will still be a real emergency in ten minutes. Scammers rely on panic. Slowing down costs you nothing.

- Talk to someone. Before sending any money, tell another family member what is happening.

If you have been targeted, contact Netsafe on 0508 638 723. They will not judge you — they will help.
