Module 7, Lesson 2: The Privacy Act 2020 and AI — What You Need to Know

Estimated reading time: 8 minutes

What You'll Learn

New Zealand's Privacy Act 2020 is one of the most relevant pieces of legislation for anyone using AI in a professional context. It governs how personal information is collected, stored, used, and disclosed — and AI tools interact with all of these. This lesson breaks down what the Act says, how it applies to AI use, and what you need to do to stay on the right side of it.

The Privacy Act 2020: A Quick Overview

The Privacy Act 2020 replaced the Privacy Act 1993 and came into force on 1 December 2020. It applies to every organisation and person in New Zealand that handles personal information, regardless of size. There's no small business exemption — a sole trader has the same obligations as a government department.

The Act is built around 13 Information Privacy Principles (IPPs) that cover the full lifecycle of personal information. You don't need to memorise all 13, but you need to understand the ones that directly affect AI use.

The Information Privacy Principles That Matter Most for AI

IPP 1: Purpose of Collection

Personal information should only be collected for a lawful purpose connected to the organisation's function, and the collection should be necessary for that purpose.

AI implication: Collect only the personal information you need for a defined purpose; gathering extra details simply because an AI tool might find them useful is hard to justify. Information you already hold about customers, clients, or employees was collected for a specific purpose. Using it for a different one, such as feeding it into an AI tool for analysis, marketing insights, or training, is restricted by IPP 10 (limits on use) unless the new use is directly related to the original purpose or another exception applies.

IPP 2: Source of Information

Where possible, personal information should be collected directly from the individual concerned.

AI implication: AI tools can scrape, aggregate, and infer personal information from multiple sources. If you're using AI to build profiles or gather information about individuals from sources other than the individuals themselves, you may be running afoul of this principle.

IPP 3: Collection of Information from Subject

When collecting personal information directly from someone, you must take reasonable steps to make sure they know, among other things, who is collecting it, why, who will receive it, and the consequences of not providing it.

AI implication: If you're going to feed someone's personal information into an AI tool, they should know about it. This doesn't necessarily mean you need explicit consent for every interaction, but people should be aware that AI tools may process their information and understand what that means.

IPP 5: Storage and Security

Personal information must be protected against loss, unauthorised access, use, modification, or disclosure.

AI implication: When you paste personal information into an AI tool, you're sharing it with a third-party service. Is that service adequately secure? Where is the data stored? Who can access it? You need to be able to answer these questions. Using a free-tier AI tool with servers in the US to process New Zealand customer data raises legitimate security and storage questions, and sending personal information offshore can also engage IPP 12, which restricts disclosures outside New Zealand.

IPP 6: Access to Personal Information

Individuals have the right to access their personal information held by an organisation.

AI implication: If an AI tool processes someone's personal information, can you retrieve and provide that information if they request it? Can you explain what the AI did with their data? This is more complex than it sounds, particularly if the AI provider doesn't offer detailed data retrieval mechanisms.

IPP 10: Limits on Use

Personal information should not be used for a purpose other than the one it was collected for, unless an exception applies (such as the individual's authorisation or where the information is publicly available).

AI implication: This is the big one for AI. If you collected someone's email address to send them invoices, you can't then use that email address (along with their purchase history) as input for an AI marketing analysis without a clear basis for doing so. The purpose must align with what the person reasonably expected when they provided their information.

IPP 11: Limits on Disclosure

Personal information should not be disclosed to other agencies or persons unless an exception applies.

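AI implication: When you input personal information into an AI tool, you are generally disclosing it to the AI provider, which is a separate organisation, particularly where the provider can keep or use your inputs for its own purposes such as model training. IPP 11 permits that only if an exception applies, such as the individual's authorisation or the disclosure being one of the purposes for which the information was obtained (or directly related to it). If a provider acts purely as your agent, holding or processing the information only on your behalf, section 11 of the Act may treat the information as still held by you rather than disclosed, but you then remain responsible for it under every other IPP.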

The Office of the Privacy Commissioner

The Office of the Privacy Commissioner (OPC) is the independent body responsible for enforcing the Privacy Act, and it has been increasingly active on AI-related issues.

The Privacy Commissioner has made public statements about AI, emphasising that:

- The Privacy Act applies to AI use — there's no "technology exception"

- Organisations using AI to process personal information must comply with the IPPs

- Transparency with individuals is essential — people should know when AI is involved in decisions about them

- Privacy impact assessments are recommended for any significant AI implementation

The OPC can investigate complaints and, under the 2020 Act, issue compliance notices requiring an organisation to take or stop a particular action. Complaints can also proceed to the Human Rights Review Tribunal, which can award damages of up to $350,000 for an interference with privacy.

Mandatory Breach Notification

One of the significant additions in the Privacy Act 2020 is the mandatory breach notification requirement. If a privacy breach causes or is likely to cause serious harm, the organisation must notify both the Privacy Commissioner and the affected individuals as soon as practicable.

AI implication: If an AI tool you're using experiences a data breach, and personal information you inputted is compromised, you may be obligated to report it. This is another reason to be very deliberate about what personal information you share with AI tools — and to choose providers with strong security track records.

Practical Compliance for AI Users

Here's what this all means in practice:

Before Using AI with Personal Information

- Ask: do I need to use personal information at all? Often, you can get the same AI assistance using anonymised or aggregated data. If you need help drafting a response to a customer complaint, you don't need to include their real name and address.

- Check the purpose. Is this AI use consistent with the purpose for which the information was originally collected? If a customer gave you their details to receive a service, using those details for AI-driven marketing analysis is a different purpose.

- Consider transparency. Would the individuals whose information you're using be surprised or concerned to learn their data was being processed by an AI tool? If the answer is yes, that's a signal to reconsider.

- Assess the provider. Where does the AI provider store data? What are their security practices? Do they use your inputs for model training? Choose providers whose practices align with your obligations under the Act.
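The first check above, removing personal details you don't need, can be partly automated before text goes into an AI tool. The Python sketch below is a minimal illustration, not a compliance tool: the regex patterns, the `redact` and `restore` helper names, and the assumption that you already know which names appear in the text are all hypothetical. Pattern matching misses plenty (street addresses, IRD numbers, details that identify someone indirectly), and swapping details for placeholders is pseudonymisation rather than true anonymisation, so a person should still review what is sent.

```python
import re

# Hypothetical patterns for illustration only; real personal information takes
# many more forms than these catch (addresses, IRD numbers, free-text details).
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Rough match for NZ-style phone numbers such as 021 123 4567 or +64 9 123 4567.
PHONE_RE = re.compile(r"(?:\+64|\b0)[\s-]?\d{1,2}[\s-]?\d{3}[\s-]?\d{3,4}\b")


def redact(text: str, known_names: list[str]) -> tuple[str, dict[str, str]]:
    """Replace emails, phone numbers and known names with placeholders.

    Returns the redacted text plus a placeholder -> original mapping that is
    meant to stay on your own systems, never sent to the AI tool.
    """
    mapping: dict[str, str] = {}  # placeholder -> original value
    seen: dict[str, str] = {}     # original value -> placeholder
    counts: dict[str, int] = {}   # running count per label

    def placeholder_for(value: str, label: str) -> str:
        # Reuse the same placeholder when a value appears more than once.
        if value not in seen:
            counts[label] = counts.get(label, 0) + 1
            seen[value] = f"[{label}_{counts[label]}]"
            mapping[seen[value]] = value
        return seen[value]

    text = EMAIL_RE.sub(lambda m: placeholder_for(m.group(0), "EMAIL"), text)
    text = PHONE_RE.sub(lambda m: placeholder_for(m.group(0), "PHONE"), text)
    # Longest names first, so "Aroha Smith" is handled before "Aroha".
    for name in sorted(known_names, key=len, reverse=True):
        pattern = re.compile(r"\b" + re.escape(name) + r"\b")
        text = pattern.sub(lambda m: placeholder_for(m.group(0), "NAME"), text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original details back into text returned by the AI tool."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


if __name__ == "__main__":
    note = "Aroha Smith (aroha@example.co.nz, 021 123 4567) asked about her invoice."
    safe_text, key = redact(note, ["Aroha Smith"])
    print(safe_text)  # [NAME_1] ([EMAIL_1], [PHONE_1]) asked about her invoice.
```

Keeping the mapping local means only placeholders reach the provider, and `restore` can reinsert the real details into a drafted reply afterwards.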

For Organisations

- Include AI in your privacy impact assessments. Any new AI implementation that involves personal information should be assessed for privacy risks.

- Update your privacy statements. If you're using AI to process personal information, your privacy policy should reflect this. Vague statements about "using technology to improve our services" probably aren't sufficient.

- Train your team. People need to understand that the Privacy Act applies to their use of AI tools, not just to traditional data handling. The person casually pasting customer records into ChatGPT may not realise they're potentially creating a compliance issue.

- Document your decisions. If you decide to use AI in ways that involve personal information, document your reasoning: why it's necessary, what safeguards are in place, and how it aligns with the IPPs.

Looking Ahead

The Privacy Act 2020 wasn't written with generative AI specifically in mind, but its principles are broad enough to cover most AI-related privacy concerns. The Privacy Commissioner has signalled that guidance specific to AI will continue to develop. The NZ AI Strategy, published by the government in July 2025, reinforces the importance of the Privacy Act as a foundation for responsible AI use in Aotearoa, sitting alongside the Algorithm Charter as part of the broader framework for safe and trustworthy AI adoption.

For now, the safest approach is to apply the same privacy principles to AI that you would to any other handling of personal information. The technology is new; the obligations aren't.

Key Takeaways

- The Privacy Act 2020 applies fully to AI use — there's no technology exception. Every organisation in NZ that handles personal information must comply.

- Key principles for AI users: purpose limitation (IPP 10), disclosure limits (IPP 11), storage security (IPP 5), and transparency (IPP 3).

- Inputting personal information into an AI tool is a disclosure to another organisation and must be treated as such.

- Mandatory breach notification means you could be required to report breaches involving AI tools — another reason to be cautious about what you share.

- Anonymise data wherever possible and choose AI providers whose practices support your compliance obligations.

Practical Exercise

Privacy Act Compliance Check (20 minutes)

Scenario: You work for a mid-size NZ company that provides professional services. A manager wants to start using an AI tool to:

- Summarise client meeting notes (which contain client names and business details)

- Draft follow-up emails to clients

- Analyse client feedback forms to identify trends

For each of these three use cases:

- Identify which Information Privacy Principles are most relevant.

- Recommend the steps the company should take to ensure compliance.

- Decide what the company should tell its clients about this AI use.

Finally, write a brief (2-3 sentence) addition to the company's privacy statement that addresses AI use.

Knowledge Check

Question 1: Under the Privacy Act 2020, when you paste a customer's personal information into ChatGPT, this is best described as:

A) Internal use, because the AI is just a tool like a calculator

B) A disclosure of personal information to another organisation (the AI provider)

C) Not covered by the Privacy Act because AI is too new

D) Perfectly fine as long as you delete the conversation afterwards

Answer: B — The AI provider is a separate organisation. Inputting personal information into their tool constitutes disclosure under the Act, and must comply with IPP 11 (limits on disclosure).

Question 2: A small accounting firm in Hamilton has five staff. Does the Privacy Act 2020 apply to their use of AI tools?

A) No, the Act only applies to organisations with more than 20 employees

B) No, accounting firms are exempt from the Privacy Act

C) Yes, the Act applies to every person and organisation in NZ that handles personal information, regardless of size

D) Only if they're using AI tools that are hosted in New Zealand

Answer: C — The Privacy Act 2020 has no small business exemption. It applies to every person and organisation in New Zealand that collects, holds, or uses personal information.

Question 3: Your company experiences a data breach at an AI provider you've been using to process client information. Under the Privacy Act 2020, what are you potentially required to do?

A) Nothing, because it's the AI provider's responsibility, not yours

B) Notify the Privacy Commissioner and affected individuals if the breach is likely to cause serious harm

C) Delete your account with the AI provider

D) Pay an automatic fine to the Privacy Commissioner

Answer: B — The Privacy Act 2020 introduced mandatory breach notification. If a breach is likely to cause serious harm, the organisation must notify both the Privacy Commissioner and the affected individuals.

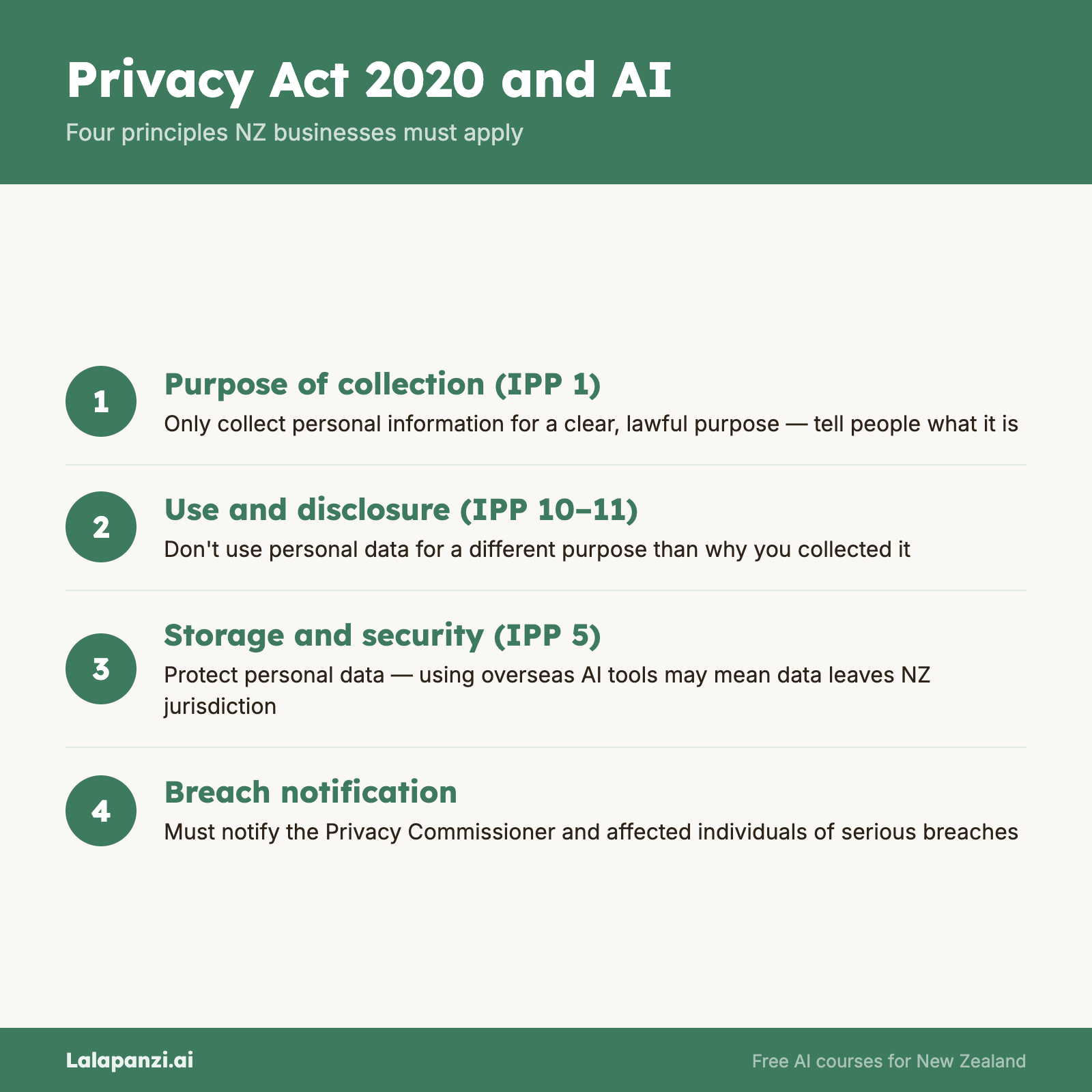

Visual overview