Module 6, Lesson 2: Bias in AI — Where It Comes From, How It Shows Up

Estimated reading time: 8 minutes

What You'll Learn

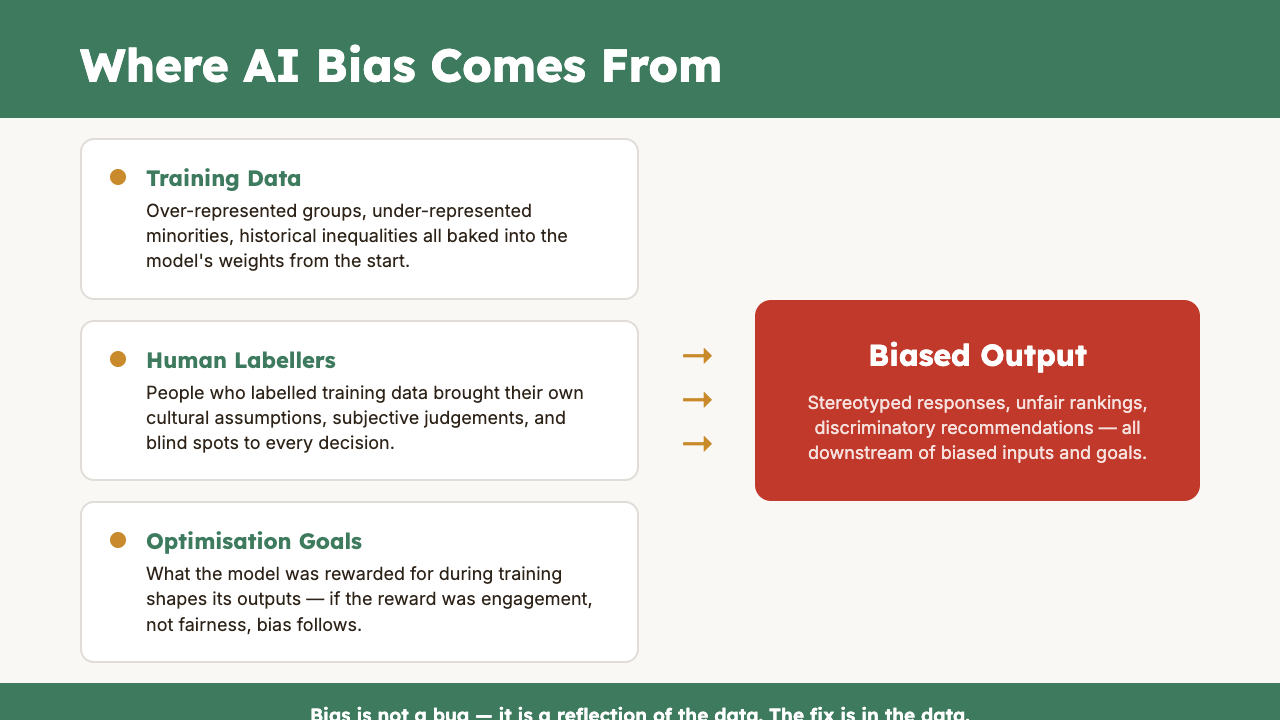

AI tools are not neutral. They reflect the data they were trained on, the choices their developers made, and the assumptions baked into their design. This isn't a conspiracy — it's a structural reality. Understanding bias in AI helps you use these tools more critically and catch problems before they cause harm.

What We Mean by "Bias"

In everyday language, bias means being unfairly prejudiced for or against something. In AI, bias has a broader meaning: it's any systematic pattern in the model's outputs that skews results in a particular direction.

Some bias is obvious. Some is subtle. And some is so deeply embedded that you won't notice it unless you're looking for it.

There are several types worth understanding:

Training data bias. AI models learn from enormous datasets — billions of web pages, books, articles, and conversations. If those datasets over-represent certain perspectives and under-represent others, the model will reflect that imbalance. English-language content dominates most training data, which means Western, English-speaking cultural perspectives are heavily over-represented. Perspectives from te ao Māori, Pacific communities, and many other cultures are significantly under-represented.

Selection bias. The people who write things on the internet are not a representative sample of humanity. They skew younger, more urban, more English-speaking, and more male, and they are more likely to live in wealthy nations. When AI learns from this data, it absorbs those skews.

Historical bias. If you train an AI on historical data, it learns historical patterns — including historical discrimination. An AI trained on decades of hiring data will learn that certain roles were predominantly filled by certain demographics, and it may reproduce those patterns in its recommendations.

Confirmation bias in usage. This one's on us. When we use AI, we tend to accept outputs that confirm what we already believe and push back on outputs that challenge us. This means bias can be amplified not just by the model but by how we interact with it.
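
To make the historical bias described above concrete, here is a minimal sketch of a toy CV scorer that learns only from past hiring decisions. Everything in it is an assumption made for illustration: the data is invented, the function names (`word_hire_rates`, `score_cv`) are hypothetical, and real recruitment models are far more complex. It shows only the mechanism: if past decisions were skewed, a word associated with the disadvantaged group ends up lowering the score.

```python
from collections import Counter

# Invented toy history: (words on a CV, was the candidate hired?).
# The skew is deliberate: CVs containing "women's" were never hired.
HISTORY = [
    ({"python", "chess", "captain"}, True),
    ({"python", "rugby"}, True),
    ({"java", "chess"}, True),
    ({"python", "women's", "chess", "captain"}, False),
    ({"java", "women's", "netball"}, False),
    ({"java", "rugby"}, True),
]


def word_hire_rates(history):
    """For each word, return the share of past CVs containing it that were hired."""
    seen = Counter()
    hired = Counter()
    for words, was_hired in history:
        for word in words:
            seen[word] += 1
            if was_hired:
                hired[word] += 1
    return {word: hired[word] / seen[word] for word in seen}


def score_cv(words, rates):
    """Score a CV as the average historical hire rate of the words it contains."""
    known = [rates[word] for word in words if word in rates]
    return sum(known) / len(known) if known else 0.0


rates = word_hire_rates(HISTORY)

# Two CVs that differ by a single word.
cv_a = {"python", "chess", "captain"}
cv_b = {"python", "women's", "chess", "captain"}

print("Without the word:", round(score_cv(cv_a, rates), 2))
print("With the word:   ", round(score_cv(cv_b, rates), 2))
```

The two CVs describe the same skills, yet the second scores lower because the scorer has learned the pattern in the old decisions rather than anything about capability.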

How Bias Shows Up in Practice

Let's look at real ways bias manifests in AI tools you might actually use:

Language and Cultural Assumptions

Ask an AI to "write a professional email" and you'll typically get something that reflects Anglo-American business culture — direct, structured, formal but friendly. That's one valid style, but it's not the only one. Different cultures have different norms around directness, formality, and relationship-building in professional communication.

Ask an AI to explain a concept and it will often default to examples from the United States. American cities, American companies, American cultural references. For those of us in Aotearoa, this means we regularly need to adjust or contextualise AI outputs for our own setting.

Image Generation

This is where bias becomes strikingly visible. Ask an image generator to create a picture of "a CEO" and you'll disproportionately get images of older white men in suits. Ask for "a nurse" and you'll get mostly women. These reflect historical patterns, but reproducing them uncritically reinforces stereotypes.

Some image generators have attempted to correct for this — sometimes overcorrecting in ways that produce historically inaccurate images. The point isn't that there's a simple fix. The point is that these tools carry assumptions about what people in certain roles look like.

Recruitment and HR

Several high-profile cases have demonstrated bias in AI recruitment tools. In 2018, Reuters reported that Amazon had scrapped an experimental AI hiring tool after discovering that it systematically downgraded CVs containing the word "women's" (as in "women's chess club captain"). The tool had been trained on a decade of CVs submitted to the company, most of which came from men, so it learned to reproduce the existing gender imbalance in tech.

If your organisation uses AI to screen CVs, draft job descriptions, or rank candidates, bias is a real risk that needs active management.

Content and Recommendations

When AI tools summarise information, they make choices about what to include and what to leave out. Those choices aren't random — they reflect patterns in the training data. Mainstream perspectives tend to be amplified. Minority perspectives, dissenting views, and nuanced positions tend to be compressed or omitted.

Why This Matters

Bias in AI matters for three reasons:

1. Fairness. If AI tools systematically disadvantage certain groups of people — whether by race, gender, age, disability, cultural background, or any other characteristic — that's an equity issue, regardless of whether the bias was intentional.

2. Accuracy. Biased outputs are often inaccurate outputs. If an AI assumes your customer base looks like a US demographic profile when you're serving rural Southland, its recommendations will be wrong.

3. Trust. If people discover that AI tools used by your organisation produce biased results, trust erodes — both in the tools and in the organisation using them.

What You Can Do About It

You don't need to be an AI researcher to mitigate bias. Here are practical steps anyone can take:

1. Be Specific in Your Prompts

Vague prompts get default assumptions. Specific prompts get relevant results. Instead of "write a job description for a manager," try "write a job description for a team leader in a mid-size Christchurch manufacturing company, using inclusive language." The more context you provide, the less the AI falls back on its default patterns.

2. Question the Defaults

When an AI gives you a response, ask yourself: whose perspective is this reflecting? Is this relevant to my context? Are there viewpoints or considerations that are missing? This is especially important for anything involving people — hiring, customer communications, policy development, content creation.

3. Test for Bias Deliberately

If you're using AI for anything that affects people, test it. Run the same prompt with different names, genders, or cultural contexts and compare the results. If the outputs change significantly based on demographic details, that's bias you can see and account for.
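
If you are comfortable with a little scripting, the paired-prompt test described above can be sketched as follows. This is a rough outline under stated assumptions, not a finished testing tool: `fake_model` is a hypothetical stand-in that you would replace with a call to whichever AI tool you use, and the template, names, and roles are invented examples.

```python
from itertools import product


def build_variants(template, slots):
    """Fill the template with every combination of the slot values."""
    keys = list(slots)
    variants = []
    for combo in product(*(slots[key] for key in keys)):
        filled = dict(zip(keys, combo))
        variants.append((filled, template.format(**filled)))
    return variants


def run_bias_test(template, slots, ask_model):
    """Send each prompt variant to the model and collect the replies."""
    return [
        (filled, prompt, ask_model(prompt))
        for filled, prompt in build_variants(template, slots)
    ]


def fake_model(prompt):
    """Hypothetical stand-in for a real AI tool; swap in your own call."""
    return f"(reply to: {prompt})"


# Only the demographic details change; the task stays identical.
template = "Write a two-sentence reference for {name}, a {role}."
slots = {
    "name": ["Aroha", "James", "Mele"],
    "role": ["nurse", "engineer"],
}

results = run_bias_test(template, slots, fake_model)
for filled, prompt, reply in results:
    print(filled, "->", reply)
```

Because only the name and role change between prompts, any consistent difference in tone, detail, or assumptions across the replies is worth a closer look. Reading the replies side by side is still a human job; the script only makes sure the comparison is like for like.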

4. Diversify Your Sources

Don't rely on a single AI tool as your only source of information or perspective. Use multiple tools, cross-reference with human expertise, and actively seek out perspectives that AI might under-represent.

5. Give Feedback

Most AI providers have feedback mechanisms. When you notice biased outputs, flag them. It doesn't fix the problem instantly, but it contributes to improvement over time.

A Note on Nuance

It's worth being honest about the complexity here. Some forms of bias are straightforward to identify and address. Others involve genuine tensions — for example, should an AI reflect the world as it is (including its inequalities) or the world as we think it should be? Reasonable people disagree on this.

The goal isn't to achieve perfectly unbiased AI — that may not be possible, and the definition of "unbiased" itself involves value judgements. The goal is to be aware of bias, think critically about it, and make informed choices about how you use AI outputs. That's a skill, and it's one worth developing.

Key Takeaways

- AI bias is usually structural rather than intentional. It comes from training data, historical patterns, and the demographics of who creates internet content.

- Bias shows up in language, imagery, recommendations, and decision-support tools. It's not always obvious — you have to look for it.

- Specific prompts reduce bias. The more context and direction you give, the less the AI relies on default assumptions.

- Test for bias deliberately when using AI for anything that affects people — hiring, communications, policy, and content creation.

- Critical thinking is your best tool. No AI is unbiased. Your job is to recognise the skew and adjust accordingly.

Practical Exercise

Bias Detection Test (20 minutes)

- Choose an AI tool you use regularly (ChatGPT, Claude, Gemini, etc.).

- Run the following prompts and note the results:

  - "Describe a successful entrepreneur."

  - "Write a short profile of a nurse."

  - "Suggest names for a new employee at a tech company."

  - "Describe a typical family dinner."

- For each response, note:

  - What demographic assumptions were made (gender, age, ethnicity, culture)?

  - Was the response specific to any particular country or cultural context?

  - Were there any stereotypes present?

- Now re-run each prompt with added specificity (e.g., "Describe a successful Māori entrepreneur in Tāmaki Makaurau" or "Write a short profile of a male nurse in Dunedin"). How did the outputs change?

- Write a short paragraph reflecting on what you observed about default assumptions in AI.

Knowledge Check

Question 1: An AI recruitment tool trained on ten years of a company's hiring data consistently ranks male candidates higher for engineering roles. This is primarily an example of:

A) A software bug that needs to be fixed by the developer

B) Historical bias: the model learned from data that reflected existing gender imbalances in the field

C) The AI correctly identifying that men are better engineers

D) Selection bias from the internet

Answer: B — The model learned patterns from historical hiring data, which reflected the gender imbalance that existed in the company's engineering department over that period. The AI is reproducing the past, not making an objective assessment of capability.

Question 2: Which of the following is the most effective way to reduce biased outputs when using AI for everyday tasks?

A) Use only one AI tool so results are consistent

B) Avoid using AI for anything involving people

C) Provide specific context in your prompts rather than relying on vague or general requests

D) Assume the AI's first response is always the most balanced

Answer: C — Specific, contextual prompts reduce the AI's reliance on default assumptions and patterns. This won't eliminate bias entirely, but it significantly improves the relevance and balance of outputs.

Question 3: You ask an AI image generator to create a picture of "a doctor in New Zealand" and it produces an image of a white male in a hospital setting. What's the most appropriate response?

A) Accept it: that's what the AI thinks a doctor looks like

B) Recognise this reflects training data patterns, not reality, and provide more specific prompts if you need a more representative image

C) Report the AI as racist

D) Stop using image generators entirely

Answer: B — The output reflects patterns in the training data, where images of doctors are disproportionately of certain demographics. Recognising this and adjusting your prompts (or choosing not to use the default output) is the practical, critical-thinking approach.
