Module 6, Lesson 5: Workplace Policies and Environmental Considerations

Estimated reading time: 7 minutes

What You'll Learn

Using AI at work isn't just about knowing how to prompt. It's about understanding the rules — both the ones your organisation has set and the ones that should exist even if they don't yet. This lesson covers how to navigate AI in a workplace context and introduces a topic most AI courses skip entirely: the environmental cost of running these tools.

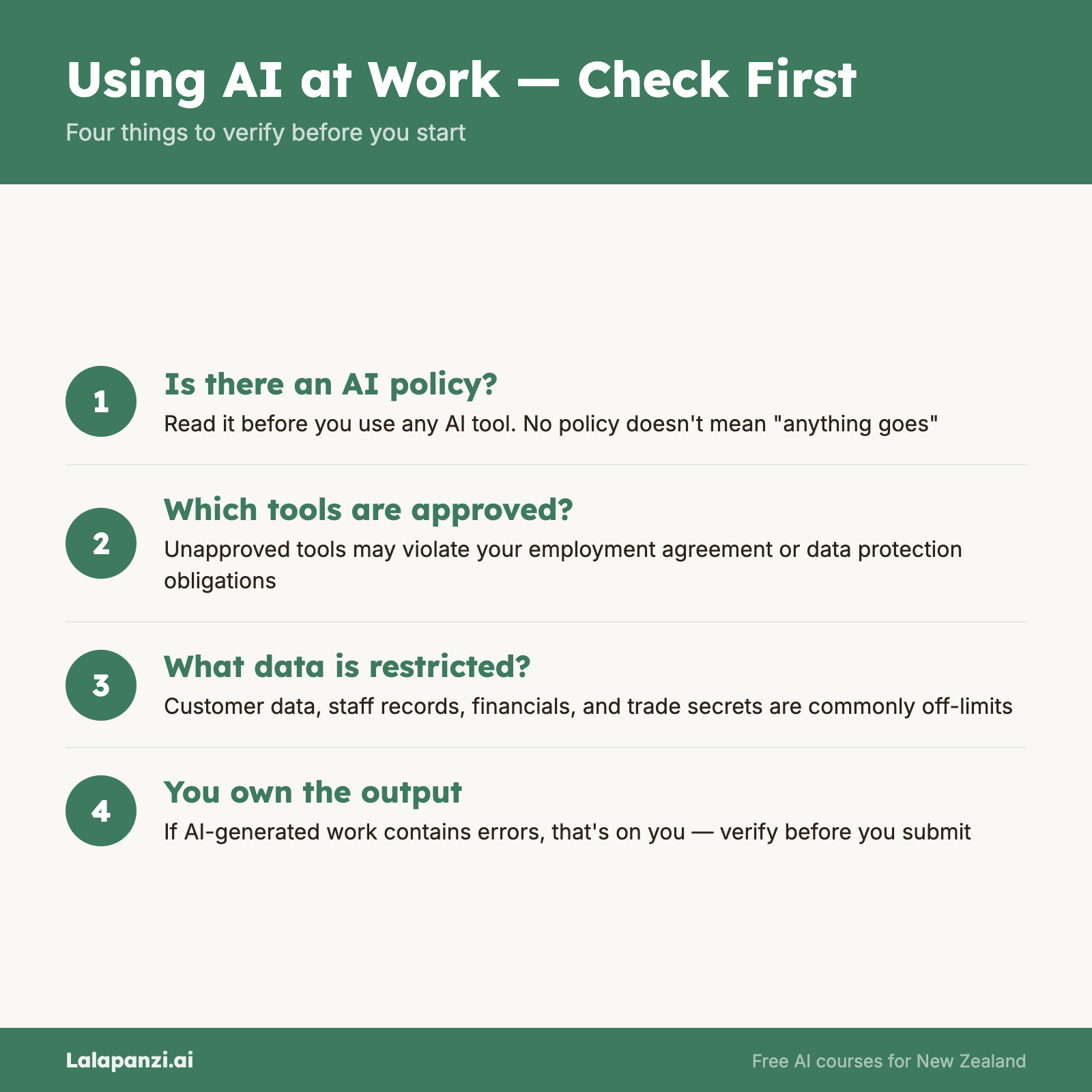

AI at Work: Check Before You Click

Before using AI tools for work, you need to know where your organisation stands. Many workplaces now have AI usage policies, and many more should but don't yet. In 2026, the focus has shifted from whether to use AI to how to use it within a socio-technical framework: one that considers not just the tool and the policy, but also the people and the social context in which the AI is deployed.

What to Check

Does your workplace have an AI policy? This is the first question. If the answer is yes, read it. If the answer is no, that doesn't mean "anything goes" — it means there's a gap you should be cautious about.

Which tools are approved? Some organisations have approved specific AI tools (often enterprise versions with stronger data protection) and prohibited others. Using an unapproved tool might violate your employment agreement, data protection obligations, or industry regulations.

What data can you input? Most workplace AI policies restrict what types of information you can enter into AI tools. Customer data, employee records, financial information, and trade secrets are commonly restricted. Even if there's no explicit policy, the principles from Lesson 1 (privacy) and Lesson 3 (IP) apply.

Do you need to disclose AI use? Some organisations require employees to disclose when AI has been used to produce work. This is particularly common in legal, academic, and regulated industries. Know the expectation before you submit AI-assisted work.

Who is responsible for the output? This is critical: if you use AI to produce something and it contains errors, you are responsible. Not the AI, not the AI provider. You. AI tools don't come with professional indemnity insurance. If you submit a report with a fabricated statistic because you didn't verify the AI's output, that's on you.

If Your Workplace Doesn't Have a Policy

Many organisations — particularly smaller ones — haven't caught up yet. If that's your situation, here are sensible default practices:

- Don't input confidential or personal information into AI tools, especially free-tier tools.

- Use AI as a drafting aid, not a final authority. Review and verify everything.

- Be transparent with your manager about how you're using AI. No one wants to find out through a complaint, rather than a conversation, that a team member has been feeding client data into ChatGPT.

- Suggest a policy. Raising this with leadership isn't just helpful — it shows initiative and awareness. You could even use the exercise at the end of this lesson as a starting point.

Professional and Industry Obligations

Some professions and industries have specific requirements around AI use:

- Legal professionals must ensure AI-generated legal research is verified. Several overseas cases have involved lawyers citing AI-generated case law that didn't exist.

- Healthcare professionals must ensure any AI-assisted clinical decisions comply with relevant standards and that patient data is handled appropriately.

- Financial advisers operate under obligations that require them to provide personalised, considered advice — not AI-generated boilerplate.

- Teachers and academics face evolving expectations around AI use in assessment and course development.

- Public servants may be subject to additional requirements around transparency, data sovereignty, and the use of automated decision-making.

If you're in a regulated profession, check your professional body's current guidance on AI use. Many are updating their positions regularly.

The Environmental Cost of AI

This is the part most AI courses leave out. Running large language models takes significant computational resources, and those resources have real environmental impacts.

The Energy Question

Training a large AI model is enormously energy-intensive. Estimates vary, and OpenAI has not published official figures, but training a model like GPT-4 is thought to have used as much electricity as hundreds (by some estimates, thousands) of homes consume in a year. And that's just training: every time you send a prompt, inference (generating the response) also uses energy, though far less per query.

To put this in context: one widely cited estimate puts a single ChatGPT query at roughly 10 times the energy of a standard Google search, although more recent estimates for newer, more efficient models are considerably lower. Either way, it's worth keeping perspective: the energy used by one query is tiny in absolute terms. The concern is scale. With hundreds of millions of people using AI tools daily, the aggregate energy consumption is substantial and growing.

Water Usage

Data centres need cooling, and cooling often requires water. Microsoft's environmental report published in 2023 showed a 34% increase in its global water consumption from 2021 to 2022, a rise that has been linked in part to its growing AI workloads. In regions facing water scarcity, this is a meaningful concern.

What This Means for You

This isn't about feeling guilty for using ChatGPT. It's about being an informed user:

Use AI purposefully, not reflexively. If you're asking the AI a question you could answer with a 10-second search, that's an unnecessary use of resources. AI tools are most valuable for tasks where they genuinely save time or improve quality — not as a replacement for basic effort.

Be efficient with your prompts. Well-crafted prompts that get useful results on the first or second try use fewer resources than vague prompts that require ten rounds of back-and-forth. Good prompt engineering isn't just about better results — it's also about efficiency.

Consider the model size. Not every task requires the most powerful (and energy-intensive) model. Many AI providers offer smaller, faster models alongside their flagship ones. Using GPT-4o mini instead of GPT-4o for a simple email draft is both faster and less resource-intensive.

Advocate for transparency. As AI users, we can push providers to be more transparent about the environmental impact of their services. Some companies are already publishing sustainability reports that include AI-related metrics. Supporting and demanding this transparency helps the whole ecosystem improve.

Keeping Perspective

The environmental impact of AI is real and worth taking seriously, but it exists alongside the environmental costs of many other technologies and industries. The internet itself, streaming video, cryptocurrency, air travel — all have significant footprints.

The constructive response isn't to stop using AI. It's to use it thoughtfully, push for greater efficiency and renewable energy in AI infrastructure, and factor environmental considerations into how we evaluate and adopt these tools.

Key Takeaways

- Check your workplace AI policy before using AI tools for work. If one doesn't exist, follow sensible defaults and suggest creating one.

- You are responsible for AI outputs. If you submit AI-assisted work containing errors, the accountability is yours.

- Regulated professions have additional obligations — check your professional body's current AI guidance.

- AI has a real environmental footprint — training and running models consumes significant energy and water.

- Use AI purposefully and efficiently. Good prompts, appropriate model selection, and intentional use reduce both errors and environmental impact.

Practical Exercise

Draft an AI Usage Policy (30 minutes)

Create a simple AI usage policy for a small team (5-10 people) in an organisation of your choice. Your policy should address:

- Approved tools: Which AI tools can the team use? (Pick 2-3 and explain why)

- Data restrictions: What types of information must NOT be entered into AI tools?

- Verification requirements: What level of review is expected before AI-assisted work is submitted or shared?

- Disclosure: When should AI use be disclosed to clients, stakeholders, or managers?

- Responsibility: Who is accountable for the accuracy of AI-assisted outputs?

Keep it to one page. The best policies are short, clear, and practical — not 20-page documents that nobody reads.

Bonus: Share your draft policy with a colleague or manager and ask for their feedback.

Knowledge Check

Question 1: Your workplace doesn't have an AI usage policy. A colleague asks you to paste a spreadsheet of customer names and purchase histories into ChatGPT to help generate a marketing report. What should you do?

A) Go ahead; there's no policy saying you can't
B) Decline and explain that sharing identifiable customer data with a third-party AI tool raises privacy and data protection concerns, regardless of whether there's a formal policy
C) Do it but delete the conversation afterwards
D) Use a different AI tool instead of ChatGPT

Answer: B — The absence of a policy doesn't eliminate your obligations around data protection and privacy. Sharing identifiable customer information with any third-party AI tool is problematic, and you should flag this as a situation that highlights the need for a policy.

Question 2: Which of the following best describes the environmental impact of using AI tools?

A) AI has no significant environmental impact because it's digital
B) AI tools consume energy and water through the data centres that run them, with impact scaling with usage volume and model size
C) Only training AI models has environmental impact; using them (inference) is essentially free
D) AI's environmental impact is so large that individuals should stop using it immediately

Answer: B — Both training and running (inference) AI models consume energy, and the data centres that power them also use significant water for cooling. The impact scales with how many people use the tools and how large the models are.

Question 3: You use AI to draft a client report. The report contains a factual error that leads to the client making a poor business decision. Who is responsible?

A) The AI provider, because their model generated the incorrect information
B) No one; errors are an accepted risk of using new technology
C) You (and your organisation), because you submitted the work and are accountable for its accuracy
D) The client, because they should have verified the information themselves

Answer: C — Professional accountability doesn't transfer to AI tools. When you submit work — whether AI-assisted or not — you and your organisation are responsible for its accuracy. This is why human review and verification are essential.

Visual overview