Module 6, Lesson 4: Accuracy, Verification, and the Human-in-the-Loop Principle

Estimated reading time: 8 minutes

What You'll Learn

AI tools are impressively fluent. They produce text that reads well, sounds confident, and is often genuinely helpful. But fluency is not the same as accuracy. One of the most important skills in working with AI is knowing when to trust its outputs and when to verify them — and always keeping a human in the decision-making loop for anything that matters.

The Confidence Problem

Here's the core issue: AI models have no reliable sense of where their own knowledge ends. They generate text by predicting the most likely next word based on patterns in their training data. When the training data is rich and consistent on a topic, the AI's output is usually reliable. When the data is sparse, contradictory, or absent, the AI often doesn't say "I'm not sure about this." It generates a plausible-sounding response anyway.

This is what people call "hallucination" — the AI producing information that sounds authoritative but is partially or completely fabricated. It might invent a citation that doesn't exist, misattribute a quote, get a date wrong, or confidently state something that contradicts established facts.

The dangerous part is that hallucinated content looks exactly the same as accurate content. There's no formatting difference, no tone shift, no asterisk. The AI delivers a fabricated legal precedent with the same calm confidence as a well-established scientific fact.

When AI Is Most Likely to Get Things Wrong

Understanding the patterns helps you know where to be most careful:

Specific facts and figures. Dates, statistics, addresses, phone numbers, prices — anything precise and verifiable. AI models are trained on data with a cutoff date, and they sometimes blend, misremember, or fabricate specific details.

Recent events. If something happened after the model's training data cutoff, the AI either won't know about it or will extrapolate (guess) based on older patterns. Always check when a model's training data ends.

Niche or specialised topics. The less a topic appears in the training data, the more likely the AI is to fill gaps with plausible-sounding but inaccurate content. This is particularly relevant for New Zealand–specific information, where there's simply less training data available than for US or UK topics.

Citations and references. AI tools are notorious for generating fake academic citations — real-sounding author names, plausible journal titles, and completely fabricated papers. Never cite an AI-suggested reference without verifying it actually exists.

Legal, medical, and financial specifics. AI can provide useful general information on these topics, but the details matter enormously in professional contexts. A small error in a legal clause, drug dosage, or tax calculation can have serious consequences.

Maths and logic. Large language models are fundamentally text predictors, not calculators. They can get basic arithmetic wrong and sometimes produce logically flawed reasoning while presenting it convincingly.
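One way to act on the maths point is to recompute any figure an AI gives you instead of taking it on trust. The sketch below is a minimal illustration in Python. The function name `check_claimed_total`, the invoice amounts, and the claimed total are all hypothetical, invented for this example rather than taken from any real tool.

```python
from decimal import Decimal

def check_claimed_total(amounts, claimed_total):
    """Recompute a sum independently and compare it with the AI's claimed figure.

    Amounts are passed as strings so Decimal can parse them exactly,
    avoiding floating-point rounding surprises.
    """
    actual = sum((Decimal(a) for a in amounts), Decimal("0"))
    return actual == Decimal(claimed_total), actual

# Hypothetical example: an AI summary says three invoices total 1284.40.
matches, actual = check_claimed_total(["412.50", "389.95", "472.95"], "1284.40")
print(matches, actual)  # False 1275.40
```

Here the claimed total looks plausible but is out by nine dollars, which is exactly the kind of error that is easy to miss when the surrounding text reads well. A spreadsheet or a calculator does the same job; the point is that the check is done by something other than the language model.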

The Human-in-the-Loop Principle

The concept is simple: a human should always be involved in reviewing, validating, and making decisions based on AI outputs. AI generates; humans verify and decide.

This isn't about distrusting AI. It's about using it appropriately — as a powerful tool that augments human judgement rather than replacing it.

Here's what human-in-the-loop looks like in practice:

For Everyday Tasks

When you use AI to draft an email, you read it before sending. You check that the tone is right, the facts are correct, and it says what you actually mean. Most people do this naturally — it's the same instinct that makes you proofread anything before sending it.

For Research and Analysis

When AI provides information, you verify key claims against reliable sources. You don't cite an AI tool as your source — you use it to find leads, then confirm them. If you're writing a report, the AI might help you draft it, but the facts and figures come from verified sources.

For Decision-Making

This is where human-in-the-loop is most critical. AI can summarise data, identify patterns, and present options. But the decision itself — especially when it affects people — should be made by a human who understands the context, can weigh ethical considerations, and is accountable for the outcome.

Consider a manager using AI to help rank job applicants. The AI might score candidates based on CV keywords, but the manager should review the rankings, consider factors the AI might miss (career breaks, non-traditional experience, cultural fit), and make the final call. The AI informs the decision; it doesn't make it.
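If your organisation builds AI into a workflow like this, the same principle can be enforced in software. The following is a rough sketch only, assuming a hypothetical `Recommendation` record and `shortlist` function; the candidate names and scores are made up. It shows one possible design in which an AI score is stored for the reviewer to see but nothing happens until a named person approves it.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Recommendation:
    """An AI suggestion that has no effect until a named human signs it off."""
    candidate: str
    ai_score: float
    reviewer: Optional[str] = None
    approved: Optional[bool] = None
    notes: str = ""

    def review(self, reviewer: str, approved: bool, notes: str = "") -> None:
        # Record who made the call, so accountability sits with a person.
        self.reviewer = reviewer
        self.approved = approved
        self.notes = notes

def shortlist(recommendations):
    """Return only candidates a human has explicitly approved.

    The AI score is deliberately ignored here: it informs the reviewer,
    but it never decides who is shortlisted.
    """
    return [r.candidate for r in recommendations if r.approved is True]

# Hypothetical data: scores an AI tool might assign from CV keywords.
recs = [
    Recommendation("Candidate A", ai_score=0.91),
    Recommendation("Candidate B", ai_score=0.64),
    Recommendation("Candidate C", ai_score=0.88),
]

print(shortlist(recs))  # [] because nothing has been reviewed yet

recs[0].review("hiring manager", approved=True)
recs[1].review("hiring manager", approved=True,
               notes="Career break explains the gap; strong relevant experience.")
# Candidate C is left unreviewed, so a high score alone does not get them through.
print(shortlist(recs))  # ['Candidate A', 'Candidate B']
```

Note that Candidate B, with the lowest AI score, makes the shortlist because a human spotted context the scoring missed, while Candidate C stays off it until someone has actually looked.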

For High-Stakes Outputs

Anything that will be published, submitted to a client, used in a legal context, or acted upon in a way that affects people's lives or livelihoods needs human review. Full stop. This includes:

- Reports and recommendations

- Client deliverables

- Public communications

- Policy documents

- Financial calculations

- Medical or health-related information

Practical Verification Techniques

Here are specific things you can do to verify AI outputs:

1. Cross-reference key facts. Pick the three or four most important factual claims in any AI output and verify them independently. Use official sources, reputable publications, or domain experts.

2. Check citations. If the AI provides references, search for them. Do they exist? Do they say what the AI claims they say? This usually takes about a minute per citation and can save you significant embarrassment.

3. Ask the AI to show its reasoning. Prompt with "explain your reasoning" or "what are you basing this on?" The response won't tell you if it's hallucinating, but it can reveal logical gaps or circular reasoning.

4. Use multiple tools. Run the same question through different AI models. If they give consistent answers, that increases (but doesn't guarantee) confidence. If they diverge significantly, investigate further.

5. Apply the "would I bet on this?" test. Before acting on any AI-generated information, ask yourself: would I bet real money that this is correct? If the answer is no, verify before proceeding.

6. Check the date. Is the information current? Models have training cutoffs, and the world moves on. Anything time-sensitive — regulations, prices, personnel, organisational details — needs a freshness check.
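Techniques 4 and 6 above can be partly automated if you handle many AI outputs. The sketch below is a simplified illustration, not a finished tool: the function names `agreement` and `needs_freshness_check`, the sample answers, the dates, and the 365-day threshold are all assumptions chosen for the example.

```python
from collections import Counter
from datetime import date

def agreement(answers):
    """Return the most common answer and the share of tools that gave it.

    Answers are compared after trimming whitespace and lowercasing, which is
    a crude match; real answers usually need a human to judge equivalence.
    """
    normalised = [a.strip().lower() for a in answers]
    top_answer, count = Counter(normalised).most_common(1)[0]
    return top_answer, count / len(normalised)

def needs_freshness_check(as_of, today, max_age_days=365):
    """Flag information older than max_age_days (an arbitrary threshold)."""
    return (today - as_of).days > max_age_days

# Hypothetical answers from three different AI tools to the same question.
answers = ["15%", " 15% ", "12.5%"]
top, share = agreement(answers)
if share < 1.0:
    print(f"Tools disagree ({share:.0%} said {top}); check an official source.")

# Hypothetical dates: a figure dated 1 March 2023, checked on 1 June 2025.
print(needs_freshness_check(date(2023, 3, 1), date(2025, 6, 1)))  # True
```

Even full agreement would only raise confidence, not prove correctness, because different models can share the same gaps in their training data. Both checks are prompts for a human to go and look, not substitutes for looking.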

Building Good Habits

The most effective AI users aren't the ones who blindly trust the tools or the ones who refuse to use them. They're the ones who've built a habit of critical engagement:

- They use AI to accelerate their work, not to replace their thinking.

- They verify anything important before acting on it.

- They know the difference between a first draft (which AI is great at) and a final product (which needs human judgement).

- They're comfortable saying "the AI got this wrong" and correcting it.

This is a skill that improves with practice. The more you use AI tools, the better you get at sensing when something feels off — when a claim is a little too neat, a statistic a little too round, or a recommendation a little too generic.

Trust your instincts. Verify when it matters. Keep humans in the loop.

Key Takeaways

- AI is fluent but not always accurate. It can generate fabricated information with the same confidence as verified facts.

- Hallucination is built into how language models work, not an occasional glitch. They predict likely text rather than retrieving verified information.

- The human-in-the-loop principle means humans always review, verify, and decide — especially for high-stakes outputs.

- Verification is a skill and a habit. Cross-reference key facts, check citations, use multiple tools, and apply common sense.

- AI is a powerful drafting and research tool, but the final product is your responsibility.

Practical Exercise

Accuracy Audit (20 minutes)

- Ask an AI tool to write a 200-word summary of a topic you know well — your industry, your city, a hobby, your profession.

- Read the summary carefully. Highlight or note:

  - Any factual errors (dates, names, statistics, claims)

  - Any statements that are technically true but misleading

  - Any omissions: important things that should have been mentioned but weren't

  - Anything that feels "off" even if you can't immediately pinpoint why

- For each error or concern, verify against a reliable source.

- Rewrite the summary yourself, correcting the errors and adding what was missing.

- Reflect: how confident would you have been in the original summary if it had been about a topic you didn't know well? What does this tell you about using AI outputs on unfamiliar subjects?

Knowledge Check

Question 1: An AI tool provides you with a statistic: "According to a 2024 University of Auckland study, 73% of NZ small businesses use AI tools regularly." You should:

A) Include it in your report; the AI cited a specific source, so it must be accurate

B) Search for the specific study to verify it exists and actually states this figure before using it

C) Round it to "about 70%" to be safe

D) Assume it's wrong because AI always makes up statistics

Answer: B — AI tools frequently generate plausible-sounding but fabricated citations and statistics. The only way to know if this is real is to verify it. The study may not exist at all, or it may exist but state a different figure.

Question 2: The human-in-the-loop principle means:

A) A human should type the prompts and an AI should make the decisions

B) A human should always be involved in reviewing AI outputs and making final decisions, especially for high-stakes situations

C) AI tools should never be used without a human watching the screen at all times

D) Only humans trained in AI should be allowed to use AI tools

Answer: B — Human-in-the-loop means that AI assists and informs, but humans review, verify, and make the final decisions. This is especially critical when outcomes affect people, money, or organisational reputation.

Question 3: Which type of content is AI MOST likely to get wrong?

A) A general explanation of how photosynthesis works

B) A specific recent statistic about New Zealand's AI adoption rate

C) A summary of a well-known historical event

D) A list of common cooking ingredients

Answer: B — Specific, recent, and niche content is where AI is most prone to errors. NZ-specific statistics are niche (less training data), specific (exact figures), and potentially recent (after the training cutoff). General, well-established topics are much less likely to contain errors.

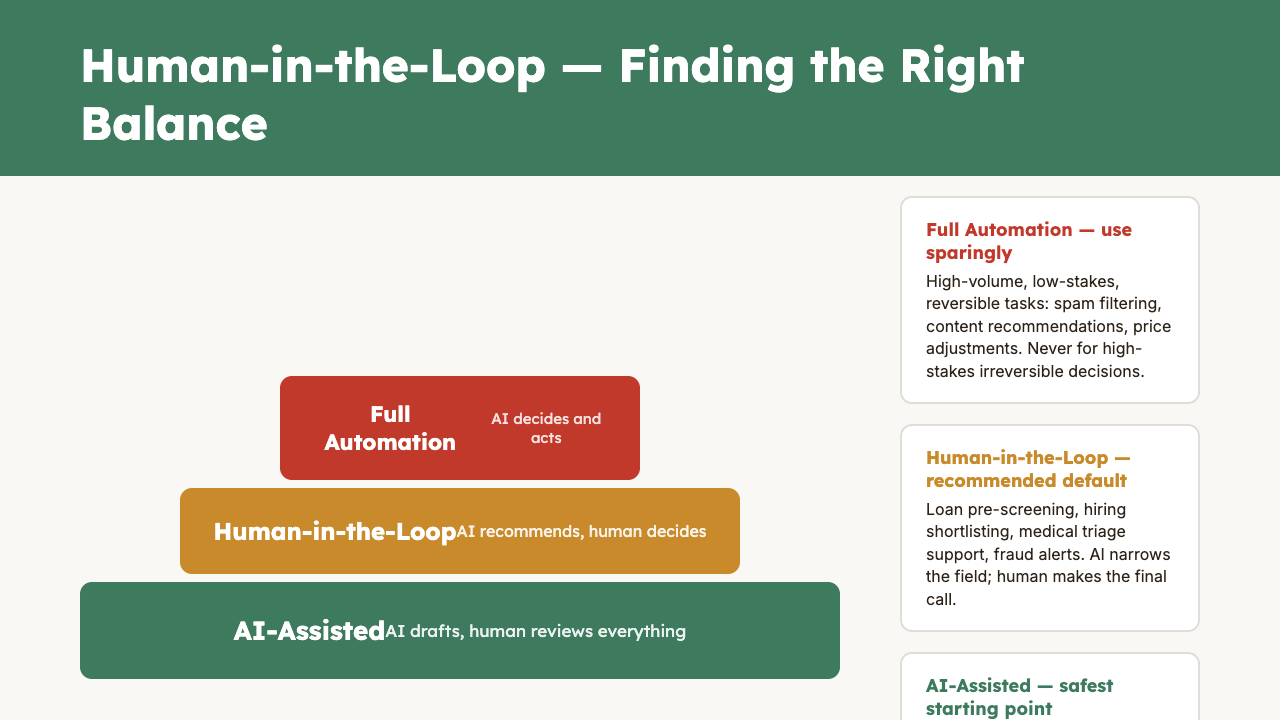

Visual overview