Lesson 5: How to Stay Current

Reading time: 7 minutes

Introduction

Here's the honest truth about AI models: they change constantly. A model that launched three months ago might already have a newer, better version. New competitors appear regularly. Pricing shifts. Features get added, removed, or renamed.

This can feel overwhelming — like you'll never catch up. But here's the good news: you don't need to track everything. You just need a simple system for staying informed enough to make good decisions, without letting AI news consume your life.

This lesson gives you that system.

Why Staying Current Matters (But Not Too Much)

There's a real cost to being out of date. If you're still using a model from a year ago without checking alternatives, you might be getting worse results, paying more than you need to, or missing features that would save you hours.

But there's also a cost to over-following AI news. The space is noisy. Every week brings breathless announcements about the "next big thing." Most of it won't affect your day-to-day use at all.

The sweet spot is checking in regularly — not obsessively — and knowing what actually matters to you.

What to Watch (And What to Ignore)

Worth Paying Attention To

New model releases from the major providers. When OpenAI, Anthropic, Google, or Meta releases a new model, it's worth a quick look. That includes keeping an eye on Llama 4, and on models like DeepSeek that are approaching parity with top-tier commercial offerings. These releases can meaningfully change what's available to you. You don't need to understand every technical detail; just know what's new and whether it affects your use cases.

Pricing changes. AI pricing shifts regularly. A model that was expensive six months ago might now have a cheaper tier. Free tiers expand and contract. If cost matters to you (and it should), keep a loose eye on this.

New features in tools you use. If you use ChatGPT regularly, it's worth knowing when they add new capabilities. Same for Claude, Gemini, or any other tool in your workflow. These features can genuinely improve how you work.

Major shifts in capability. Occasionally, a model will make a genuine leap — handling images when it couldn't before, or becoming dramatically better at reasoning. These shifts are worth knowing about.

Safe to Ignore

Benchmark wars. AI companies love publishing benchmarks showing their model is "best." Most benchmarks measure narrow technical performance that doesn't translate directly to your experience. Don't choose a model based on benchmark scores alone.

Rumours and speculation. "I heard GPT-5 is coming next month and it'll be able to..." Ignore this until there's an actual release you can use.

Every new startup. Dozens of new AI tools launch every week. Most won't last. Wait until something has proven useful to real people before investing your time.

Drama and controversy. AI Twitter (and the broader tech media) loves drama — leadership changes, company politics, philosophical debates. Interesting if you enjoy it, but irrelevant to choosing and using models well.

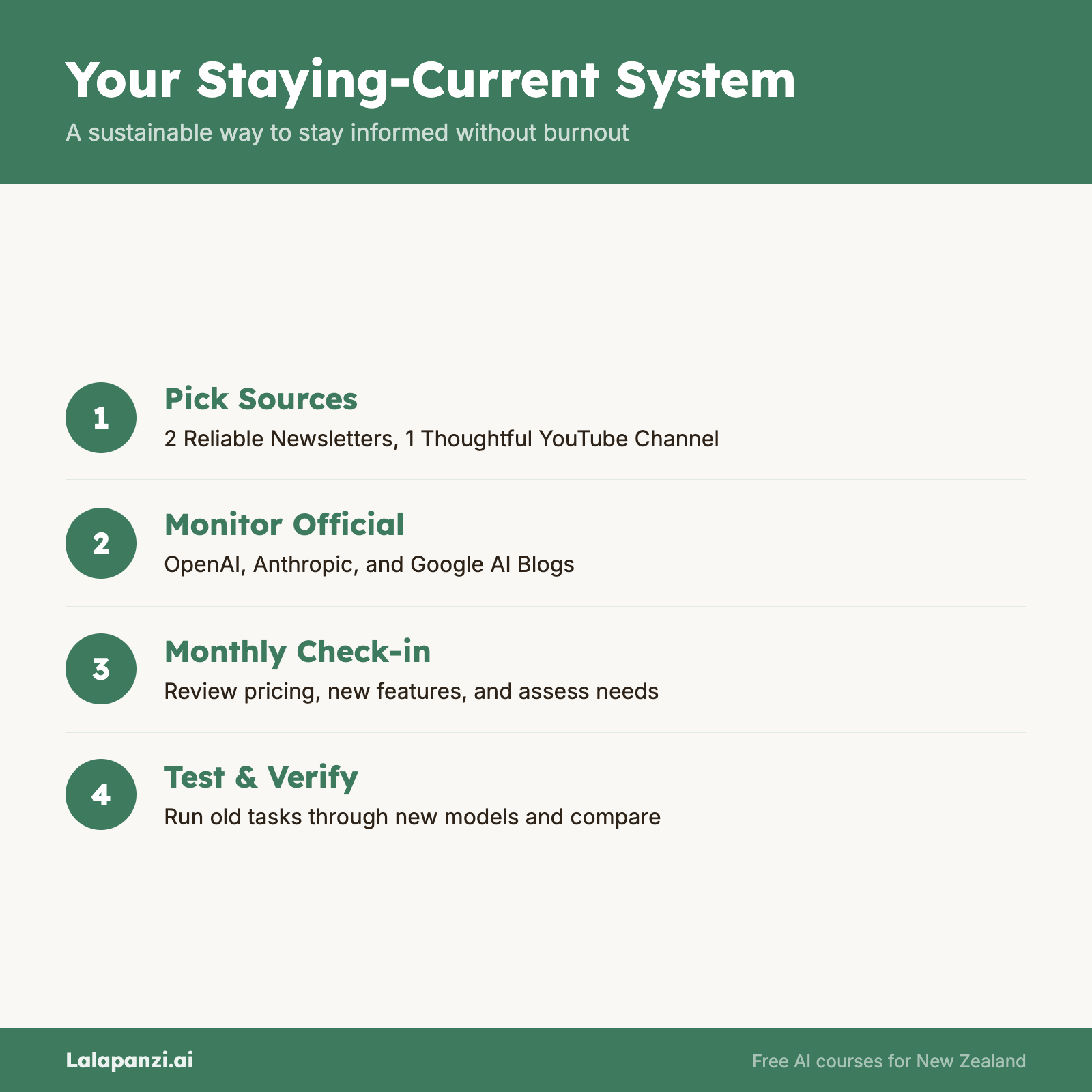

Your Staying-Current System

Here's a practical system that takes about 15–20 minutes per week:

1. Pick Two Reliable Sources

You don't need ten newsletters and five podcasts. Pick two sources that give you a good overview and stick with them.

Good options:

- The Rundown AI — A daily newsletter that summarises AI news in plain language. Quick to scan, covers the important stuff.

- TLDR AI — A concise daily newsletter covering AI research, tools, and industry news. Quick to read and well-organised.

- AI Explained (YouTube) — Thoughtful video breakdowns of major developments, without the hype.

- Ars Technica or The Verge AI coverage — Mainstream tech publications that cover major AI news with some editorial judgement about what actually matters.

Pick two. Skim them weekly. That's enough.

2. Follow the Official Blogs

Bookmark the blogs of the models you actually use:

- OpenAI Blog (openai.com/blog) — ChatGPT and GPT updates

- Anthropic Blog (anthropic.com/blog) — Claude updates

- Google AI Blog (blog.google/technology/ai) — Gemini updates

You don't need to read every post. Just check in when you hear about a new release, or glance through once a month.

3. Set a Monthly "Model Check-In"

Once a month, spend 15 minutes asking yourself:

- Has my main model released an update?

- Has pricing changed for any model I use?

- Is there a new feature I should try?

- Have I heard about a model that might be better for something I do regularly?

If the answer to all four is "no" or "not sure," that's fine. Move on. If something catches your eye, spend another 15 minutes exploring it.
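If you like to see a routine written down as logic, the check-in above can be sketched in a few lines of Python. This is only an illustration, not a required tool: the function names are made up for this example, and it assumes one extra 15-minute block in total (rather than one per item) when anything catches your eye.

```python
# Hypothetical sketch of the monthly check-in; the questions come from the lesson.
CHECK_IN_QUESTIONS = [
    "Has my main model released an update?",
    "Has pricing changed for any model I use?",
    "Is there a new feature I should try?",
    "Have I heard about a model that might be better for something I do regularly?",
]


def items_to_explore(answers):
    """Return the questions answered 'yes'; 'no' and 'not sure' are both skipped."""
    if len(answers) != len(CHECK_IN_QUESTIONS):
        raise ValueError("expected one answer per question")
    return [
        question
        for question, answer in zip(CHECK_IN_QUESTIONS, answers)
        if answer.strip().lower() == "yes"
    ]


def minutes_needed(answers, base=15, extra=15):
    """Base check-in time, plus one extra block if anything caught your eye (assumption)."""
    return base + (extra if items_to_explore(answers) else 0)
```

For example, answering "no", "yes", "not sure", "no" flags only the pricing question and budgets 30 minutes for that month.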

4. Test, Don't Just Read

When you do hear about something new, the best way to evaluate it is to try it yourself. Take a task you've done recently, run it through the new model, and compare the output to what you got before.

Five minutes of testing tells you more than five articles about benchmark scores.
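One way to keep those quick tests honest is to jot down the result each time. The sketch below is a minimal, hypothetical log: it calls no AI service, you paste in or summarise the outputs yourself, and the "better on more tasks than worse" rule is an assumption made for illustration, not a rule from this lesson.

```python
from dataclasses import dataclass


@dataclass
class Comparison:
    """One side-by-side test of a task you have already done."""
    task: str
    current_output: str  # what your usual model produced
    new_output: str      # what the new model produced
    verdict: str         # your own judgement: "better", "same", or "worse"


def worth_a_closer_look(comparisons):
    """True if the new model was judged better on more tasks than it was worse."""
    better = sum(c.verdict == "better" for c in comparisons)
    worse = sum(c.verdict == "worse" for c in comparisons)
    return better > worse
```

A plain notebook or spreadsheet works just as well; the point is to compare on your own tasks and record what you saw.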

Communities Worth Knowing About

If you want to go slightly deeper, communities can be helpful. But be selective — AI communities range from genuinely useful to pure noise.

Reddit's r/artificial and r/ChatGPT — Large communities with a mix of news, tips, and discussion. Sorting by "top this week" filters out most of the noise.

Specific tool communities — Many AI tools have their own Discord servers or forums. These can be goldmines for practical tips from other people using the same tools you are.

Local meetups — If you're in a larger city, there may be AI-focused meetups. These tend to be more practical and grounded than online discussions. In Aotearoa New Zealand, check Meetup.com for AI groups in your area.

A word of caution: online AI communities can be echo chambers. People who spend all day discussing AI tend to overestimate how quickly things change and how urgently you need to keep up. Take the practical tips, leave the anxiety.

What "Staying Current" Actually Looks Like

Let's be realistic about what this means in practice:

Week to week: You skim a newsletter or two. Most weeks, nothing changes for you. That's normal and fine.

Month to month: You do a quick check-in. Maybe one month you discover a new feature that helps you, or you notice a price drop. Most months, you confirm you're on track and move on.

Quarter to quarter: You might switch a model or try a new tool. Or you might not. The goal is informed decisions, not constant change.

Year to year: Looking back, you'll notice the landscape has shifted meaningfully. But because you've been checking in regularly, none of it will feel like a surprise.

The people who struggle either ignore AI completely for a year and then feel hopelessly behind, or try to follow every development daily and burn out. The middle path of regular, brief check-ins is where you want to be.

Key Takeaways

- AI models change frequently, but you don't need to track everything. A simple system beats obsessive following.

- Focus on what affects you: new releases from models you use, pricing changes, and new features. Ignore benchmarks, rumours, and drama.

- Two good sources and a monthly check-in are enough to stay informed without it becoming a second job.

- Test, don't just read. Five minutes trying a new model tells you more than five articles about it.

- Communities can help, but be selective. Take the practical tips, leave the anxiety about keeping up.

Exercise: Set Up Your Staying-Current System

Right now, spend 10 minutes doing the following:

- Choose two sources from the list above (or find your own). Subscribe, bookmark, or follow them.

- Bookmark the official blog for your main AI model.

- Set a recurring monthly reminder (calendar event, phone reminder, whatever works for you) that says: "AI Model Check-In — 15 minutes."

- Write down three tasks you do regularly with AI. These are your personal test tasks: when you hear about a new model, you'll run it against them and compare the results.

That's it. Your system is now in place. It'll take you 15–20 minutes per week at most, and you'll always know enough to make good choices.

Quiz

1. What's the most practical way to evaluate a new AI model you've heard about?

a) Read benchmark comparisons published by the model's creators

b) Wait for expert reviews on YouTube before trying it

c) Run a task you've done before through the new model and compare the output

d) Check how many people are talking about it on social media

Answer: c) Your own experience with your own tasks is the most reliable way to evaluate whether a new model is useful to you. Benchmarks and reviews can be helpful starting points, but hands-on testing is what matters.

2. How often should most people check in on AI model developments?

a) Daily — the space moves too fast to miss anything

b) Weekly skim of one or two sources, plus a monthly deeper check-in

c) Only when something stops working

d) Every six months is plenty

Answer: b) A weekly skim keeps you aware of major developments, and a monthly check-in ensures you're not missing features or savings relevant to your work. Daily tracking leads to burnout; waiting too long leads to falling behind.

3. Which of the following is generally safe to ignore when staying current with AI?

a) New model releases from OpenAI, Anthropic, or Google

b) Pricing changes for models you use

c) Benchmark score comparisons between competing models

d) New features added to tools in your workflow

Answer: c) Benchmark scores measure narrow technical performance and often don't reflect real-world usefulness. New releases, pricing changes, and feature updates all directly affect your experience and are worth tracking.
