A Plain-Language Definition of AI (And What AI Is Not)


Lesson 1.1 — A Plain-Language Definition of AI (And What AI Is Not)

Estimated reading time: 7 minutes

What is artificial intelligence, really?

Let's start with the simplest honest definition we can manage:

Artificial intelligence is software that can perform tasks which normally require human thinking.

That's it. No mysticism, no science fiction. AI is software — code running on computers — that can do things like recognise faces in photos, translate languages, predict what you might type next, or write a paragraph about New Zealand's housing market.

The word "intelligence" is doing a lot of heavy lifting in that definition, and it's worth pausing on. When we say a piece of software is "intelligent," we don't mean it thinks the way you do. We don't mean it has feelings, opinions, or awareness. We mean it can take in information, process it, and produce a useful output — in ways that used to require a human brain.

Your phone's autocomplete is AI. It predicts what word you'll type next based on patterns it's learned from millions of sentences. It doesn't understand your message. It doesn't care about your message. But it does something that looks a lot like understanding — and that's enough to be genuinely useful.
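If you're curious what "predicting the next word from patterns" can look like, here is a deliberately tiny Python sketch. It counts which word follows which in three made-up sentences and suggests the most frequent follower. The sentences and the `suggest_next` helper are invented for illustration; real autocomplete systems are far more sophisticated, and you don't need to follow the code to continue with the lesson.

```python
from collections import Counter, defaultdict

# Made-up training text; a real system would learn from millions of sentences.
sentences = [
    "see you at the beach",
    "meet me at the beach",
    "see you at the office",
]

# For each word, count which words have followed it.
followers = defaultdict(Counter)
for sentence in sentences:
    words = sentence.split()
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1


def suggest_next(word):
    """Return the word most often seen after `word`, or None if unseen."""
    if word not in followers:
        return None
    return followers[word].most_common(1)[0][0]


print(suggest_next("the"))  # beach (seen twice, versus "office" once)
print(suggest_next("at"))   # the
```

Nothing in this code knows what a beach or an office is. It only counts, and counting is enough to produce a plausible suggestion.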

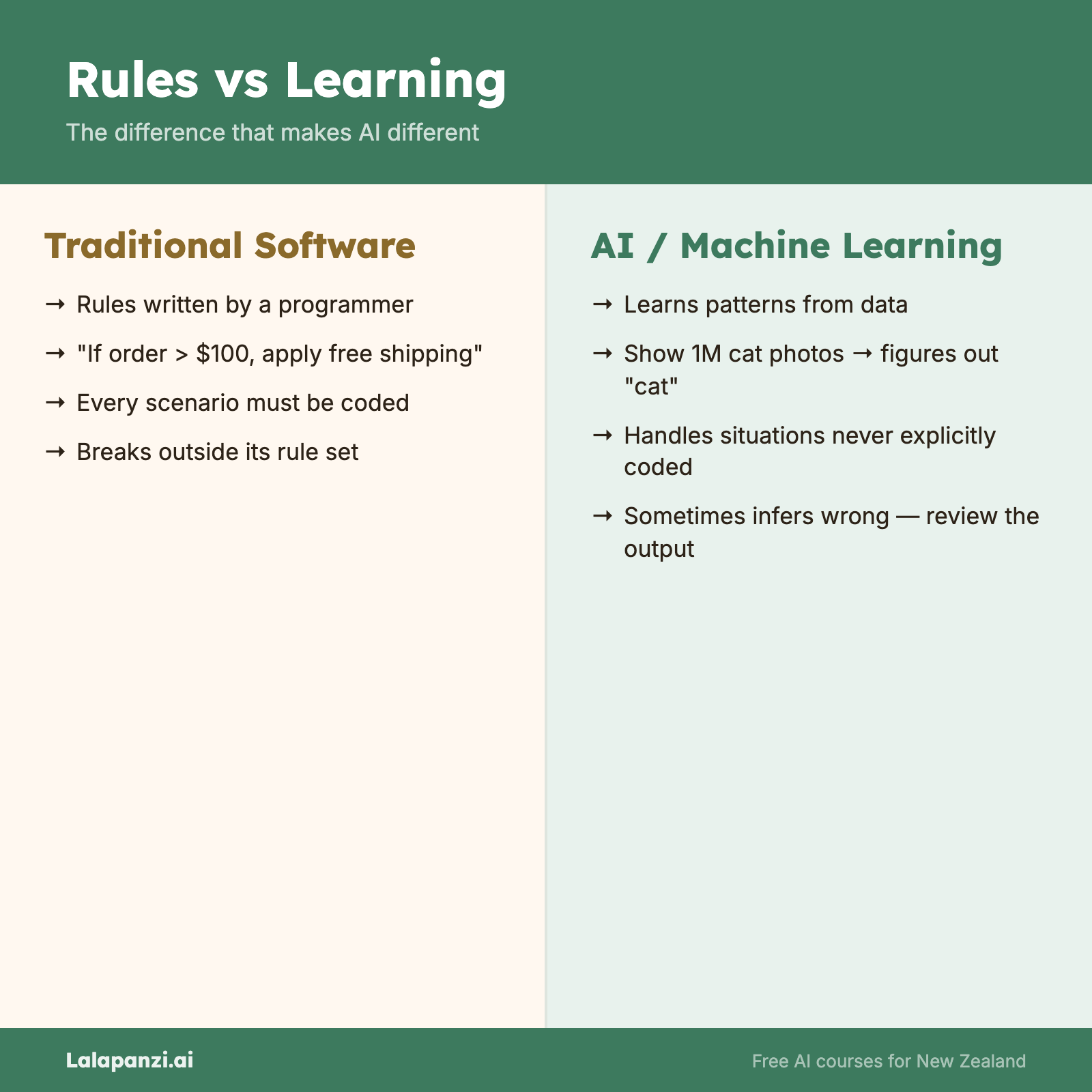

The key distinction: rules vs learning

Traditional software follows rules that a programmer writes out explicitly. "If the customer's order is over $100, apply free shipping." Every scenario has to be anticipated and coded for.
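As a rough sketch, that shipping rule might look like this in Python. The function name and the constant are hypothetical, chosen only to illustrate the idea of a hand-written rule.

```python
FREE_SHIPPING_THRESHOLD = 100  # dollars; chosen by a person, not learned


def qualifies_for_free_shipping(order_total):
    """Apply the hand-written rule: orders over $100 ship free."""
    return order_total > FREE_SHIPPING_THRESHOLD


print(qualifies_for_free_shipping(120))  # True
print(qualifies_for_free_shipping(100))  # False: exactly $100 is not "over" $100
```

Every decision here traces back to a line someone typed. If the business wants a different rule, a programmer has to change the code.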

Most modern AI works differently. Instead of being told every rule, these systems learn patterns from large amounts of data. Show one a million photos labelled "cat" and a million labelled "not cat," and it works out for itself what distinguishes a cat. Nobody programs in "look for pointy ears and whiskers." The system discovers those patterns on its own.

This is what makes AI both powerful and sometimes unpredictable. It wasn't told the rules — it inferred them. And sometimes it infers wrong.
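To make "inferring the rule from data" concrete, here is a toy Python sketch. It is a simplification, not how real spam filters or image recognisers are built: it looks at a handful of made-up labelled examples and works out a cutoff by itself. The example data, the `learn_cutoff` function, and the choice to count exclamation marks are all assumptions made for illustration.

```python
# Made-up labelled examples: (number of exclamation marks, is it spam?)
examples = [
    (0, False), (1, False), (0, False), (2, False),
    (5, True), (7, True), (6, True), (4, True),
]


def learn_cutoff(labelled):
    """Infer a cutoff from the data: halfway between the two group averages."""
    spam = [count for count, is_spam in labelled if is_spam]
    not_spam = [count for count, is_spam in labelled if not is_spam]
    spam_avg = sum(spam) / len(spam)
    not_spam_avg = sum(not_spam) / len(not_spam)
    return (spam_avg + not_spam_avg) / 2


# Nobody typed this number in; it came from the examples.
cutoff = learn_cutoff(examples)


def looks_like_spam(message):
    """Flag a message if it has more exclamation marks than the learned cutoff."""
    return message.count("!") > cutoff


print(cutoff)                                               # 3.125
print(looks_like_spam("WIN NOW!!!!!! Click here!!"))        # True
print(looks_like_spam("See you at 3pm"))                    # False
print(looks_like_spam("We won the final!!!! So proud!!!"))  # True, but wrong
```

The last line is the interesting one. An excited message from a friend gets flagged as spam, because the only pattern the system picked up from its examples was "lots of exclamation marks." It inferred a rule, and the rule is sometimes wrong.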

What AI is not

This matters just as much as the definition. AI is surrounded by misconceptions, and clearing them up now will save you a lot of confusion later.

AI is not a brain. It doesn't think. It doesn't ponder. It processes inputs and produces outputs based on statistical patterns. When ChatGPT writes a thoughtful-sounding paragraph, it's building that paragraph one small piece at a time, each piece chosen because it is statistically likely to follow what came before. That's remarkable, but it's not thinking.

AI is not conscious. No current AI system has awareness, feelings, desires, or experiences. When an AI chatbot says "I think..." or "I feel...," it's using language patterns it learned from human text. There's nobody home.

AI is not magic. Every AI system has specific capabilities and specific limitations. It runs on hardware, consumes electricity, and was built by people making engineering decisions. Understanding this keeps you grounded when the hype gets loud.

AI is not one thing. "AI" is an umbrella term covering dozens of different technologies and approaches. The AI that recommends your next Netflix show is completely different from the AI that helps doctors spot tumours in X-rays. Lumping them together is like saying "vehicles" without distinguishing between a bicycle and a container ship.

AI is not new. The term "artificial intelligence" was coined in 1955, in the proposal for a research workshop held at Dartmouth College in 1956. Spam filters have used AI techniques for over twenty years. What's new is the scale and capability of recent systems, particularly large language models like ChatGPT and Claude. But the field itself has been around for decades.

Where you're already using AI

You almost certainly interact with AI multiple times a day without thinking about it:

- Email spam filters — AI decides which emails go to junk

- Navigation apps — Google Maps and Apple Maps use AI to predict traffic and suggest routes

- Social media feeds — AI decides what posts you see and in what order

- Online banking — AI flags unusual transactions as potential fraud

- Voice assistants — Siri, Alexa, and Google Assistant use AI to understand your speech

- Streaming recommendations — Netflix, Spotify, and YouTube use AI to suggest what you might enjoy

- Phone cameras — AI enhances your photos, blurs backgrounds, and adjusts lighting

None of these feel like "artificial intelligence" because they're woven into products you already use. That's no accident: AI is often at its most useful when it quietly makes things work better.

The current moment

What's changed recently, and why everyone's suddenly talking about AI, is the arrival of large language models (LLMs) that can have conversations, write content, answer questions, and assist with complex tasks in plain language.

Tools like ChatGPT, Claude, and Gemini have made AI accessible to anyone who can type a sentence. You don't need to code. You don't need a technical background. You just need to know how to ask for what you want — and how to evaluate what you get back.

That's what this course is for. We're going to build your understanding from the ground up, practically and honestly. By the end, you'll know what AI can do, what it can't, and how to use it well.

Let's keep going.

Key Takeaways

- AI is software that performs tasks normally requiring human thinking — not a brain, not magic, not conscious

- Most modern AI learns patterns from data rather than following explicitly programmed rules

- You already use AI daily — in your phone, email, maps, banking, and streaming services

- "AI" covers many different technologies — it's an umbrella term, not a single thing

- The recent breakthrough is accessibility — large language models let anyone interact with AI using plain language

Practical Exercise

Identify AI in your day.

Over the next 24 hours, pay attention to the digital tools and services you use. Make a list of at least five that you think use AI in some way. For each one, write down:

- What the tool/service is

- What you think the AI is doing (e.g., "predicting what I want to watch next")

- Whether it's doing something that would otherwise require a human

Don't worry about getting the technical details right — the point is to start noticing where AI already shows up in your life. You might be surprised how long your list gets.

Knowledge Check

1. What is the best plain-language definition of AI?

a) A computer that thinks and feels like a human

b) Software that can perform tasks which normally require human thinking

c) A robot with a brain

d) Any computer program that runs automatically

Answer: b) AI is software that handles tasks which would normally need human cognition — but it doesn't think or feel.

2. How does AI differ from traditional software?

a) AI is always more accurate

b) AI runs on special computers

c) AI learns patterns from data rather than following explicitly written rules

d) AI doesn't need electricity

Answer: c) Traditional software follows hand-coded rules. AI systems learn patterns from data.

3. Which of the following is TRUE about current AI?

a) AI systems are conscious but not as smart as humans

b) AI is a brand-new technology invented in the 2020s

c) AI is an umbrella term covering many different technologies and approaches

d) AI can only be used by people with programming skills

Answer: c) "AI" covers a wide range of technologies. It's been around since the 1950s, it's not conscious, and modern tools like ChatGPT require no coding.
