Understanding vs Pattern Matching

Listen to this lesson

Lesson 2.5 — Understanding vs Pattern Matching

Estimated reading time: 8 minutes

The question at the heart of AI

Does AI understand what it's doing?

This isn't just a philosophical question for academics. It's a practical question that affects how you use AI every day. If AI genuinely understands your request, you can trust it like a knowledgeable colleague. If it's matching patterns without understanding, you need to treat it differently — more carefully, more critically, with different expectations.

The honest answer is nuanced, and getting it right makes you a better AI user.

What pattern matching looks like

Let's start with what we know for certain. Large language models are, at their core, extraordinarily sophisticated pattern-matching systems. During training, they processed billions of examples of human language and encoded the statistical relationships between words, phrases, and ideas.

When you ask an LLM a question, it doesn't "think about" the answer. It generates a response that statistically fits the pattern of "good answer to this type of question," based on everything it learned during training.

Here's a concrete example. Ask a language model: "What is the capital of New Zealand?"

The model has seen this question — or very similar ones — thousands of times in its training data, paired with the answer "Wellington." The statistical association between "capital of New Zealand" and "Wellington" is extremely strong. So it produces "Wellington."

Is that understanding? Or is it the world's most sophisticated pattern-matching, connecting a question pattern to an answer pattern?

Now try something harder: "If New Zealand moved its capital to a city that begins with the same letter as its largest city, what would the new capital be?"

This requires connecting several pieces of information (largest city = Auckland, starts with "A", cities starting with "A" in NZ) and reasoning through them. Modern LLMs can often handle this kind of question. But are they reasoning, or have they seen enough similar puzzles in training data to pattern-match their way to the answer?

The Chinese Room argument

In 1980, philosopher John Searle proposed a thought experiment called the Chinese Room that remains relevant today.

Imagine you're locked in a room. People slide Chinese characters under the door. You don't speak Chinese, but you have an enormous rulebook that tells you: "When you see these characters, respond with those characters." You follow the rules perfectly. From outside, it looks like someone in the room speaks Chinese fluently.

But do you understand Chinese? No. You're manipulating symbols according to rules without any comprehension of what they mean.

Searle argued that this is what computers do — manipulate symbols without understanding. Critics have offered various counter-arguments (perhaps the system as a whole understands, even if the person inside doesn't). The debate continues.

The analogy to modern LLMs isn't perfect — LLMs are more flexible than a fixed rulebook — but the core question is the same: can a system that processes statistical patterns in text be said to understand the text?

Where pattern matching breaks down

The most revealing way to assess whether AI understands or pattern-matches is to look at where it fails. A system that genuinely understands would fail differently from one that's matching patterns.

Spatial reasoning: Ask an LLM to work through a physical puzzle — like arranging objects in a room — and it often struggles. Humans can visualise this effortlessly because we understand physical space. LLMs have only seen text descriptions of spatial arrangements, so they're working with patterns in language, not spatial understanding.

Novel situations: Present a problem that's genuinely unlike anything in the training data, and performance drops. A system that understands underlying principles should be able to apply them to new situations. A pattern-matching system struggles when the patterns aren't there.

Logical consistency over long reasoning chains: LLMs can appear to reason well over short chains but sometimes lose logical consistency over longer ones — making a claim in paragraph two that contradicts paragraph eight. A system that understood its own argument wouldn't do this.

Common sense about the physical world: "If I put a cup of water upside down on a table, what happens?" Obvious to any human. LLMs sometimes get this right, sometimes don't — depending on how closely the question matches training patterns.

These failure modes suggest pattern matching rather than deep understanding. But it's not entirely clear-cut, which brings us to...

The grey area

Here's where intellectual honesty requires us to acknowledge uncertainty.

Modern LLMs do things that are hard to explain with simple pattern matching:

- Transfer across domains — applying concepts from one field to another in ways that seem creative

- Analogy and metaphor — generating novel comparisons that are genuinely insightful

- Emergent capabilities — doing things they weren't explicitly trained to do, which appear at scale

- Contextual adaptation — adjusting their communication style based on subtle cues in ways that feel perceptive

Are these evidence of a form of understanding? Or are they just what very good pattern matching looks like when you have enough parameters and enough training data?

The honest answer: we don't know. This is one of the most active debates in AI research and philosophy of mind. There's no consensus.

Why it matters practically

You don't need to resolve the philosophical debate to use AI well. What you need is a practical framework:

Treat AI as if it doesn't understand — even if it might

This is the safer approach, and it costs you nothing. If you assume the model is pattern matching:

You'll provide more context. Instead of assuming the AI grasps your situation, you'll spell it out. This consistently produces better results.

You'll verify outputs. Instead of trusting that the AI understood and therefore got it right, you'll check. This catches errors that would otherwise slip through.

You'll use it for its strengths. Pattern matching is phenomenal for: generating drafts, reformatting text, summarising documents, brainstorming options, translating languages, explaining concepts. These tasks don't require understanding — they require excellent pattern completion.

You'll avoid relying on it for its weaknesses. Tasks requiring genuine reasoning, causal understanding, or real-world knowledge verification are where pattern matching (without understanding) falls short. You'll keep humans in the loop for these.

The practical difference in action

Scenario: You ask an AI to review a contract for legal issues.

If you assume it understands: You might trust its analysis and act on it directly. Risky — because it may have matched the pattern of "contract review language" without truly understanding the legal implications.

If you assume it pattern-matches: You treat its output as a first pass — a useful draft that highlights things worth looking at. You then have a qualified human (a lawyer) review the flagged items. Much safer, and still saves time.

The second approach loses almost nothing in efficiency but gains enormously in reliability. That's the sweet spot.

The evolving landscape

It's worth noting that AI capabilities are advancing rapidly. What can't be done today may be possible next year. The line between pattern matching and understanding — if there is a clear line — may shift.

This course teaches you principles that hold regardless of where that line sits. Whether a future AI system truly understands or just pattern-matches so well that the distinction becomes meaningless, the practical advice remains the same: provide clear context, verify important outputs, and keep human judgement in the loop.

The technology will change. The habit of thinking critically about AI outputs will serve you forever.

Key Takeaways

- LLMs are sophisticated pattern-matching systems — they generate statistically probable text based on training data patterns

- Whether this constitutes "understanding" is genuinely debated — and there's no scientific consensus yet

- Pattern matching fails in predictable ways — spatial reasoning, novel situations, long logical chains, and physical common sense

- The safe practical approach: assume pattern matching — provide context, verify outputs, and keep humans in the loop

- This framework works regardless of future AI advances — critical thinking about AI outputs is a permanent skill

Practical Exercise

Probe the boundary between understanding and pattern matching.

Try these experiments with an AI chatbot and observe what happens:

-

The reversal test: Ask the AI to list the months of the year. Then ask it to list them in reverse order. Then ask it to list every third month, starting from February. Note where it succeeds and where it stumbles.

-

The novel analogy: Ask the AI to explain how a central bank's interest rate decisions are like adjusting the thermostat in a house. Then ask it for a completely novel analogy for the same concept that it has probably never encountered: "Explain interest rate decisions as if they were a shepherd managing a flock on a hillside." Compare the quality. Can it generate genuinely novel analogies, or does it fall back on familiar patterns?

-

The consistency test: Ask the AI to create a fictional character with 10 specific traits. Then ask it a series of questions about that character's likely behaviour. Does it remain consistent with the traits it established? Where does it contradict itself?

-

The common sense test: Ask a series of physical-world questions: "Can you fit a piano through a standard doorway?" "If I drop a feather and a bowling ball from a building, which lands first?" "If I freeze a glass of water, will it still fit in the same glass?"

Write a short reflection: Based on your experiments, does the AI seem to understand, or does it seem to pattern-match? Where did you see the clearest evidence either way?

Knowledge Check

1. What is the Chinese Room thought experiment about?

a) A computer that can only operate in China

b) Whether a system can manipulate symbols correctly without actually understanding them

c) A room designed to test AI hardware

d) Teaching AI to read Chinese characters

Answer: b) The Chinese Room argues that a system can produce correct outputs by following rules — without any understanding of what the symbols mean. It raises the question of whether AI processes language without truly understanding it.

2. Why does treating AI as a pattern-matching system lead to better practical outcomes?

a) Because it means you never use AI

b) Because it leads you to provide more context, verify outputs, and keep human judgement in the loop

c) Because pattern matching is always wrong

d) Because it makes AI respond faster

Answer: b) Assuming pattern matching encourages you to be more explicit, verify results, and maintain human oversight — all of which improve the quality and safety of AI-assisted work.

3. In which type of task are LLMs MOST reliable?

a) Tasks requiring genuine causal reasoning about novel situations

b) Tasks requiring spatial reasoning and physical world understanding

c) Tasks like drafting text, summarising, and reformatting — where excellent pattern completion is what's needed

d) Tasks requiring long chains of logically consistent reasoning

Answer: c) LLMs excel at language tasks like drafting, summarising, translating, and reformatting — where sophisticated pattern completion produces excellent results without requiring deep understanding.

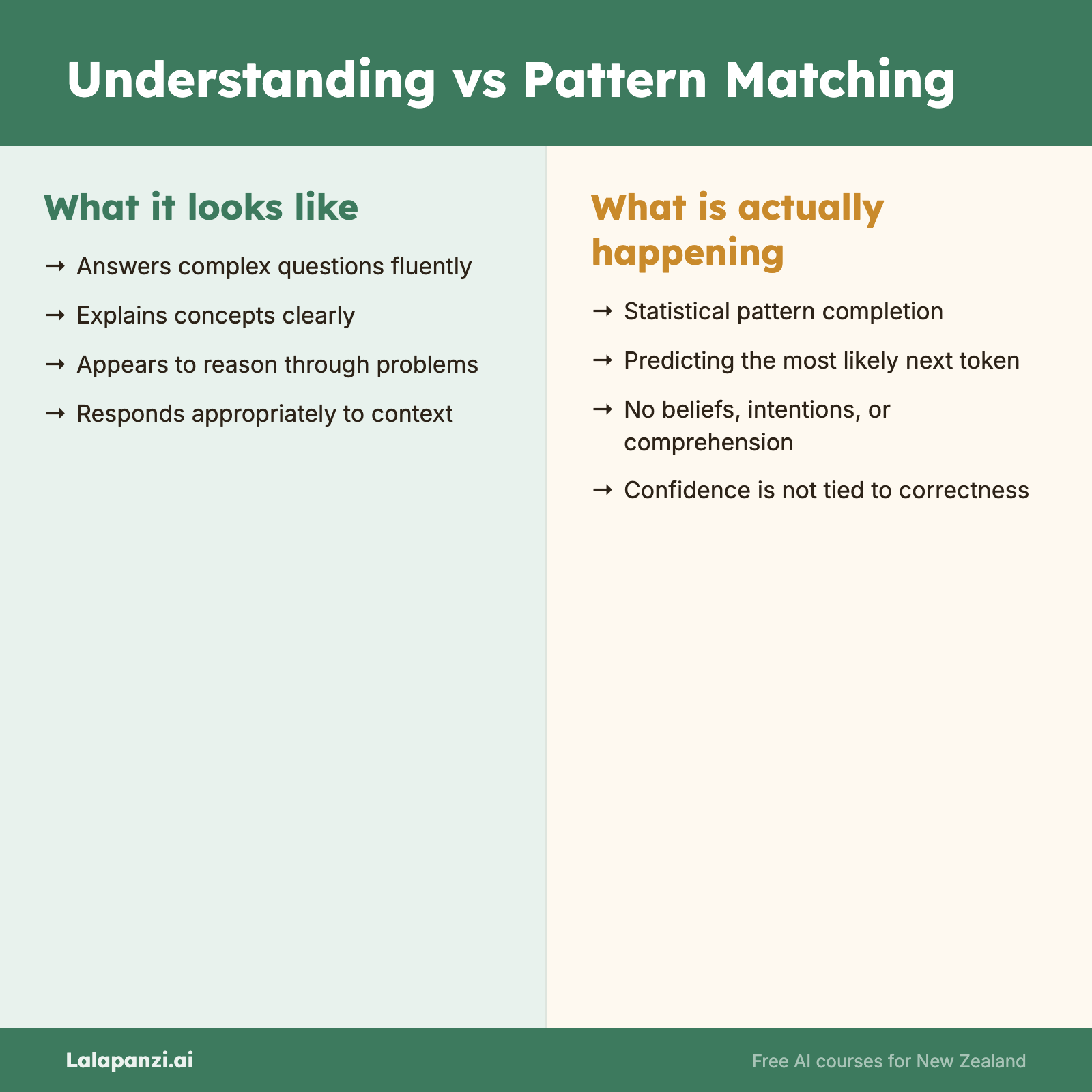

Visual overview