Tokens, Context Windows, and Parameters in Practice


Lesson 2.3 — Tokens, Context Windows, and Parameters in Practice

Estimated reading time: 8 minutes

Three numbers that shape your AI experience

When people compare AI models, three terms come up constantly: tokens, context windows, and parameters. They sound technical, but they directly affect what you can do with AI tools — how long your conversations can be, how capable the model is, and how much things cost.

Let's make these concrete.

Tokens: The units of AI language

We touched on tokens in Lesson 2.1. Now let's go deeper, because tokens affect almost everything about how you use AI.

A token is a small piece of text — roughly three-quarters of a word on average in English.
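To make the rule of thumb concrete, here is a minimal Python sketch that estimates a token count from a word count, assuming exactly 0.75 words per token. The function name `estimate_tokens` is hypothetical, and a real tokeniser will give a different count for any particular text.

```python
def estimate_tokens(text: str, words_per_token: float = 0.75) -> int:
    """Rough token estimate for English text (hypothetical helper).

    Assumes each token is about 0.75 words, so tokens = words / 0.75.
    """
    word_count = len(text.split())
    return round(word_count / words_per_token)

print(estimate_tokens("word " * 150))                                  # 200
print(estimate_tokens("The quick brown fox jumps over the lazy dog"))  # 12
```

The 150-word estimate matches the email row in the table below, but the pangram comes out at 12 rather than 9: short, common words are usually one token each, so the three-quarters rule is an average over typical text, not an exact conversion.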

Here are some examples:

| Text | Approximate tokens |
|---|---|
| "Hello" | 1 token |
| "Good morning" | 2 tokens |
| "Artificial intelligence" | 2-3 tokens |
| "The quick brown fox jumps over the lazy dog" | 9 tokens |
| A typical email (150 words) | ~200 tokens |
| This entire lesson | ~2,500 tokens |
| A 300-page novel | ~100,000 tokens |

The model doesn't process whole words; it works with these smaller chunks. Common words like "the" are single tokens. Less common words get broken into pieces: "tokenisation" might become "token" + "isation". Numbers and punctuation count as tokens too, and a space is usually folded into the token of the word that follows it.
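The idea of splitting rarer words into pieces can be illustrated with a toy tokeniser. This is a simplified stand-in, not how production tokenisers work: it greedily matches the longest piece from a tiny, hypothetical vocabulary (`VOCAB`), whereas real tokenisers such as byte-pair encoding learn vocabularies of tens of thousands of pieces from data.

```python
# Tiny hypothetical vocabulary, chosen only for this illustration.
VOCAB = {"the", "token", "isation", "ise", "s", " "}

def toy_tokenise(text: str) -> list[str]:
    """Greedy longest-match tokeniser (a toy, not a real model's tokeniser)."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest vocabulary entry that matches at position i.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in VOCAB:
                tokens.append(piece)
                i = end
                break
        else:
            # No vocabulary entry matches: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens

print(toy_tokenise("tokenisation"))  # ['token', 'isation']
print(toy_tokenise("the tokens"))    # ['the', ' ', 'token', 's']
```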

Why tokens matter to you:

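- Pricing: AI APIs charge per token, for both your input (the prompt) and the output (the response), with output tokens usually priced higher. Longer conversations cost more, because the earlier conversation is typically sent to the model again as input with each new message.

- Speed: More tokens mean more processing time. Long inputs take longer to read in, and responses are generated one token at a time, so a short question with a short answer comes back faster than a long document.

- Limits: There's a maximum number of tokens a model can handle at once, which brings us to...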
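Why do longer conversations cost more? Here is a minimal sketch, assuming a stateless chat API that receives the entire conversation as input on every turn (no caching) and treating each turn as a fixed-size block of tokens. The function name and numbers are illustrative.

```python
def cumulative_input_tokens(turn_sizes: list[int]) -> int:
    """Total input tokens billed across a conversation (hypothetical helper).

    turn_sizes[k] is the number of tokens turn k adds to the history.
    Assumes every request re-sends the whole history so far.
    """
    total = 0
    history = 0
    for size in turn_sizes:
        history += size   # the conversation grows by this turn
        total += history  # and the whole history is sent again
    return total

print(cumulative_input_tokens([100] * 10))  # 5500
```

Ten turns of 100 tokens each bill 5,500 input tokens rather than 1,000, because the running total grows roughly with the square of the number of turns.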

Context windows: How much the AI can "see"

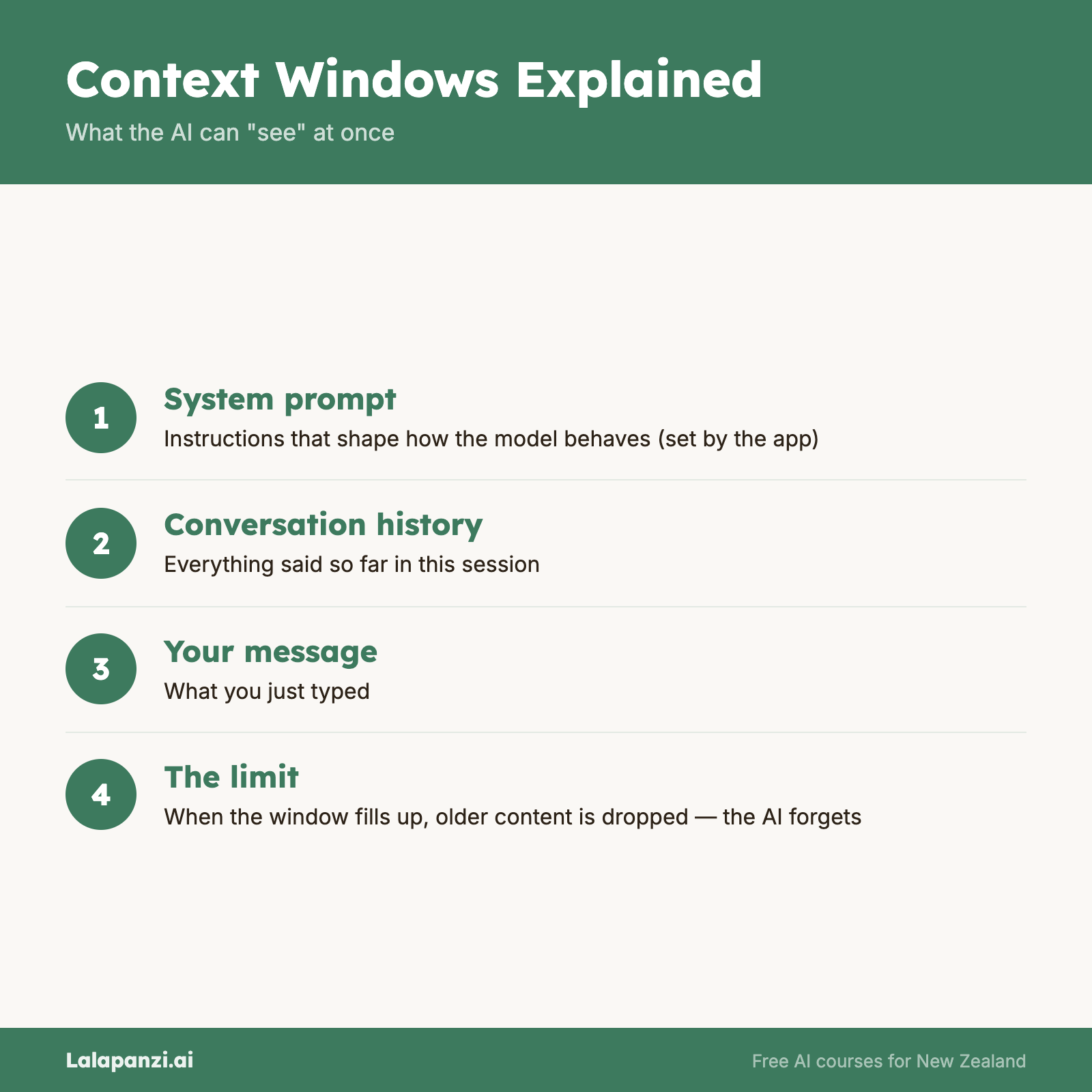

The context window is the total amount of text — measured in tokens — that the model can process at once. This includes your message, any conversation history, system instructions, AND the model's response.

Think of it like a desk. The context window is the size of the desk. Everything the AI needs to work with — your question, the background information, the conversation so far, and the response it's writing — all needs to fit on that desk. When the desk is full, something has to fall off.
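The desk analogy amounts to a simple budget check: every part of the request, plus room reserved for the response, must add up to no more than the window. This sketch assumes you already know (or have estimated) the token count of each part; the function name and example numbers are hypothetical.

```python
def fits_in_context(system: int, history: int, message: int,
                    max_response: int, window: int) -> bool:
    """True if all parts, plus space reserved for the reply, fit the window."""
    used = system + history + message + max_response
    return used <= window

# 500 + 3,000 + 200 + 1,000 = 4,700 tokens needed
print(fits_in_context(500, 3_000, 200, 1_000, window=4_096))    # False
print(fits_in_context(500, 3_000, 200, 1_000, window=200_000))  # True
```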

Context window sizes have grown dramatically:

| Model | Context window (approximate) |
|---|---|
| Original GPT-3 (2020) | ~2,000 tokens (~1,500 words) |
| GPT-4 (2023) | 8,000–128,000 tokens |
| Claude Sonnet 4.6 (2025) | 200,000 tokens (~150,000 words) |
| Some 2025-2026 models | 1,000,000+ tokens |

A 200,000-token context window means you can paste in an entire novel and ask questions about it. A window of a few thousand tokens meant you could barely fit a long email thread.

Why context windows matter to you:

- Long documents — If you want to analyse a report, contract, or research paper, it needs to fit within the context window

- Conversation memory — The AI remembers your conversation only as far as the context window allows. In a very long conversation, earlier messages may drop off

- Complex tasks — Detailed instructions, examples, and background information all consume context. More context means you can give the model more to work with

A common frustration explained: Have you ever had a conversation with an AI where it seemed to "forget" something you told it earlier? That's often because the earlier part of the conversation has fallen outside the context window. The model isn't being careless — it literally can't see that information anymore.
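One simple way a chat tool might keep a conversation inside its window is to drop the oldest messages first. The sketch below assumes that policy purely for illustration (real tools may handle overflow differently), and the token counts attached to each message are made up.

```python
def trim_history(messages: list[tuple[str, int]],
                 budget: int) -> list[tuple[str, int]]:
    """Keep the newest messages that fit in `budget` tokens.

    messages: (text, token_count) pairs, oldest first (hypothetical format).
    """
    kept = []
    used = 0
    for text, tokens in reversed(messages):  # walk from newest to oldest
        if used + tokens > budget:
            break                            # this and all older messages are dropped
        kept.append((text, tokens))
        used += tokens
    kept.reverse()                           # restore oldest-first order
    return kept

chat = [("My dog is called Rua", 8), ("Plan a trip", 4), ("Make it cheaper", 5)]
print([text for text, _ in trim_history(chat, budget=10)])
# ['Plan a trip', 'Make it cheaper']
```

With a 10-token budget, the first message (and the dog's name with it) falls off the desk, which is exactly the kind of "forgetting" described above.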

Parameters: The model's capability

Parameters are the numerical values inside a model (often called weights) that were adjusted during training. More parameters generally means a more capable model, one that can represent more complex patterns and produce more nuanced responses.

Think of parameters as the model's capacity for learning: more parameters give the model more "room" to store the subtle patterns it picked up from its training data.
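To see where such large numbers come from, consider one fully connected layer, a common building block in which much of a language model's parameter count sits. Each input connects to each output through one weight, plus one bias per output. The layer size of 4,096 below is illustrative, not taken from any specific model.

```python
def dense_layer_params(n_in: int, n_out: int) -> int:
    """Parameters in a fully connected layer: weights plus biases."""
    weights = n_in * n_out  # one weight per input-output pair
    biases = n_out          # one bias per output
    return weights + biases

print(dense_layer_params(4_096, 4_096))  # 16781312
```

A single 4,096-by-4,096 layer already holds about 16.8 million parameters; stacking many such layers is how models reach billions.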

Some rough parameter counts:

| Model | Approximate parameters |
|---|---|
| GPT-2 (2019) | 1.5 billion |
| GPT-3 (2020) | 175 billion |
| GPT-4 (2023) | Not disclosed (unofficial estimates exceed 1 trillion) |
| Llama 3 and 3.1 (2024) | 8–405 billion (different versions) |

More parameters doesn't always mean better. A well-trained smaller model can outperform a poorly trained larger one on specific tasks. And larger models are more expensive to run, which means higher costs for users.
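One reason larger models cost more to run is memory: every parameter has to be stored somewhere. A rough sketch, assuming 2 bytes per parameter (16-bit precision) and counting the weights only; real deployments also need memory for the context and intermediate results, and may use other precisions.

```python
def weight_memory_gb(parameters: float, bytes_per_param: int = 2) -> float:
    """Gigabytes needed just to hold the weights (assumes 16-bit by default)."""
    return parameters * bytes_per_param / 1e9

for name, params in [("GPT-2", 1.5e9), ("GPT-3", 175e9), ("Llama 3.1 405B", 405e9)]:
    print(f"{name}: ~{weight_memory_gb(params):,.0f} GB")
# GPT-2: ~3 GB
# GPT-3: ~350 GB
# Llama 3.1 405B: ~810 GB
```

A few gigabytes fits on a laptop; hundreds of gigabytes needs several data-centre GPUs working together, which is where the higher running cost comes from.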

Why parameters matter to you:

- Model selection: When choosing between models (e.g., GPT-4 vs a lighter model), parameter count gives you a rough sense of capability where it's published, and bigger models tend to handle complex, nuanced tasks better. Many vendors don't disclose parameter counts, so in practice you often compare a vendor's larger and lighter model options instead.

- Cost trade-offs: More parameters = more computing power = higher cost. For simple tasks, a smaller model might be perfectly adequate and much cheaper.

- Speed trade-offs: Larger models can be slower to respond. If you need quick answers to simple questions, a lighter model might be a better choice.

Putting it all together: A practical example

Let's say you want to use an AI to help you review a 50-page report and write a summary.

Tokens: A dense 50-page report might be about 50,000 tokens. Your instructions add another few hundred, and the summary might be 1,000 tokens. Total: roughly 51,000 tokens.

Context window: You need a model with a context window of at least 51,000 tokens. An older model with a 4,000-token window simply can't do this. A modern model with 200,000 tokens can handle it easily.

Parameters: A larger model will likely produce a more nuanced, accurate summary. A smaller model might miss subtleties or oversimplify complex sections.

Cost: At typical API pricing, processing about 50,000 input tokens and generating 1,000 output tokens might cost between $0.05 and $1.00 NZD, depending on the model. With a consumer chatbot (ChatGPT Plus, Claude Pro), it's covered by your subscription, within the plan's usage limits.
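The cost estimate is simple arithmetic: tokens multiplied by price, summed over input and output. In this sketch the per-million-token prices are hypothetical placeholders, not any provider's actual rates, so check your provider's current price list. It uses roughly 50,000 input tokens (report plus instructions) and 1,000 output tokens.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Cost of one request, given prices per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000

# Hypothetical NZD prices per million tokens: a cheaper and a pricier model.
print(round(request_cost(50_000, 1_000, 1.00, 4.00), 4))    # 0.054
print(round(request_cost(50_000, 1_000, 15.00, 60.00), 4))  # 0.81
```

Because the report is input, input pricing dominates here; for tasks that generate long outputs, the output price matters more.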

Practical tips

Managing tokens:

- Be concise in your prompts — unnecessary words cost tokens

- For long documents, consider breaking them into sections rather than processing all at once

- Ask for shorter responses when you don't need detail
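Breaking a long document into sections can be automated. Here is a minimal sketch, assuming the rough three-quarters-of-a-word-per-token estimate (a real tokeniser would give exact counts) and splitting only at paragraph boundaries; `chunk_paragraphs` is a hypothetical helper.

```python
def chunk_paragraphs(paragraphs: list[str], max_tokens: int) -> list[list[str]]:
    """Group paragraphs into chunks of at most ~max_tokens estimated tokens."""
    def est(text: str) -> int:
        # Assumed rule of thumb: about 0.75 words per token.
        return round(len(text.split()) / 0.75)

    chunks: list[list[str]] = []
    current: list[str] = []
    used = 0
    for para in paragraphs:
        cost = est(para)
        if current and used + cost > max_tokens:
            chunks.append(current)  # close the current chunk
            current, used = [], 0
        # An oversized paragraph still gets its own chunk (and may exceed the budget).
        current.append(para)
        used += cost
    if current:
        chunks.append(current)
    return chunks

doc = ["word " * 300] * 5  # five 300-word paragraphs, ~400 tokens each
print([len(c) for c in chunk_paragraphs(doc, max_tokens=1_000)])  # [2, 2, 1]
```

Each chunk can then be processed in its own request.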

Working with context windows:

- Start new conversations for new topics — don't let unrelated context clutter the window

- If the AI seems to have forgotten something, it may have fallen out of context — re-state the key information

- For very long documents, some tools offer document upload features that handle context management for you

Choosing models:

- Use larger, more capable models for complex analysis, nuanced writing, and difficult reasoning

- Use smaller, faster models for simple tasks: quick questions, reformatting, basic translation

- Don't pay for more capability than you need
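These rules of thumb can be combined into a simple selection rule: pick the cheapest model whose context window fits the job and whose capability tier is high enough. Everything in the catalogue below (names, windows, tiers, prices) is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Model:
    name: str            # hypothetical model name
    context_window: int  # tokens
    tier: int            # rough capability tier: 1 = light, 3 = most capable
    price_per_m: float   # hypothetical blended price per million tokens

CATALOG = [
    Model("small-fast", 128_000, 1, 0.50),
    Model("mid", 200_000, 2, 5.00),
    Model("large", 200_000, 3, 25.00),
]

def pick_model(tokens_needed: int, min_tier: int) -> Optional[Model]:
    """Cheapest model that fits the task's token count and capability needs."""
    candidates = [m for m in CATALOG
                  if m.context_window >= tokens_needed and m.tier >= min_tier]
    return min(candidates, key=lambda m: m.price_per_m, default=None)

print(pick_model(2_000, min_tier=1).name)   # small-fast (e.g. reformatting)
print(pick_model(51_000, min_tier=3).name)  # large (e.g. nuanced analysis)
```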

Key Takeaways

- Tokens are the units AI works in — roughly ¾ of a word each — and affect pricing, speed, and limits

- The context window is the total text the model can handle at once — your input, conversation history, and the response all share this space

- Parameters represent the model's capacity — more parameters generally means more capable but also more expensive

- These three numbers directly shape your practical experience — from what tasks are possible to what they cost

- Match the model to the task — use powerful models for complex work, lighter ones for simple tasks

Practical Exercise

Explore tokens, context, and model differences.

- Counting tokens: Go to a free tokeniser tool (search online for "OpenAI tokenizer"). Paste in a paragraph from a recent email or document you've written. How many tokens is it? Now try a translation of the same text into te reo Māori or another non-English language. Does the token count change? Non-English text often uses more tokens per word.

- Testing context limits: Start a conversation with an AI chatbot. Share a list of 10 specific facts about yourself (make them up if you prefer: favourite colour, pet's name, etc.). Continue the conversation for 20-30 messages on various topics, then ask the AI to recall all 10 facts. How many does it remember? With a large modern context window it may well recall all of them; either way, the result shows how context windows behave in practice.

- Comparing models: If you have access to more than one AI tool (even free tiers), ask two of them the same complex question, something that requires nuance, like "What are the arguments for and against a four-day work week in New Zealand?" Compare the depth and quality of the answers. This gives you a sense of how model capability varies.

Knowledge Check

1. What is a token in the context of AI?

a) A payment method for AI services

b) A small piece of text, roughly three-quarters of a word, that the model processes

c) A physical chip inside the AI computer

d) A security credential for logging in

Answer: b) A token is a fragment of text — roughly ¾ of a word on average — that serves as the basic unit the model processes.

2. What happens when a conversation exceeds the model's context window?

a) The model crashes

b) The model starts charging double

c) Earlier parts of the conversation may be dropped, and the model can no longer "see" them

d) The model automatically summarises the conversation

Answer: c) When the conversation exceeds the context window, earlier messages may fall outside what the model can process, effectively being "forgotten."

3. Why might you choose a smaller AI model for a simple task?

a) Smaller models are always more accurate

b) Smaller models are faster and cheaper, and may be perfectly adequate for simple tasks

c) Smaller models have larger context windows

d) Smaller models are newer and therefore better

Answer: b) Smaller models use fewer resources, making them faster and cheaper. For straightforward tasks, they can perform just as well as larger models.
