How Large Language Models Process and Generate Text

Listen to this lesson

Lesson 2.1 — How Large Language Models Process and Generate Text

Estimated reading time: 8 minutes

What happens when you type a message to ChatGPT?

You open a chatbot, type "Write me a short email declining a meeting invitation," and within seconds you get a polished, professional email. It feels almost like texting a very capable colleague.

But what actually happened between your message and that response? Understanding this — even at a high level — changes how you use these tools. It helps you write better prompts, spot weaknesses, and set realistic expectations.

No maths required. We promise.

Step 1: Your text becomes numbers

Computers don't read words. They process numbers. So the first thing that happens when you send a message is tokenisation — your text gets broken into smaller pieces called tokens, and each token is converted into a number.

A token isn't always a whole word. Common words like "the" or "and" are usually one token. Longer or less common words get split into pieces. The word "unbelievable" might become three tokens: "un" + "believ" + "able". Numbers, punctuation, and spaces are tokens too.

Why does this matter? Because the model doesn't see your message as words with meaning. It sees a sequence of numbers representing text fragments. Everything that follows is mathematical operations on those numbers.

We'll explore tokens in more detail in Lesson 2.3. For now, just know: your words become numbers before anything else happens.

Step 2: The model looks for patterns

Once your message is tokenised, it passes through the model's neural network — billions of parameters that were set during training. These parameters encode patterns the model learned from its training data (essentially, a vast amount of text from the internet, books, articles, and other sources).

The model isn't searching a database for your question and retrieving a stored answer. There's no filing cabinet inside ChatGPT with pre-written responses. Instead, it's doing something more like this:

Given this input, and given everything I've learned about how language works, what text is most likely to come next?

It's probability and pattern matching on an enormous scale. The model has seen so many examples of professional emails, meeting declines, and polite language during training that it can construct a plausible response that fits the pattern of "polite meeting decline email."

Step 3: Text is generated one token at a time

Here's something that surprises most people: the model generates its response one token at a time, from left to right.

It doesn't plan the entire email and then write it out. It produces the first token, then asks "given everything so far, what's the most likely next token?" It produces that token, and asks again. And again. And again. Hundreds or thousands of times until the response is complete.

This is why you sometimes see chatbot responses appearing word by word on screen — that's not a visual effect. It's the model literally generating each piece in sequence.

Think of it like autocomplete on your phone, but far more sophisticated. Your phone predicts the next word based on the previous few words. An LLM predicts the next token based on your entire conversation, plus the response it has generated so far, informed by patterns from its training data.

Step 4: Randomness keeps things interesting

If the model always picked the single most likely next token, its responses would be predictable and repetitive. You'd get the same response to the same prompt every time.

To prevent this, there's a controlled amount of randomness in the selection process. The model identifies the most probable next tokens and then chooses from among them with a degree of variability. This is controlled by a setting called temperature:

- Low temperature = more predictable, more focused responses (good for factual tasks)

- High temperature = more creative, more varied responses (good for brainstorming or creative writing)

This is why you can send the same message to ChatGPT twice and get different responses each time. The underlying probabilities are the same, but the random element produces different paths through the possibilities.

Step 5: The response gets assembled

As tokens are generated one by one, they're converted back from numbers into readable text and streamed to you. The model also knows when to stop — it's learned the pattern of "this is where a response should end" — though you can also interrupt it or set length limits.

The entire process, from your input to the complete response, typically takes just seconds. This is despite the fact that the model may have processed billions of calculations. Modern hardware — specifically GPUs (graphics processing units) and TPUs (tensor processing units) — makes this speed possible.

What's NOT happening

Understanding what the model isn't doing is just as important:

It's not searching the internet (unless specifically given that tool). The model's knowledge comes from its training data, not from live web access. When it gives you information, it's drawing from patterns learned during training, not looking things up.

It's not retrieving stored answers. There's no database of questions and answers inside the model. Every response is generated fresh, based on statistical patterns.

It's not understanding your meaning. It's processing the statistical relationships between tokens. When it "understands" your request for a professional email, it's really recognising patterns that match "professional email" in its training data and generating text that fits those patterns.

It's not reasoning from first principles. When the model appears to reason through a problem step by step, it's generating text that looks like step-by-step reasoning because it's seen many examples of that pattern in training data. Whether there's genuine reasoning happening underneath is one of the biggest open debates in AI — but the safe practical assumption is: there isn't.

Why this matters for using AI well

Understanding this process gives you practical advantages:

You'll write better prompts. Knowing that the model predicts the most likely continuation helps you understand why providing clear context and specific instructions produces better results. You're essentially steering the probability distribution toward the kind of output you want.

You'll know when to trust the output. If the model is generating text based on patterns, it will be most reliable when dealing with common, well-represented topics and least reliable with obscure or recent information.

You'll understand its limitations. The model can't truly reason, can't access information beyond its training data (unless given tools), and generates text one token at a time without a grand plan. These aren't bugs — they're features of the architecture. Working with them rather than against them is the key to effective AI use.

You'll stop being mystified. Knowing what's happening under the hood — even at this high level — removes the mystique. AI is impressive engineering, not magic. And impressive engineering can be understood, used effectively, and improved upon.

Key Takeaways

- Your text is broken into tokens (small pieces) and converted to numbers before the model processes it

- The model predicts the most likely next token based on patterns from its training data — one token at a time

- Controlled randomness (temperature) makes responses varied rather than identical every time

- The model doesn't search, retrieve, understand, or reason — it generates statistically likely text continuations

- Understanding this process helps you write better prompts and set realistic expectations

Practical Exercise

Watch the generation process in action.

Open an AI chatbot (ChatGPT, Claude, or another) and try the following:

-

Ask it to "Write a 200-word paragraph about Wellington's waterfront." Watch the response appear — notice how it streams word by word? That's token-by-token generation in real time.

-

Send the exact same prompt again. Compare the two responses. They should be similar in topic but different in specific wording — that's the temperature randomness at work.

-

Now try a more specific prompt: "Write a 200-word paragraph about Wellington's waterfront, focusing on the wind, and include the phrase 'horizontal rain'." Notice how the added detail steers the output in a particular direction — you're guiding the probability distribution.

Write a short note on what you observed. How did the responses differ between the vague and specific prompts? What does this tell you about how context affects the model's predictions?

Knowledge Check

1. How does a large language model generate its response?

a) By searching a database of pre-written answers

b) By predicting the most likely next token, one at a time, based on patterns from training data

c) By copying and pasting from websites

d) By understanding the question and reasoning through the answer

Answer: b) LLMs generate text one token at a time, predicting the most likely next token based on statistical patterns learned during training.

2. What is "temperature" in the context of AI text generation?

a) The physical heat generated by the computer

b) A setting that controls how much randomness is in the token selection process

c) A measure of how fast the AI generates text

d) The emotional tone of the AI's response

Answer: b) Temperature controls the randomness of token selection — low temperature means more predictable responses, high temperature means more creative and varied ones.

3. Why might you get a different response when sending the same prompt to an AI twice?

a) The AI has changed its mind

b) The AI searches different websites each time

c) Controlled randomness in the token selection means different probable tokens get chosen

d) Someone at the AI company manually rewrites responses

Answer: c) The temperature setting introduces controlled randomness, so the model may choose different tokens from the pool of probable options each time.

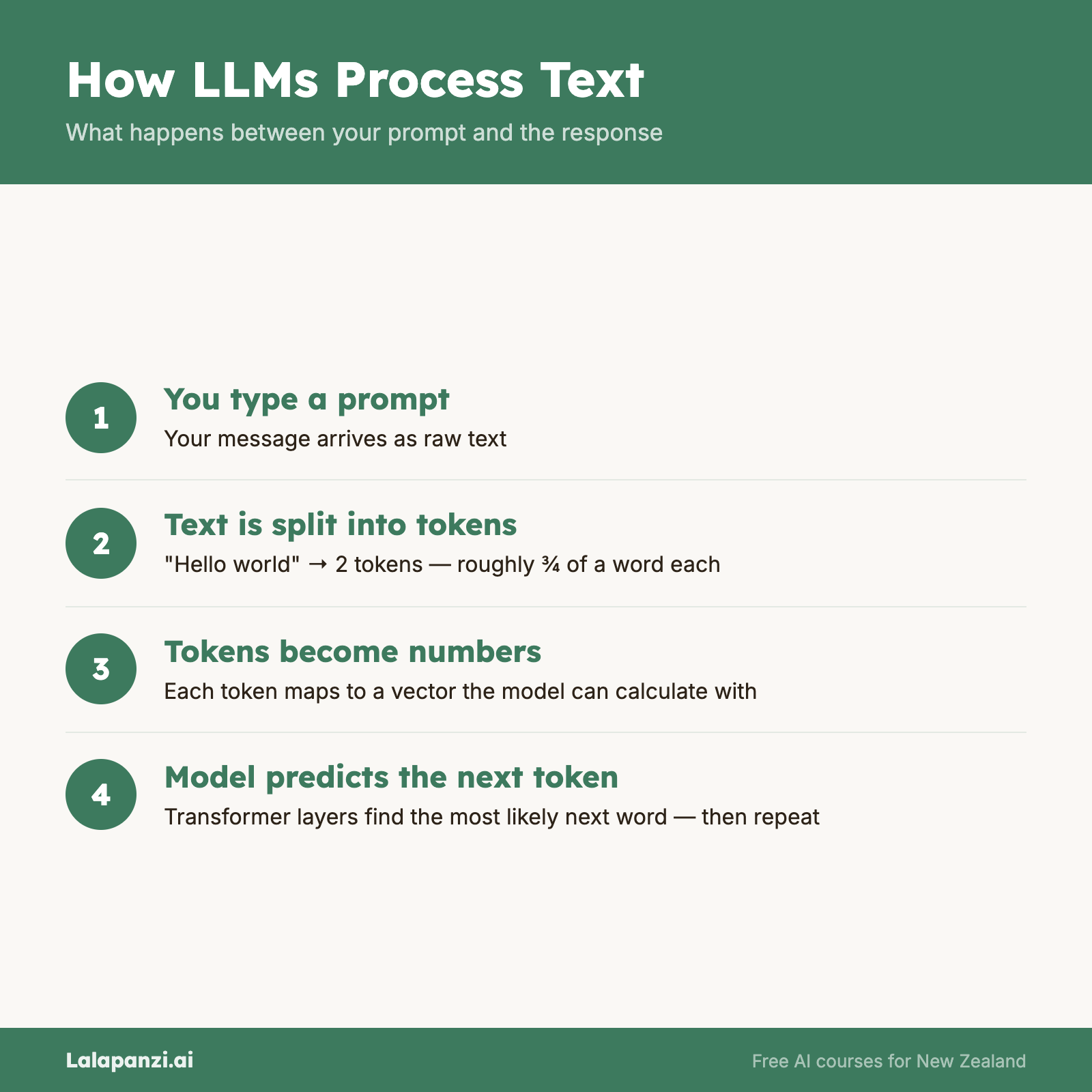

Visual overview