Why AI Hallucinates and What to Do About It

Listen to this lesson

Lesson 2.4 — Why AI Hallucinates and What to Do About It

Estimated reading time: 8 minutes

The confidence problem

Here's something unsettling about AI: it can be completely wrong and sound completely certain about it. It won't hedge. It won't say "I made that up." It will state a fabricated fact, a non-existent citation, or an invented statistic with the same smooth confidence it uses for things that are true.

This is called hallucination, and it's not a bug that's about to be fixed. It's a fundamental feature of how these systems work. Understanding why it happens — and what to do about it — is one of the most practically important things in this entire course.

What is hallucination?

AI hallucination is when a model generates information that sounds plausible but is factually incorrect, made up, or nonsensical.

Examples include:

- Invented facts — "New Zealand became a republic in 2019" (it didn't)

- Fake citations — providing academic paper titles that don't exist, complete with plausible-sounding authors and journals

- Fabricated statistics — "73% of New Zealand businesses adopted AI in 2024" (a made-up number)

- Confident errors — getting dates, names, or details wrong while presenting them as certain

- Plausible nonsense — generating explanations that sound logical but are fundamentally incorrect

The term "hallucination" isn't perfect — it implies the model is perceiving something that isn't there, which anthropomorphises it. The model isn't seeing things. It's generating statistically plausible text that happens to be false. But the term has stuck, and it's widely understood.

Why it happens

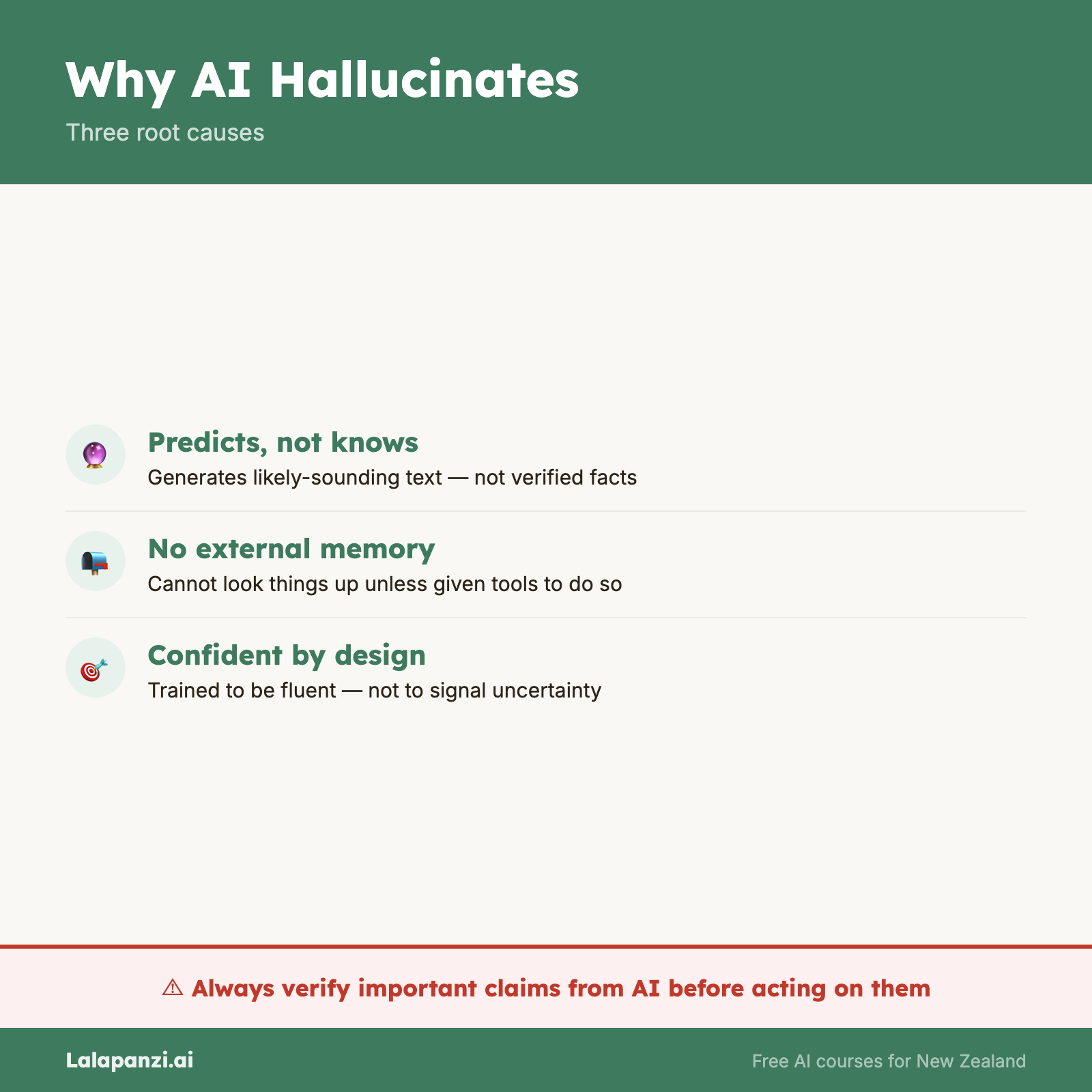

Hallucination isn't a glitch. It's a natural consequence of how language models work. Three factors drive it:

1. The model generates probable text, not true text

As we covered in Lesson 2.1, LLMs predict the most likely next token based on patterns in their training data. "Likely" and "true" are not the same thing. A sequence of words can be highly probable — fitting perfectly into the statistical patterns the model has learned — while being completely false.

If you ask for a citation on a specific topic, the model knows what citations look like: an author name, a year, a journal title, an article title. It can generate all of those elements with perfect formatting. But it's generating them from patterns, not looking them up. The result looks exactly like a real citation — because structurally, it is — but the specific paper may never have existed.

2. The model can't say "I don't know" naturally

Humans recognise the boundaries of their knowledge. You know when you're guessing versus when you're certain. LLMs don't have this self-awareness. They don't have an internal signal that says "I'm uncertain about this — I should say so."

When an LLM encounters a question it doesn't have good training data for, it doesn't fall silent or flag uncertainty. It generates the most likely completion, which often means producing a confident-sounding response based on whatever tangential patterns it can find.

Training techniques like RLHF (Lesson 2.2) have improved this — modern models are more likely to say "I'm not sure" than earlier ones — but the underlying tendency to generate confident text regardless of accuracy remains.

3. No fact-checking mechanism

There's no built-in verification step. The model doesn't generate a response and then check it against a database of facts before sending it to you. It produces text and delivers it. Full stop.

Some systems are now adding retrieval mechanisms (searching the web or a knowledge base before responding), which helps significantly. But the base model itself has no concept of "let me verify this first."

When hallucination is most likely

Not all queries are equally prone to hallucination. It's useful to know where the risk is highest:

Higher risk:

- Specific facts: dates, numbers, statistics, names

- Citations and references

- Recent events (after the training cutoff)

- Niche topics with little training data

- Questions about specific people, especially non-famous individuals

- Legal, medical, or financial specifics

- Claims about specific NZ regulations or policies

Lower risk:

- General explanations of well-known concepts

- Common knowledge widely represented in training data

- Creative writing and brainstorming (where "correctness" isn't the point)

- Structural tasks: formatting, organising, summarising provided text

- Programming in popular languages with common patterns

What to do about it

Hallucination isn't going away completely. But there's a lot you can do to manage it:

1. Verify anything that matters

This is the golden rule. If the information will be used in a decision, shared publicly, or referenced professionally, check it independently. Don't trust an AI-generated statistic without finding the source. Don't use an AI-generated citation without confirming the paper exists.

2. Ask for sources — then check them

You can ask AI to provide sources for its claims. This is useful, but with a critical caveat: the sources themselves might be hallucinated. Always follow the link or search for the reference independently. A real source that backs up the claim is valuable. A fabricated source is worse than no source at all.

3. Provide the facts yourself

One of the most effective strategies is to give the model the information you want it to work with, rather than asking it to provide information. Instead of "What are the key statistics on AI adoption in NZ?", try "Here are three statistics on AI adoption in NZ: [paste your data]. Write a paragraph incorporating these."

When you supply the facts, the model's job becomes language and structure — tasks it's genuinely good at — rather than fact recall, which is where it stumbles.

4. Use specific, constrained prompts

Vague prompts invite hallucination. "Tell me about the history of Auckland" gives the model enormous space to fill, increasing the chance of fabricated details. "Summarise the following text about Auckland's founding in 1840" constrains the task to material you've provided.

5. Watch for the warning signs

With practice, you'll develop an instinct for suspicious outputs:

- Suspiciously round numbers ("exactly 75% of...")

- Overly specific details that seem too perfect

- Citations you've never heard of on well-covered topics

- Confident claims about recent events

- Information that seems to perfectly support the argument (too convenient)

6. Use AI tools with retrieval

Some AI tools can search the web or access specific databases before responding. These retrieval-augmented systems are significantly less prone to hallucination on factual questions because they're working from actual sources rather than memory alone.

The right mental model

Think of AI as a brilliant, articulate colleague who has read extensively but has a peculiar condition: they can't distinguish between things they actually know and things they're constructing on the spot. They're not lying — they genuinely can't tell the difference. They're always doing their best.

You'd still value this colleague's help. Their writing would be excellent. Their brainstorming would be creative. Their summaries would be clear. But you'd always double-check their facts before putting them in a report.

That's the right relationship to have with AI.

Key Takeaways

- Hallucination is when AI generates plausible but incorrect information — it's a fundamental feature, not a temporary bug

- It happens because the model predicts probable text, not true text — and lacks the ability to verify its own output

- Factual claims, citations, statistics, and recent events are highest risk — always verify these

- Providing facts to the model (rather than asking it to supply them) dramatically reduces hallucination

- Treat AI as a brilliant colleague with a fact-checking problem — valuable, but verify before you trust

Practical Exercise

Find a hallucination.

This exercise has a specific goal: experience hallucination firsthand so you can recognise it in future.

-

Ask an AI chatbot a factual question on a topic you know well. Something specific enough that you can verify the answer. For example:

- A specific fact about your profession

- A historical detail about your local area

- A specific claim about a NZ law or regulation you're familiar with

-

Ask it to provide citations or references for its answer.

-

Check every fact and every reference independently. Use Google, library databases, or your own knowledge.

-

Write down:

- What was accurate?

- What was incorrect?

- Were any citations fabricated?

- How confident did the AI sound about the incorrect information?

If everything was accurate — good! Try a more obscure or specific topic and repeat. The point is to experience the moment when confident-sounding AI text turns out to be wrong. Once you've felt that, you'll never fully trust AI output without checking again.

Knowledge Check

1. What is AI hallucination?

a) When an AI system has a visual malfunction

b) When an AI generates plausible-sounding information that is factually incorrect or fabricated

c) When an AI becomes confused and stops responding

d) When a user misreads an AI's response

Answer: b) Hallucination is when an AI produces text that sounds convincing but is factually wrong, made up, or nonsensical — and presents it with confidence.

2. Why do AI models hallucinate?

a) Because they are poorly made

b) Because they predict statistically probable text, which isn't always factually true, and lack a fact-checking mechanism

c) Because they deliberately try to mislead users

d) Because they run out of memory

Answer: b) LLMs generate the most likely text continuation based on patterns, not verified facts. Combined with no internal verification, this naturally produces hallucinations.

3. What is the most effective way to reduce hallucination when using AI?

a) Ask the AI to promise it won't hallucinate

b) Only use AI for non-factual tasks

c) Provide the facts yourself and have the AI work with the information you supply

d) Use the most expensive AI model available

Answer: c) When you supply the facts, the AI's task becomes language and structure rather than fact recall — playing to its strengths while avoiding its biggest weakness.

Visual overview