Brief History: From Concept to ChatGPT


Lesson 1.2 — Brief History: From Concept to ChatGPT

Estimated reading time: 8 minutes

Why history matters

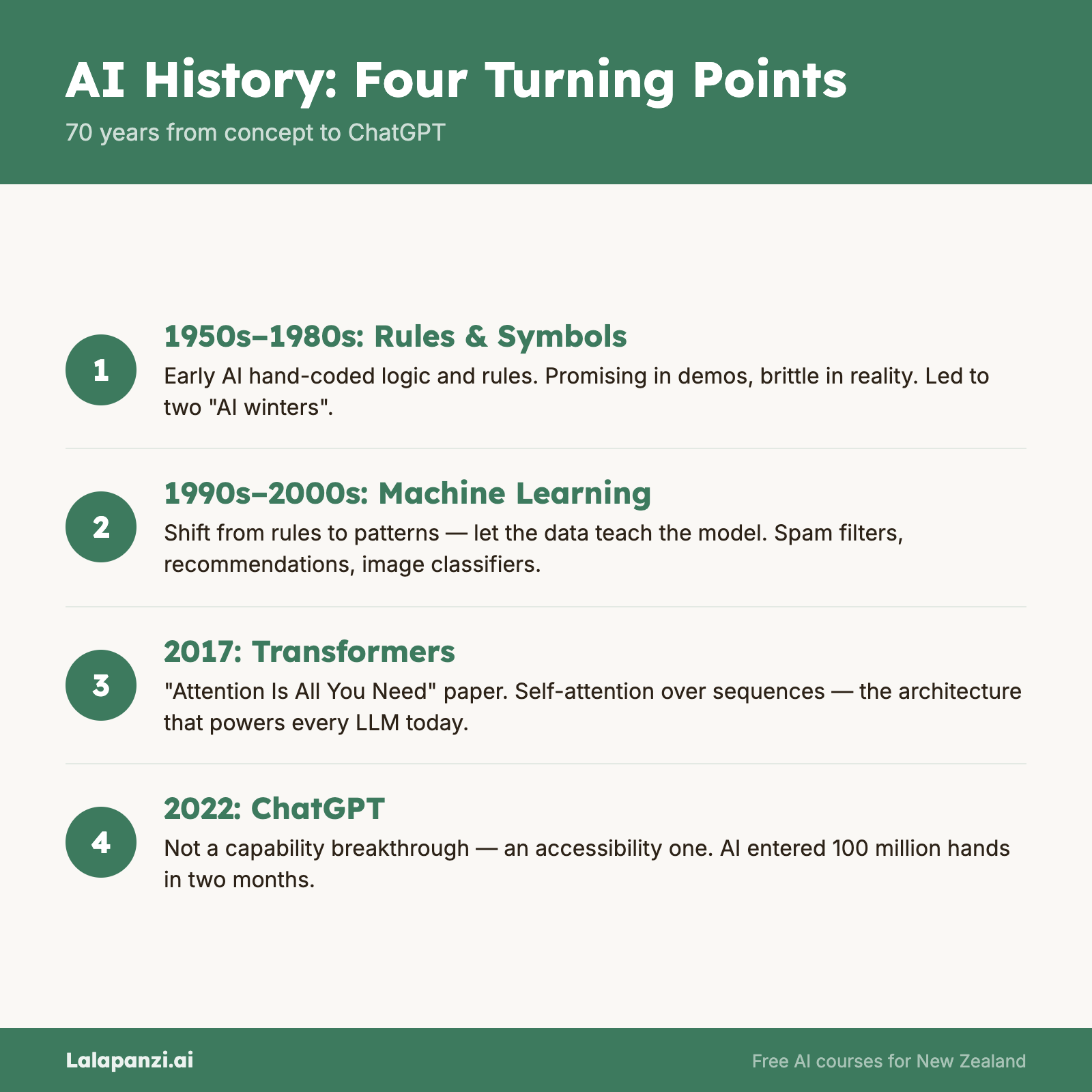

You don't need to memorise dates to use AI well. But understanding where this technology came from helps you see the current moment clearly. AI isn't a sudden invention — it's the result of seventy years of work, false starts, breakthroughs, and a few lucky timing coincidences.

Knowing the history also helps you spot hype. We've been here before — twice — and understanding those earlier waves of excitement (and disappointment) gives you a healthy perspective on today's promises.

The idea: 1940s–1950s

The story starts with a question: Can machines think?

In 1950, British mathematician Alan Turing published a paper asking exactly that. He proposed what we now call the Turing Test — if a human can't tell whether they're chatting with a machine or a person, the machine can be considered intelligent. It's a surprisingly useful thought experiment, and people still argue about it today.

In 1956, a group of researchers held a summer workshop at Dartmouth College in the United States. The proposal for that workshop, written the year before, introduced the term "artificial intelligence." They were optimistic. Wildly optimistic, as it turned out. They thought machines might match human intelligence within a generation.

They were wrong by about seven decades (and counting). But they started something real.

Early promise: 1960s–1970s

The first AI programmes were impressive for their time. Computers could play chess (badly), solve algebra problems, and even hold basic conversations. A programme called ELIZA, created in 1966, could mimic a therapist's responses by reflecting your own words back at you. People found it surprisingly convincing — a very early hint of what was to come.
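To make the "reflecting your own words back" trick concrete, here is a minimal sketch in Python. It is not ELIZA's actual script: the pronoun table, the single "I feel" pattern, and the function names are all assumptions chosen for illustration.

```python
import re

# Hypothetical pronoun swaps for illustration; ELIZA's real script was larger.
REFLECTIONS = {"i": "you", "my": "your", "me": "you", "am": "are"}


def reflect(text: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    words = text.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(statement: str) -> str:
    """Turn a statement into a question that echoes the speaker's own words."""
    match = re.match(r"i feel (.+)", statement.strip(), re.IGNORECASE)
    if match:
        return f"Why do you feel {reflect(match.group(1))}?"
    # Fallback when no pattern matches: echo the whole statement back.
    return f"Tell me more about why you say: {reflect(statement)}."


print(respond("I feel ignored by my boss."))
```

Nothing here understands the sentence. The programme only matches a pattern and swaps a few words, which is why the effect is convincing for a moment and then falls apart.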

Governments poured money into AI research. The future seemed bright.

Then reality hit.

The first AI winter: mid-1970s–early 1980s

Researchers realised the problems were vastly harder than anyone expected. Getting a computer to recognise a face, understand a sentence, or navigate a room turned out to be extraordinarily difficult. The ambitious promises went unfulfilled, funding dried up, and interest faded.

This period is called the first AI winter — a time when AI research went quiet and the term itself became almost embarrassing in academic circles.

Expert systems and the second wave: 1980s

AI bounced back in the 1980s with expert systems — programmes that encoded human expertise as rules. A medical expert system might contain thousands of if-then rules: "If the patient has a fever AND a rash AND recent travel to a tropical region, THEN consider malaria."

These were genuinely useful in specific domains. Companies invested heavily. AI was hot again.

And then, again, the limitations became clear. Expert systems were brittle, expensive to build, and couldn't handle anything outside their narrow rule set. Funding dropped. The second AI winter arrived in the late 1980s and early 1990s.
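The if-then rule quoted above can be sketched in a few lines of Python. This is an illustration of the general idea rather than any real expert system: the rule table, the finding names, and the `suggest` function are assumptions, and the second rule is invented purely to show that rules are stacked one after another.

```python
# Each rule pairs the findings it requires with the suggestion it produces.
RULES = [
    ({"fever", "rash", "tropical_travel"}, "consider malaria"),  # rule from the lesson
    ({"fever", "cough"}, "consider influenza"),  # hypothetical extra rule
]


def suggest(findings: set[str]) -> list[str]:
    """Return the suggestion of every rule whose required findings are all present."""
    return [conclusion for required, conclusion in RULES if required <= findings]


print(suggest({"fever", "rash", "tropical_travel"}))  # the malaria rule fires
print(suggest({"headache", "fatigue"}))  # no rule covers this, so nothing comes back
```

The second call shows the brittleness: anything the rule authors did not anticipate produces no answer at all, and the only fix is for a person to write more rules.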

The quiet revolution: 1990s–2000s

Here's where it gets interesting, because the most important AI developments happened while nobody was calling them AI.

Machine learning, the idea that systems could learn from data rather than following hand-coded rules, started producing real results. Search engines began using it to help rank results. Amazon used it to recommend products. Spam filters used it to catch junk email.

These didn't feel like "artificial intelligence" to most people. They felt like features. But underneath, the fundamental approach had shifted from writing rules to learning from data. This turned out to be the key that opened up everything that followed.
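Here is a rough sketch of what "learning from data" means for a spam filter. Instead of someone writing a rule such as "block any message containing 'free'", the programme counts which words turn up in messages people have already labelled. The four example messages, the labels, and the function names are made up for illustration, and simple word counting is far cruder than the statistical filters used in practice.

```python
from collections import Counter


def train(examples):
    """Count how often each word appears in spam and in non-spam ("ham") messages."""
    counts = {"spam": Counter(), "ham": Counter()}
    for text, label in examples:
        counts[label].update(text.lower().split())
    return counts


def looks_like_spam(text, counts):
    """Flag a message whose words were seen more often in spam than in ham."""
    words = text.lower().split()
    spam_score = sum(counts["spam"][word] for word in words)
    ham_score = sum(counts["ham"][word] for word in words)
    return spam_score > ham_score


# Hypothetical labelled examples; a real filter would learn from many thousands.
EXAMPLES = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("lunch meeting at noon", "ham"),
    ("notes from the meeting", "ham"),
]

counts = train(EXAMPLES)
print(looks_like_spam("claim your free prize", counts))
print(looks_like_spam("meeting notes attached", counts))
```

No one told the programme that "free" is suspicious. It picked that up from the examples, and giving it more examples changes its behaviour without anyone rewriting the code.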

Meanwhile, computing power kept increasing, and the internet was generating vast quantities of data for systems to learn from. Two critical ingredients were falling into place.

Deep learning takes off: 2010s

In 2012, a system called AlexNet won an image recognition competition by a huge margin, using an approach called deep learning — essentially, machine learning using large neural networks with many layers. This result shocked the field and kicked off a gold rush.

Suddenly, deep learning was solving problems that had resisted decades of effort:

- Image recognition went from unreliable to matching or beating human accuracy on some benchmark tests

- Speech recognition became good enough for Siri and Alexa

- Translation improved dramatically (Google Translate got noticeably better around 2016)

- Game-playing AI: in 2016, Google DeepMind's AlphaGo defeated Lee Sedol, one of the world's strongest players, at Go, a game long considered too complex for computers

Tech companies hired every AI researcher they could find. GPUs (graphics processing units, originally built for video games) turned out to be perfect for training neural networks. The infrastructure was coming together.

The transformer moment: 2017

In 2017, researchers at Google published a paper called "Attention Is All You Need." It introduced a new architecture called the transformer, which processed language far more effectively than previous approaches.

This paper — technical, understated, and only eleven pages long — turned out to be one of the most consequential publications in the history of computing. The transformer architecture is the foundation of ChatGPT, Claude, Gemini, and virtually every large language model in use today.

The name "GPT" literally stands for Generative Pre-trained Transformer.

The ChatGPT moment: 2022

OpenAI had been building increasingly capable language models — GPT-1 (2018), GPT-2 (2019), GPT-3 (2020). These were impressive but mostly used by developers and researchers.

Then, on 30 November 2022, OpenAI released ChatGPT — a free chatbot interface that anyone could use. You typed a question in plain English and got a coherent, often useful response.

It reached an estimated 100 million monthly users in about two months. For context, Instagram took roughly two and a half years to hit the same number.

ChatGPT didn't represent a sudden breakthrough in AI capability: it ran on a refined version of GPT-3, which had been available to developers since 2020. What it represented was a breakthrough in accessibility. For the first time, anyone with an internet connection could interact with a powerful AI system using everyday language.

Where we are now: 2023–2026

Since ChatGPT's launch, the pace has been relentless:

- GPT-4 (2023) brought significant improvements in reasoning and accuracy

- Claude (Anthropic) and Gemini (Google) emerged as strong alternatives

- Open-source models made AI accessible to developers worldwide

- Multimodal AI can now process text, images, audio, and video

- AI agents are actively performing multi-step tasks — writing code, browsing the web, and managing workflows autonomously

- Businesses across every sector — including here in Aotearoa — are integrating AI into daily operations

We're in what might be called the third wave of AI, and unlike the previous two, this one is backed by real, working products that millions of people use every day. That doesn't mean the hype is entirely justified — there's still plenty of overselling happening. But the underlying technology is genuinely useful in a way it wasn't before.

The pattern to notice

Every wave of AI has followed the same pattern: breakthrough → hype → over-promise → disappointment → quiet progress → the next breakthrough.

Understanding this cycle is useful. It means you can appreciate what AI does well today without assuming it will do everything tomorrow. The technology is real and valuable. It's also not magic, and it has limits.

That's the honest picture. Let's keep building on it.

Key Takeaways

- AI has a 70-year history — it's not a sudden invention but the result of decades of research, setbacks, and breakthroughs

- Two "AI winters" happened when promises outpaced reality — a useful reminder to stay grounded

- The shift from rules to learning from data (machine learning) was the critical turning point

- The transformer architecture (2017) is the foundation of today's large language models

- ChatGPT's breakthrough was accessibility, not capability — it made existing AI technology available to everyone

Practical Exercise

Create your own AI timeline.

Using what you've learned in this lesson (and a quick web search if you'd like), create a simple timeline of AI milestones that matter to you personally. Include:

- At least 5 events from the lesson

- The first time you personally used something that was AI-powered (even if you didn't know it at the time — think spam filters, voice assistants, recommendations)

- The first time you tried a tool like ChatGPT or another AI chatbot

Write a short paragraph reflecting on this question: Were you using AI before you realised you were? Most people were — and that's an important thing to notice.

Knowledge Check

1. What is an "AI winter"?

a) A season when AI works less effectively due to cold weather

b) A period when AI research funding and interest declined after overhyped promises

c) A cooling system required to run AI hardware

d) A type of AI designed for weather prediction

Answer: b) AI winters were periods when excitement faded because the technology couldn't deliver on its promises, leading to reduced funding and interest.

2. What was the key significance of ChatGPT's launch in November 2022?

a) It was the first AI system ever created

b) It was the first AI to pass the Turing Test

c) It made powerful AI accessible to anyone through a simple chat interface

d) It replaced all other AI systems

Answer: c) ChatGPT's breakthrough was accessibility — it let anyone interact with a large language model using everyday language, reaching 100 million users in two months.

3. What does GPT stand for?

a) General Purpose Technology

b) Generative Pre-trained Transformer

c) Global Processing Tool

d) Generated Predictive Text

Answer: b) GPT stands for Generative Pre-trained Transformer — referencing the transformer architecture introduced in 2017.
