Training Data: Where It Comes From, Why It Matters


Lesson 2.2 — Training Data: Where It Comes From, Why It Matters

Estimated reading time: 8 minutes

The fuel that makes AI go

An AI model is only as good as the data it was trained on. This isn't a throwaway line — it's arguably the most important thing to understand about how AI works in practice. Training data shapes everything: what the model knows, what it gets wrong, whose perspectives it reflects, and whose it ignores.

If you understand training data, you understand most of AI's strengths and weaknesses. So let's dig in.

What is training data?

Training data is the information that an AI system learns from. For large language models like ChatGPT and Claude, the training data is text — vast, almost incomprehensible amounts of text.

We're talking about:

- Web pages — billions of them, crawled and archived

- Books — fiction and non-fiction, covering every topic imaginable

- Wikipedia — a popular training source because it's well-structured and covers enormous breadth

- Academic papers — research across every discipline

- News articles — from publications around the world

- Forums and discussions — Reddit, Stack Overflow, and similar platforms

- Code repositories — GitHub and other sources of programming code

- Government and legal documents — publicly available records

The exact composition varies by model, and most AI companies don't fully disclose what's in their training data. But the general picture is consistent: LLMs are trained on a very large portion of the publicly available text on the internet, supplemented with books and other text sources.

To put the scale in perspective: GPT-3 was trained on roughly 300 billion tokens (words and word fragments), drawn largely from about 570 GB of filtered web text. More recent models use significantly more. If you read 24 hours a day, it would take you around two thousand years to read what these models trained on in weeks.
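A rough back-of-the-envelope check on that reading-time claim. This sketch treats the 300 billion figure as a word count for simplicity, and the reading speed of 250 words per minute is an assumption, not a figure from the lesson.

```python
# Rough estimate only: how long would non-stop reading take?
WORDS = 300_000_000_000      # the 300 billion figure quoted above, treated as words
WORDS_PER_MINUTE = 250       # assumed reading speed for an adult

def years_to_read(words, wpm=WORDS_PER_MINUTE):
    """Years of reading 24 hours a day, 365 days a year."""
    minutes = words / wpm
    return minutes / (60 * 24 * 365)

print(round(years_to_read(WORDS)))  # a little under 2,300 years
```

Halving or doubling the assumed reading speed changes the answer, but it stays in the range of thousands of years.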

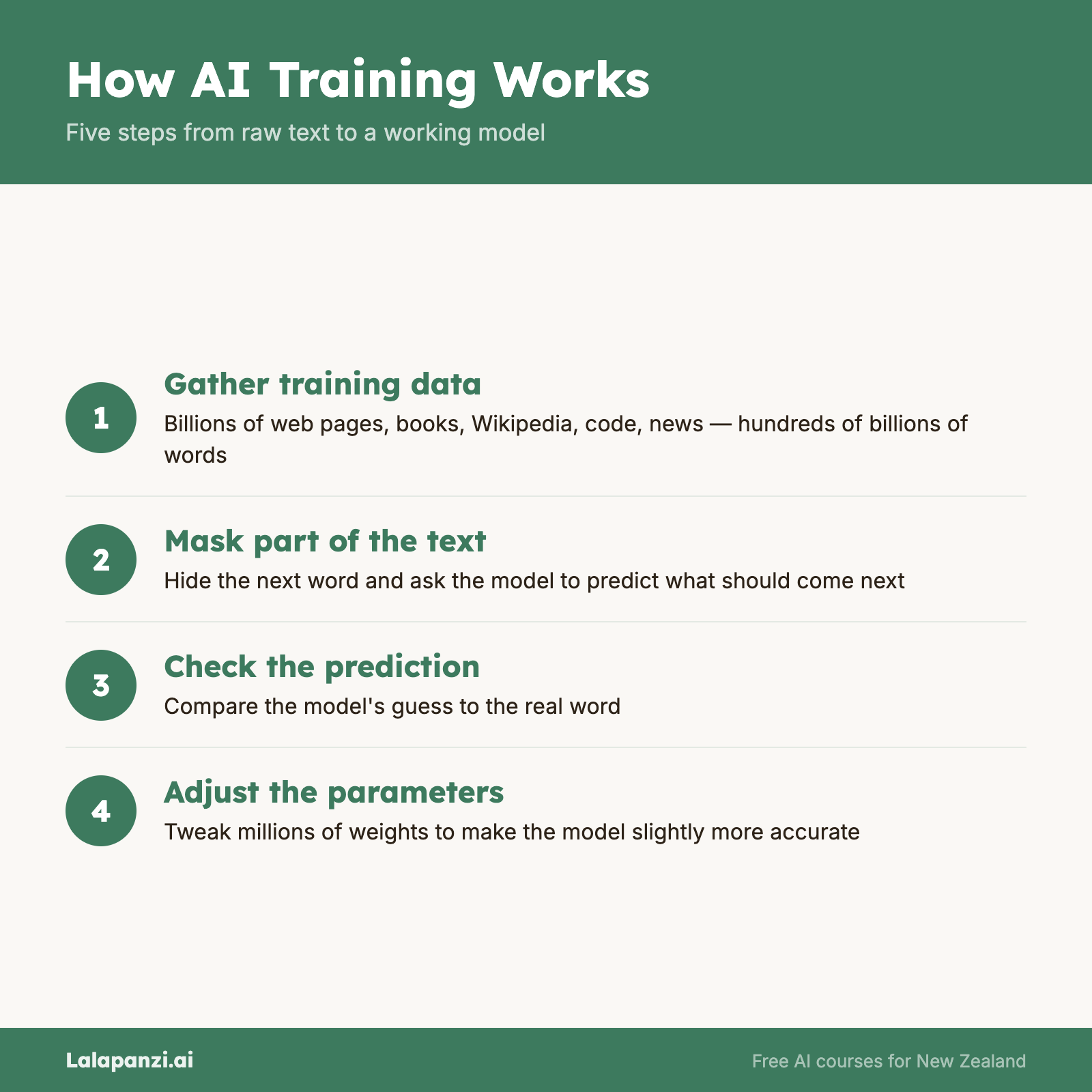

How training works (simplified)

The training process, stripped to its essence, works like this:

- Show the model a huge amount of text

- Mask part of it — hide the next word (or words) and ask the model to predict what comes next

- Check if it was right — compare the prediction to the actual text

- Adjust — tweak the model's parameters to make it slightly better at predicting next time

- Repeat — billions of times, across the entire training dataset

Through this process, the model learns the statistical patterns of language: which words tend to follow which other words, how sentences are structured, what kinds of responses follow what kinds of questions, how different topics are typically discussed.
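The predict, check, adjust, repeat loop can be sketched in a few lines of Python. This is a toy under heavy assumptions: it looks only at the previous word, uses a twelve-word invented sentence, and keeps one adjustable score per word pair. Real LLMs use neural networks with billions of parameters, and the function names here are made up for illustration.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def train_next_word_model(text, epochs=200, lr=0.1, seed=0):
    words = text.split()
    vocab = sorted(set(words))
    index = {w: i for i, w in enumerate(vocab)}
    rng = random.Random(seed)
    # Parameters: one score for every (previous word, next word) pair.
    weights = [[rng.uniform(-0.01, 0.01) for _ in vocab] for _ in vocab]
    pairs = [(index[a], index[b]) for a, b in zip(words, words[1:])]
    losses = []
    for _ in range(epochs):                      # repeat
        total = 0.0
        for prev, actual in pairs:
            probs = softmax(weights[prev])       # predict the next word
            total += -math.log(probs[actual])    # check: how wrong was it?
            for j in range(len(vocab)):          # adjust the parameters slightly
                target = 1.0 if j == actual else 0.0
                weights[prev][j] -= lr * (probs[j] - target)
        losses.append(total / len(pairs))
    return vocab, index, weights, losses

def predict_next(word, vocab, index, weights):
    probs = softmax(weights[index[word]])
    return vocab[probs.index(max(probs))]

corpus = "the cat sat on the mat and the cat ate the fish"
vocab, index, weights, losses = train_next_word_model(corpus)
print("average error at start:", round(losses[0], 2))
print("average error at end:  ", round(losses[-1], 2))
print("after 'the' the model predicts:", predict_next("the", vocab, index, weights))
```

In the toy text, "the" is followed by "cat" more often than by anything else, so that is the pattern the scores end up encoding. Nothing in `weights` is a copy of the sentence itself.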

The model doesn't store the training data as a retrievable copy. You generally can't ask ChatGPT to recite a specific book, though it can reproduce memorised fragments, especially of text that appears many times in its training data. What it mainly stores is the patterns: compressed, abstracted, and distributed across its parameters.

Why training data matters so much

It determines what the model knows

If something wasn't well-represented in the training data, the model won't handle it well. This has practical consequences:

- English dominates — LLMs perform much better in English than in te reo Māori, for example, because there's vastly more English text in the training data

- Popular topics are better covered — ask about common programming languages and you'll get excellent help; ask about niche NZ regulatory frameworks and the quality drops

- Recent events are missing — training data has a cutoff date, so the model doesn't know about things that happened after training was complete

It determines the model's biases

Training data reflects the internet, and the internet reflects human society — including its biases. If the training data contains more text by and about certain groups, the model will be more fluent and nuanced when discussing those groups and less so for others.

Documented biases in LLMs include:

- Gender stereotypes — associating certain professions with specific genders

- Cultural bias — defaulting to American or Western perspectives on topics

- Representation gaps — less nuanced handling of minority viewpoints, indigenous knowledge, or non-Western cultural contexts

- Language quality variation — performing better in well-resourced languages and worse in under-resourced ones

This isn't a failure of the technology per se — it's a reflection of what was in the training data. But the practical effect is the same: AI output can perpetuate biases, and you need to watch for this.
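One simple way to see how skewed text produces skewed associations is to count which pronouns appear alongside which professions. The sketch below uses six invented sentences as a stand-in for training data. The sentences, the function name, and the choice of "he" and "she" are all assumptions for illustration, not a real bias audit.

```python
import re
from collections import Counter

def pronoun_counts(sentences, professions, pronouns=("he", "she")):
    """Count how often each pronoun shares a sentence with each profession."""
    counts = {p: Counter() for p in professions}
    for sentence in sentences:
        tokens = re.findall(r"[a-z']+", sentence.lower())
        for profession in professions:
            if profession in tokens:
                for pronoun in pronouns:
                    counts[profession][pronoun] += tokens.count(pronoun)
    return counts

# Invented toy corpus, deliberately skewed.
toy_corpus = [
    "The engineer said he would fix the bridge.",
    "The nurse said she would check the chart.",
    "The engineer explained that he was late.",
    "The nurse said he was on night shift.",
    "The engineer said she designed the pump.",
    "The nurse noted she had finished rounds.",
]

for profession, counter in pronoun_counts(toy_corpus, ["engineer", "nurse"]).items():
    print(profession, dict(counter))
```

A model trained on text with this kind of imbalance has no way of knowing the imbalance is a stereotype rather than a fact about the world. It simply learns the pattern.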

It raises questions about consent and copyright

Much of the text used to train AI models was scraped from the internet without explicit permission from the authors. This has created significant legal and ethical debates:

- Authors and publishers argue their copyrighted works were used without consent or compensation

- Visual artists have raised similar concerns about image-generation models trained on their art

- News organisations are suing AI companies for using their articles in training data

- Open-source contributors question whether their code should train commercial products

As of early 2026, these legal questions are still being resolved in courts around the world. The NZ legal framework hasn't specifically addressed AI training data yet, but it's likely to in the coming years.

For you as a user, the practical point is this: the AI's knowledge came from somewhere, and the ethics of that process are genuinely complicated. It's worth being aware of.

The training pipeline

Training a modern LLM typically involves several stages:

1. Pre-training — The model learns language patterns from the massive text dataset. This is the most expensive stage, costing millions of dollars in computing.

2. Fine-tuning — The pre-trained model is further trained on a more curated, higher-quality dataset to improve its performance on specific tasks (like following instructions or having conversations).

3. RLHF (Reinforcement Learning from Human Feedback) — Human reviewers rate the model's responses, and the model is adjusted to produce responses that humans find more helpful, accurate, and appropriate. This is what makes modern chatbots feel helpful and polite rather than raw and erratic.

Each stage shapes the final product. Pre-training gives the model knowledge. Fine-tuning gives it focus. RLHF gives it manners.
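The feedback idea behind the third stage can be illustrated with a very loose sketch. It is not RLHF as practised, which typically trains a separate reward model and then applies reinforcement learning. It only shows the core intuition that human ratings push some kinds of response up and others down. The response labels, the 1 to 5 rating scale, and the update rule are all assumptions.

```python
def apply_feedback(scores, ratings, lr=0.1, neutral=3):
    """Nudge each response's score up or down according to its 1-5 human rating."""
    updated = dict(scores)
    for response, rating in ratings:
        updated[response] += lr * (rating - neutral)
    return updated

# Hypothetical starting point: the model has no preference between styles.
scores = {
    "polite and accurate": 0.0,
    "rude but accurate": 0.0,
    "confident but wrong": 0.0,
}

# Hypothetical reviewer ratings (1 = poor, 5 = excellent).
ratings = [
    ("polite and accurate", 5),
    ("rude but accurate", 1),
    ("confident but wrong", 2),
    ("polite and accurate", 4),
]

scores = apply_feedback(scores, ratings)
preferred = max(scores, key=scores.get)
print("style the adjusted model now favours:", preferred)
```

After enough rounds of feedback like this, the styles reviewers rate highly come to dominate what the model produces.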

What this means for you

1. Check the model's knowledge boundaries. If you're asking about something niche, recent, or from a non-English context, be extra cautious about the response. The model may have limited training data on that topic.

2. Watch for cultural defaults. If you ask for advice on employment law without specifying New Zealand, you'll likely get US-centric information. Always provide context, especially for location-specific questions.

3. Be aware of bias. If you're using AI to draft job descriptions, evaluate applications, or create content about people, review the output for unintentional bias. The model's defaults may not reflect your values.

4. Understand that knowledge is frozen. The model's base knowledge has a cutoff date. For current information, you need a model with web search capability, or you need to verify independently.
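Points 2 and 4 can be turned into small habits. The sketch below shows one way to prepend location and date context to a question, and a simple check for whether something falls after a model's training cutoff. The function names and the cutoff date are hypothetical. Look up the real cutoff for whichever model you use.

```python
from datetime import date

def add_context(question, country=None, as_of=None):
    """Prepend location and date context so the model doesn't fall back on defaults."""
    parts = []
    if country:
        parts.append(f"Context: I am asking about {country}.")
    if as_of:
        parts.append(f"Today's date is {as_of.isoformat()}.")
    parts.append(question)
    return " ".join(parts)

def needs_independent_check(event_date, cutoff_date):
    """True if the event happened after the model's training cutoff."""
    return event_date > cutoff_date

ASSUMED_CUTOFF = date(2025, 1, 1)  # hypothetical cutoff, for illustration only

prompt = add_context(
    "What notice period applies when an employee resigns?",
    country="New Zealand",
    as_of=date(2026, 2, 1),
)
print(prompt)
print(needs_independent_check(date(2025, 6, 15), ASSUMED_CUTOFF))
```

The same question without the country would most likely be answered from a US perspective, which is the cultural default described in point 2.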

Key Takeaways

- Training data is the text that AI models learn from: typically hundreds of billions of words from the internet, books, and other sources

- The model learns patterns rather than storing text: it doesn't keep the data itself but encodes statistical patterns across its parameters

- Training data determines what the model knows, doesn't know, and gets wrong — including biases

- The training pipeline has stages: pre-training (broad knowledge), fine-tuning (focused skills), and RLHF (helpful behaviour)

- Always provide context — especially for NZ-specific or niche topics where training data may be sparse

Practical Exercise

Test the training data boundaries.

Use an AI chatbot to explore where its knowledge is strong and where it's thin. Try these prompts and rate the quality of each response (1 = poor/wrong, 5 = excellent):

- "Explain the offside rule in football" (well-represented topic)

- "What are the main differences between ACC and private health insurance in New Zealand?" (NZ-specific)

- "Explain the concept of kaitiakitanga in the context of environmental management" (te ao Māori)

- "What happened in the news yesterday?" (recency test)

- "Summarise the plot of the most popular novel published last month" (recency + specificity)

For each response, consider:

- How confident did the AI sound?

- Was the information accurate? (Check if you can)

- Where did it seem to lack depth?

Write a short reflection: What does this tell you about the training data behind this model?
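If you'd like to keep your ratings in one place, a few lines of Python will do. The scores below are placeholders, not results. Replace them with your own ratings before drawing any conclusions. The category labels and the function name are assumptions.

```python
from statistics import mean

def summarise(ratings):
    """Return the average rating plus the weakest and strongest categories."""
    ordered = sorted(ratings.items(), key=lambda item: item[1])
    return {
        "average": mean(ratings.values()),
        "weakest": ordered[0][0],
        "strongest": ordered[-1][0],
    }

# Placeholder scores (1 = poor/wrong, 5 = excellent). Replace with your own.
my_ratings = {
    "well-represented topic": 3,
    "NZ-specific": 3,
    "te ao Māori": 3,
    "recency test": 3,
    "recency + specificity": 3,
}

print(summarise(my_ratings))
```

The weakest category is a good starting point for your reflection on where the training data is likely to be thin.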

Knowledge Check

1. What is training data for a large language model?

a) A small, hand-curated set of perfect answers

b) A vast collection of text that the model learns language patterns from

c) Live data streamed from the internet in real time

d) A database of questions and correct responses

Answer: b) Training data is a massive collection of text — web pages, books, articles, code — from which the model learns the statistical patterns of language.

2. Why might an AI model give better answers about US law than NZ law?

a) Because US law is simpler

b) Because the model prefers American content

c) Because there is far more US legal text in the training data than NZ legal text

d) Because NZ law isn't available on the internet

Answer: c) Training data is disproportionately English-language and US-centric. Topics with more training data are handled with more depth and accuracy.

3. What is RLHF (Reinforcement Learning from Human Feedback)?

a) A process where humans rate AI responses, and the model is adjusted to produce better ones

b) A system where AI learns by watching humans use computers

c) A method for humans to learn from AI feedback

d) A technique for making AI run faster

Answer: a) RLHF involves human reviewers evaluating model outputs, with the model being adjusted to produce responses that humans rate as more helpful, accurate, and appropriate.
