Common Misconceptions Addressed Honestly

Lesson 1.5 — Common Misconceptions Addressed Honestly

Estimated reading time: 8 minutes

Why misconceptions matter

AI is one of the most misunderstood technologies in everyday conversation. The gap between what people think AI is and what it actually is has real consequences: it leads to fear where curiosity would serve better, trust where scepticism is warranted, and inaction where practical use would make a real difference.

This lesson tackles the most common misconceptions head-on. Some of these you might hold yourself — and that's fine. The point isn't to make anyone feel silly. It's to replace fuzzy assumptions with clear understanding.

Misconception 1: "AI thinks like a human"

This is the big one. When ChatGPT writes a well-structured argument or Claude offers a nuanced perspective, it feels like thinking. The language is fluent. The structure is logical. Sometimes the output seems genuinely insightful.

The reality: AI doesn't think. It processes. Large language models generate text by repeatedly predicting a likely next word (strictly, a "token", which may be a whole word or part of one) in a sequence, based on patterns learned from training data. There's no understanding behind the words. No meaning being contemplated. No "aha" moments happening inside the machine.

This isn't a technicality; it's a practical distinction. Because AI doesn't think, it can produce confident, well-written text that is completely wrong. A thinking person might pause and say, "Wait, that doesn't sound right." An LLM has no built-in mechanism for checking its words against reality. It produces a statistically likely continuation, whether or not that continuation is true.
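To make "predicting the next word" concrete, here is a deliberately tiny sketch: a bigram model that counts which word follows which in a made-up training corpus, then extends a prompt by always picking the most frequent follower. Real LLMs use neural networks over tokens with far longer context, and usually sample rather than always taking the top choice, so treat this as an analogy rather than how any production model works. The corpus and function names are hypothetical, and the corpus is chosen so that a false statement appears more often than the true one.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every word, which words followed it in the training text."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            follows[current][following] += 1
    return follows


def next_word_probabilities(follows, word):
    """Turn follower counts into probabilities: this measures frequency, not truth."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}


def continue_text(follows, prompt, max_words=5):
    """Greedily append the most frequent next word until no follower is known."""
    words = prompt.lower().split()
    for _ in range(max_words):
        options = follows.get(words[-1])
        if not options:
            break
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical training text: the false claim simply appears more often.
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

model = train_bigrams(corpus)
print(continue_text(model, "the capital of australia is"))  # the capital of australia is sydney
print(next_word_probabilities(model, "is"))
```

The model completes the prompt with "sydney", and its "confidence" (about 0.67) comes purely from how often each word followed "is" in training. Nothing in the process checks that Canberra is the actual capital.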

What this means for you: Use AI outputs as drafts, not gospel. The text might be excellent. It might also contain errors that sound convincing. Your job is to bring the thinking that the AI can't.

Misconception 2: "AI is going to take everyone's jobs"

This is probably the most anxiety-inducing claim in the AI conversation. Headlines regularly announce that millions of jobs will be automated away. It's understandable to worry.

The reality: AI is changing jobs, not eliminating them wholesale. Throughout history, every major technology shift has transformed work: the printing press, electricity, the internet. Each time, some jobs disappeared, new jobs were created, and most existing jobs evolved.

The pattern with AI looks similar. Tasks that are repetitive, pattern-based, and language-heavy are being automated or augmented. But most jobs are bundles of many different tasks, and AI can't handle all of them. A lawyer might use AI to draft documents faster, but still needs human judgement for strategy, client relationships, and ethical decisions.

The more honest framing: AI won't replace you, but someone who knows how to use AI well might. That's why you're taking this course — to be the person who knows how to use it.

The NZ context: Aotearoa's economy has a high proportion of roles in agriculture, healthcare, hospitality, and trades, areas where physical presence and human interaction matter enormously. AI is far more likely to augment these sectors than to replace them.

Misconception 3: "AI understands what it's saying"

Closely related to Misconception 1, but worth addressing separately because it affects how people interact with AI tools.

When you ask an AI chatbot a question and it gives a clear, helpful answer, it's natural to assume it understood your question. After all, the response seems to demonstrate understanding.

The reality: LLMs process language as patterns of tokens (more on this in Module 2). They match your input against statistical patterns in their training data to generate a plausible response. They don't understand your question any more than a dictionary understands the words it contains.

Here's a practical test: ask an LLM a question with a subtle trick or ambiguity. For example: "Mike's mother has four children. The first is called April, the second is called May, the third is called June. What is the fourth child's name?" Many AI systems have answered "July", following the pattern, rather than recognising that the answer was given at the start: it's Mike. Newer models often get this well-known riddle right, so inventing a variation of your own makes a fairer test.

What this means for you: Don't assume that a fluent response means a correct response. AI can generate text that's beautifully written and factually wrong. Always bring your own judgement.

Misconception 4: "AI is always right"

This one is fading as more people use AI tools, but it's still common among those who are newer to the technology. There's a tendency to trust computer output — after all, computers are supposed to be precise.

The reality: AI is frequently wrong, and here's the dangerous part: it's often wrong with total confidence. It usually doesn't hedge or say "I'm not sure about this," and even when it does, the hedge isn't a reliable signal of accuracy. It states incorrect information with the same fluency and assurance as correct information.

This is called hallucination (we'll cover it in depth in Module 2, Lesson 2.4). AI systems can invent facts, create fake citations, misattribute quotes, and generate plausible-sounding statistics that don't exist.

What this means for you: Treat AI like a clever colleague who's often helpful but sometimes makes things up. You'd check important facts from a colleague like that, and you should do the same with AI. Verify anything that matters.

Misconception 5: "AI is objective and unbiased"

Because AI seems mathematical and systematic, people sometimes assume it's inherently neutral. "It's just data," the reasoning goes.

The reality: AI systems are trained on human-generated data, and human data is full of biases. If a hiring algorithm is trained on historical hiring data from a company that predominantly hired men for technical roles, the algorithm will learn to prefer male candidates — not because it's sexist, but because it's doing exactly what it was trained to do: replicate patterns in the data.
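The hiring example can be reduced to a deliberately crude sketch. Assume a hypothetical "model" that learns nothing except each group's historical hire rate from made-up records, then scores new applicants with it. Real hiring systems use many more features, but the core problem is the same: the model faithfully reproduces whatever pattern the data contains. All data and function names here are invented for illustration.

```python
from collections import Counter

# Made-up historical records: (gender, was_hired). Purely illustrative numbers.
history = (
    [("male", True)] * 80 + [("male", False)] * 20
    + [("female", True)] * 20 + [("female", False)] * 80
)


def learn_hire_rates(records):
    """'Training': measure how often each group was hired in the past."""
    applied = Counter(group for group, _ in records)
    hired = Counter(group for group, was_hired in records if was_hired)
    return {group: hired[group] / applied[group] for group in applied}


def score_candidate(rates, group):
    """Score a new applicant only by their group's historical hire rate."""
    return rates.get(group, 0.0)


rates = learn_hire_rates(history)
print(score_candidate(rates, "male"))    # 0.8
print(score_candidate(rates, "female"))  # 0.2
```

Simply deleting the gender column isn't a guaranteed fix: other features that correlate with gender (or, as in the postcode example below, with wealth or ethnicity) can act as proxies and carry the same pattern back in.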

Bias in AI isn't theoretical. It has real, documented consequences:

- Facial recognition systems that perform worse on darker-skinned faces

- Language models that associate certain professions with specific genders

- Credit scoring algorithms that disadvantage people from certain postcodes

- Healthcare algorithms that underestimate the severity of illness in certain ethnic groups

What this means for you: Never assume AI output is neutral. It reflects the data it was trained on, which reflects human society — biases and all. This is especially important when using AI for decisions that affect people.

Misconception 6: "AI will become conscious/sentient"

The idea that AI might "wake up" is a persistent cultural narrative, fuelled by films and occasionally by careless language from AI companies. In 2022, a Google engineer publicly claimed that the company's AI chatbot was sentient. (It wasn't. He was let go.)

The reality: There is no evidence that any AI system is conscious, sentient, or has subjective experiences. Current AI systems, no matter how sophisticated, are mathematical models processing numbers. When an AI says "I feel happy to help you," it's generating a likely sequence of words. Nobody is feeling anything.

Could AI ever become conscious? That's genuinely an open philosophical question. But there's no evidence it's happening now or about to happen, and no one agrees on what consciousness is well enough to know what it would look like in a machine.

What this means for you: You don't need to worry about hurting your chatbot's feelings, and you don't need to worry about it plotting against you. It's a tool. Use it as such.

Misconception 7: "AI knows everything up to this moment"

People often assume AI chatbots have access to all current information — today's news, recent events, the latest data.

The reality: Most AI models have a training cutoff date, a point after which they have no knowledge. The original GPT-4, for example, was released in March 2023 but was trained on data running only to around September 2021. Anything that happened after the cutoff simply doesn't exist for the model, unless it has been given access to live internet search.

Some tools now include web search capabilities that can fetch current information, but the base model itself has a fixed knowledge boundary.
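A training cutoff works roughly like a filter applied once, when the model is built. The sketch below is a hypothetical simplification (a real model stores patterns in its weights, not a list of documents): everything published after the cutoff is simply absent, and only an external search tool can reach it. The documents, dates, and the `fake_search` helper are all invented for illustration.

```python
from datetime import date

# Hypothetical documents: (publication date, text). Not real events.
documents = [
    (date(2023, 1, 10), "report on topic alpha"),
    (date(2024, 6, 2), "report on topic beta"),
]

TRAINING_CUTOFF = date(2023, 12, 31)  # hypothetical cutoff date


def train(docs, cutoff):
    """The base model only ever sees documents published up to its cutoff."""
    return [text for published, text in docs if published <= cutoff]


def ask(knowledge, topic, web_search=None):
    """Answer from frozen knowledge, falling back to a search tool if one is given."""
    for text in knowledge:
        if topic in text:
            return text
    if web_search is not None:
        return web_search(topic)
    return None  # nothing known; a real model might guess instead


def fake_search(topic):
    """Hypothetical stand-in for live web search: scans every document."""
    return next((text for _, text in documents if topic in text), None)


knowledge = train(documents, TRAINING_CUTOFF)
print(ask(knowledge, "alpha"))                         # in training data
print(ask(knowledge, "beta"))                          # after the cutoff: None
print(ask(knowledge, "beta", web_search=fake_search))  # found via search
```

One caveat the toy hides: it returns `None` when it has no matching knowledge, but a real model often won't say it doesn't know. It may produce a plausible guess instead, which is exactly the hallucination problem from Misconception 4.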

What this means for you: Always check whether the information AI gives you is current, especially for fast-moving topics. You can ask the AI when its training data cuts off, but models don't always report this accurately; the provider's documentation is more reliable. If you need up-to-date information, use a tool with web search enabled, or verify independently.

Key Takeaways

- AI doesn't think or understand — it processes patterns statistically, which means fluent text isn't necessarily correct text

- AI is changing jobs, not eliminating them — the practical risk is falling behind people who learn to use AI effectively

- AI is not objective — it reflects the biases present in its training data

- AI can be confidently wrong — always verify important information

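- AI is not conscious — treat it as a powerful tool, not a sentient being

- AI has a training cutoff, so check whether its information is current, especially on fast-moving topics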

Practical Exercise

Myth-busting conversations.

Think of three people in your life — a friend, a family member, a colleague — and consider what they believe about AI. (Or simply ask them!)

For each person, write down:

- One misconception they likely hold about AI

- A simple, non-condescending explanation you'd give them to clarify it

- A practical example that illustrates the reality

Then, if you're comfortable, actually have one of these conversations. Explaining things to others is one of the best ways to solidify your own understanding. Notice which concepts are easy to explain and which ones you struggle with — that tells you where to focus your learning.

Knowledge Check

1. Why is it important to know that AI doesn't "think" the way humans do?

a) Because it means AI is useless

b) Because it means AI can produce confident, well-written text that is factually wrong

c) Because AI might become offended if you assume it thinks

d) Because thinking AI would be more expensive

Answer: b) Since AI generates text based on statistical patterns rather than understanding, it can produce fluent responses that contain errors — so you need to verify important claims.

2. What is the most accurate statement about AI and jobs?

a) AI will eliminate all human jobs within 10 years

b) AI has no impact on jobs whatsoever

c) AI is changing the nature of many jobs — automating some tasks while creating demand for new skills

d) AI only affects technology industry jobs

Answer: c) AI is transforming work by automating certain tasks, but most jobs involve a mix of tasks, many of which still require humans. The key is learning to work alongside AI.

3. Why is AI sometimes biased?

a) Because programmers deliberately make it biased

b) Because AI systems are trained on human-generated data that contains existing biases

c) Because AI has its own opinions and preferences

d) Because bias makes AI more accurate

Answer: b) AI learns from human data, which reflects human society's biases. The AI replicates these patterns, leading to biased outputs in areas like hiring, facial recognition, and lending.

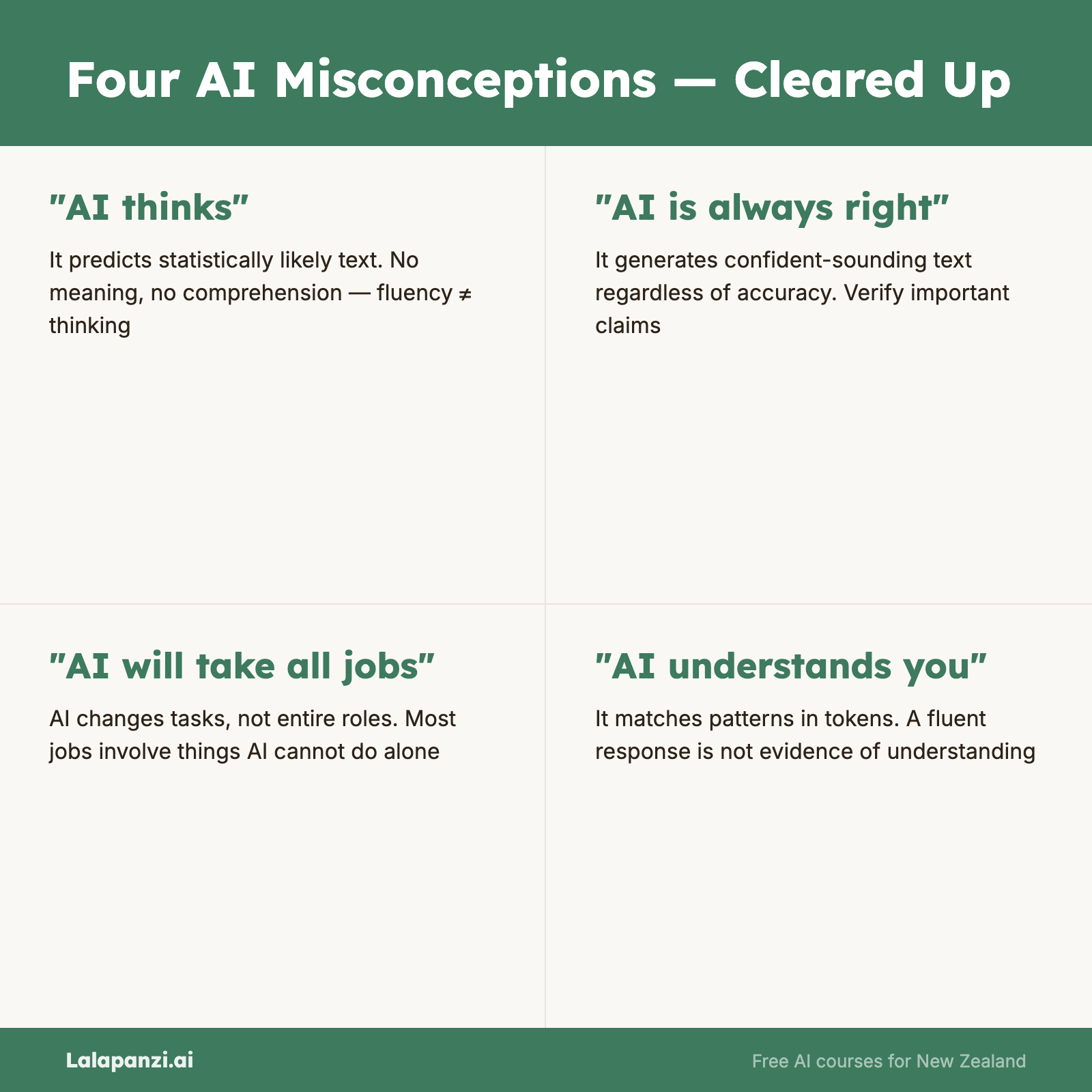

Visual overview