Key Terms Explained Simply (ML, Deep Learning, LLMs)

Lesson 1.4 — Key Terms Explained Simply (ML, Deep Learning, LLMs)

Estimated reading time: 8 minutes

The jargon problem

AI has a terminology problem. Important concepts hide behind acronyms and technical language that makes them sound far more complicated than they actually are. This lesson translates the key terms into plain English so that when you encounter them in the wild — in news articles, product descriptions, or workplace conversations — you know what people are actually talking about.

We'll start from the ground up, with each term building on the one before it.

Artificial Intelligence (AI)

We covered this in Lesson 1.1, but as a quick anchor: AI is software that performs tasks which normally require human thinking. It's the broadest term — the big umbrella that everything else fits under.

Think of AI as the whole field. Everything below is a specific approach within that field.

Machine Learning (ML)

Machine learning is a way of creating AI by letting systems learn from data instead of programming them with explicit rules.

Here's the difference in practice:

Traditional programming: You tell the computer the rules. "If the email contains the words 'free money' and comes from an unknown sender, mark it as spam."

Machine learning: You show the computer a large number of labelled examples and let it figure out the rules. "Here are 500,000 emails. These ones are spam. These ones aren't. Work out what makes them different."

The machine learning approach is more powerful because it can discover patterns that humans might never think to programme. A spam filter built with machine learning might pick up on subtle patterns — the formatting of the email, the sending time, combinations of words that individually seem innocent but together signal spam.
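To make the contrast concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the function names, the six made-up emails, and the very crude word-counting "learner". A real spam filter would use far more data and a far better method.

```python
from collections import Counter

# Traditional programming: a human writes the rule by hand.
def rule_based_is_spam(text, known_sender):
    return "free money" in text.lower() and not known_sender

# Machine learning (toy version): count which words show up in spam and
# in normal email, then score new emails using those counts.
def train(examples):
    spam_words, ham_words = Counter(), Counter()
    for text, is_spam in examples:
        target = spam_words if is_spam else ham_words
        target.update(text.lower().split())
    return spam_words, ham_words

def learned_is_spam(text, spam_words, ham_words):
    words = text.lower().split()
    spam_score = sum(spam_words[w] for w in words)
    ham_score = sum(ham_words[w] for w in words)
    return spam_score > ham_score

# Hypothetical training data, invented for this example.
examples = [
    ("claim your free money now", True),
    ("you won a prize claim now", True),
    ("urgent prize waiting for you", True),
    ("meeting moved to tuesday", False),
    ("lunch on tuesday with the team", False),
    ("notes from the team meeting", False),
]
spam_words, ham_words = train(examples)

new_email = "urgent claim your prize"
print(rule_based_is_spam(new_email, known_sender=False))   # False: no "free money"
print(learned_is_spam(new_email, spam_words, ham_words))   # True: words seen in spam
```

The hand-written rule misses the new email because it never mentions "free money". The learned version catches it because it picked up other words from the spam examples, which nobody had to list by hand.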

A real-world analogy: Teaching a child to recognise dogs. You don't sit them down with a rulebook ("four legs, fur, wet nose, tail"). You point at dogs: "That's a dog. That's a dog. That's not a dog, that's a cat." Eventually, they just know. Machine learning works similarly — except with millions of examples instead of dozens.

Most AI you encounter today is built using machine learning. In practice, when someone says "AI," they usually mean a system built with machine learning.

Neural Networks

A neural network is a machine learning system loosely inspired by how biological brains are structured.

It's made up of layers of connected nodes (sometimes called "neurons," though they're nothing like actual brain cells). Data enters at one end, passes through the layers, gets transformed at each step, and produces an output at the other end.

Each connection between nodes has a "weight" — a number that determines how much influence one node has on the next. During training, these weights are adjusted millions of times until the network produces accurate outputs.
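If you're curious what "adjusting weights" looks like, here is a small sketch with a single artificial neuron. The task (learning logical OR), the learning rate, and the simple update rule are all assumptions chosen to keep the example short. Real networks have many neurons and use a more sophisticated training method.

```python
# One artificial "neuron": a weighted sum of the inputs, then a threshold.
def predict(weights, bias, inputs):
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

# Toy task, assumed for illustration: output 1 if either input is 1.
data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = [0.0, 0.0], 0.0
learning_rate = 0.1  # how big each adjustment is

for epoch in range(20):
    for inputs, target in data:
        error = target - predict(weights, bias, inputs)
        # Nudge each weight in the direction that reduces the error.
        weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
        bias += learning_rate * error

print([predict(weights, bias, inputs) for inputs, _ in data])  # [0, 1, 1, 1]
```

The weights start at zero, so the neuron knows nothing. Each wrong answer nudges them slightly, and after a few passes over the four examples the outputs match the targets.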

You don't need to understand the mechanics deeply. The key point is: a neural network is a specific structure used in machine learning that's particularly good at finding complex patterns in data — patterns that simpler approaches would miss.

Deep Learning

Deep learning is machine learning using neural networks with many layers — that's the "deep" part.

A simple neural network might have three layers. A deep learning network might have dozens, hundreds, or occasionally even more than a thousand. More layers let the system learn more complex and abstract patterns.
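As a rough sketch of what "more layers" means in code, the example below builds a shallow and a deep network and passes the same input through each. The layer sizes, the random untrained weights, and the helper names are assumptions for illustration, so the outputs themselves are meaningless. Note that the code counts sets of connections, so a network with three layers of nodes has two sets of weights.

```python
import random

def relu(values):
    # A common non-linearity: negative values become zero.
    return [max(0.0, v) for v in values]

def layer(inputs, weights, biases):
    # Each output node sums its weighted inputs and adds a bias.
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def make_network(sizes, seed=0):
    # sizes like [4, 8, 2]: 4 input nodes, 8 hidden nodes, 2 output nodes.
    rng = random.Random(seed)
    network = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        network.append((weights, biases))
    return network

def forward(network, inputs):
    values = inputs
    for i, (weights, biases) in enumerate(network):
        values = layer(values, weights, biases)
        if i < len(network) - 1:
            values = relu(values)  # applied between layers, not after the last
    return values

shallow = make_network([4, 8, 2])             # three layers of nodes
deep = make_network([4] + [8] * 10 + [2])     # twelve layers of nodes
x = [0.5, -1.0, 0.25, 2.0]

print(len(shallow), len(forward(shallow, x)))
print(len(deep), len(forward(deep, x)))
```

The data takes the same journey in both cases. The deep network simply transforms it more times on the way through.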

Deep learning is what enabled the breakthroughs of the 2010s:

- Image recognition: deep learning can look at a photo and tell you what's in it with remarkable accuracy

- Speech recognition: deep learning powers the speech recognition in Siri, Alexa, and Google Assistant

- Language translation: the dramatic improvement in Google Translate around 2016 was driven by deep learning

- Game playing: AlphaGo combined deep learning with game-tree search to beat top human players at the ancient board game Go

The catch: Deep learning at this scale needs enormous amounts of data and computing power. You can't train a state-of-the-art system on a laptop with a handful of examples. This is why big tech companies, with their vast data and massive computing infrastructure, dominate the field.

Large Language Models (LLMs)

A large language model is a deep learning system trained on vast amounts of text to understand and generate human language.

This is the technology behind ChatGPT, Claude, Gemini, and similar tools. Let's unpack the name:

- Large: these models have billions of parameters (internal settings that were adjusted during training). GPT-4 is widely reported to have over a trillion, although OpenAI has not published an official figure.

- Language: they work with text. Their training data is text. Their input is text. Their output is text. (Some newer models handle images and audio too, but language is the foundation.)

- Model: in AI, a "model" is the finished product of training. It's the system that takes an input and produces an output.
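To get a feel for how parameter counts grow, here is a small sketch that counts the weights and biases in a simple, fully connected network. The function name and layer sizes are made up for illustration, and real LLMs use a more elaborate layout, so treat this as intuition rather than a way to size an actual model.

```python
def count_parameters(layer_sizes):
    # A fully connected layer from n_in nodes to n_out nodes has
    # n_in * n_out weights plus n_out biases.
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

print(count_parameters([4, 8, 2]))            # 58
print(count_parameters([1000, 1000, 1000]))   # 2002000
```

Going from a handful of nodes per layer to a thousand takes the count from 58 to about two million. Billions of parameters come from making the layers wider and stacking many more of them.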

LLMs work by predicting what comes next in a sequence of text. When you type a question into ChatGPT, it's essentially asking itself: "Given everything I've learned from training data, what sequence of words is most likely to follow this input?" It does this one "token" (a word or piece of a word) at a time, building up its response piece by piece.

This is remarkably effective. It produces text that reads as fluent, coherent, and often insightful. But it's also why LLMs sometimes produce confident nonsense — because the most statistically likely continuation of a sentence isn't always the factually correct one.
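The idea of predicting the next token can be sketched with a tiny toy model that only looks at the previous word. This is a deliberate simplification: the three-sentence "training data" is invented, the tokeniser just splits on spaces, and real LLMs use deep neural networks that consider far more context.

```python
from collections import Counter, defaultdict

# Tiny made-up training text. Real models train on vastly more.
corpus = (
    "the cat sat on the mat . "
    "the cat ate the fish . "
    "the dog sat on the rug ."
)
tokens = corpus.split()  # crude tokeniser: split on spaces

# "Training": count which token follows which.
following = defaultdict(Counter)
for current, nxt in zip(tokens, tokens[1:]):
    following[current][nxt] += 1

def generate(start, max_tokens=4):
    output = [start]
    for _ in range(max_tokens):
        candidates = following[output[-1]]
        if not candidates:
            break
        # Pick the most frequent continuation, one token at a time.
        output.append(candidates.most_common(1)[0][0])
        if output[-1] == ".":
            break
    return " ".join(output)

print(generate("dog"))  # dog sat on the cat
```

The output reads fluently, but the training text never said the dog sat on the cat. The model simply chose the most common word after "the", which is a small-scale version of how a likely continuation can differ from a true one.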

Other terms you'll encounter

Algorithm: A set of steps for solving a problem. In AI, people often use "algorithm" and "model" interchangeably, though technically an algorithm is the process and a model is the result.

Training: The process of showing a model data so it can learn patterns. Training a large language model can take weeks or months and cost millions of dollars in computing resources.

Parameters: The internal settings of a model that get adjusted during training. A higher parameter count generally means a more capable (and more expensive) model.

Inference: When you actually use a trained model (as opposed to training it). Every time you send a message to ChatGPT, it's performing inference.

Natural Language Processing (NLP): The branch of AI focused on human language. LLMs are the current state of the art in NLP.

Generative AI: AI that creates new content (text, images, audio, video) rather than just analysing existing content. ChatGPT is generative AI. So are DALL-E (images) and Suno (music).

Foundation model: A large model trained on broad data that can be adapted for many different tasks. GPT-4 and Claude are foundation models.
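Three of these terms (training, parameters, and inference) can be seen together in one short sketch. The model here has just two parameters, and the data, step count, and learning rate are assumptions chosen so the example runs quickly. The overall shape is what matters: train once, then use the result many times.

```python
# A model with just two parameters: prediction = weight * x + bias.
def infer(params, x):
    weight, bias = params
    return weight * x + bias

def train(data, steps=2000, learning_rate=0.01):
    weight, bias = 0.0, 0.0  # the parameters start out knowing nothing
    for _ in range(steps):
        for x, y in data:
            error = infer((weight, bias), x) - y
            # Adjust each parameter slightly to shrink the error.
            weight -= learning_rate * error * x
            bias -= learning_rate * error
    return weight, bias

# Made-up data that follows y = 2x + 1.
data = [(0, 1), (1, 3), (2, 5), (3, 7), (4, 9)]

params = train(data)       # training: the slow part, done once
print(infer(params, 10))   # inference: the fast part, close to 21
```

Training loops over the data thousands of times to settle on two numbers. Inference is a single multiplication and addition using those numbers, which is why using a trained model is so much cheaper than creating one.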

How these terms relate to each other

Think of it as a set of nesting boxes:

- AI (the biggest box) — software that performs tasks requiring human-like thinking

- Machine Learning (inside AI) — AI that learns from data

- Deep Learning (inside ML) — machine learning with many-layered neural networks

- Large Language Models (inside deep learning) — deep learning systems specifically trained on language

Not all AI uses machine learning. Not all machine learning is deep learning. Not all deep learning produces language models. But the language models you're most likely to use — ChatGPT, Claude, Gemini — sit at the centre of all those nested boxes.
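For readers who like to see structure written down, the nesting can be sketched as a simple lookup table. The names `PARENT`, `boxes_containing`, and `is_inside` are made up for this illustration.

```python
# Each term maps to the broader term it sits inside (None = outermost box).
PARENT = {
    "AI": None,
    "Machine Learning": "AI",
    "Deep Learning": "Machine Learning",
    "Large Language Models": "Deep Learning",
}

def boxes_containing(term):
    # Walk outwards from the term to the biggest box.
    chain = []
    while term is not None:
        chain.append(term)
        term = PARENT[term]
    return chain

def is_inside(inner, outer):
    return outer in boxes_containing(inner)

print(" -> ".join(reversed(boxes_containing("Large Language Models"))))
print(is_inside("Deep Learning", "AI"))    # True
print(is_inside("AI", "Deep Learning"))    # False
```

The last two lines mirror the point above: deep learning is always AI, but AI is not always deep learning.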

Understanding this hierarchy means you can place any new term you encounter into context. When someone says "this uses deep learning," you now know roughly where it sits in the landscape.

Key Takeaways

- Machine learning is AI that learns from data rather than following hand-coded rules

- Deep learning is machine learning using neural networks with many layers — enabling complex pattern recognition

- Large language models (LLMs) are deep learning systems trained on text, powering tools like ChatGPT and Claude

- These terms nest inside each other: AI → Machine Learning → Deep Learning → LLMs

- You don't need to understand the maths — knowing what each term means and how they relate is enough

Practical Exercise

Build a jargon-busting glossary.

Create a personal glossary of AI terms using your own words. For each of the following, write a one-sentence definition in language you'd use to explain it to a friend:

- Artificial Intelligence

- Machine Learning

- Neural Network

- Deep Learning

- Large Language Model

- Generative AI

- Training

- Parameters

- Inference

Test your definitions: read them back. Would a friend with no AI background understand each one? If not, simplify further. The ability to explain these concepts plainly is a genuinely valuable skill — in workplaces, it immediately sets you apart.

Knowledge Check

1. What is the main difference between traditional programming and machine learning?

a) Machine learning is faster

b) Traditional programming uses data; machine learning uses rules

c) Machine learning learns patterns from data; traditional programming follows explicit rules

d) There is no meaningful difference

Answer: c) Traditional programming requires explicit rules written by humans. Machine learning discovers patterns by learning from data.

2. What does "large" refer to in "Large Language Model"?

a) The physical size of the computer running it

b) The number of parameters (internal settings) the model has

c) The size of the company that made it

d) The length of responses it can generate

Answer: b) "Large" refers to the number of parameters — often billions or trillions — that were adjusted during training.

3. How do the key AI terms relate to each other?

a) They're all different words for the same thing

b) AI contains machine learning, which contains deep learning, which contains LLMs

c) LLMs contain deep learning, which contains machine learning, which contains AI

d) They're all separate, unrelated technologies

Answer: b) They nest: AI is the broadest term, machine learning is a subset, deep learning is a subset of that, and LLMs are a specific application of deep learning.

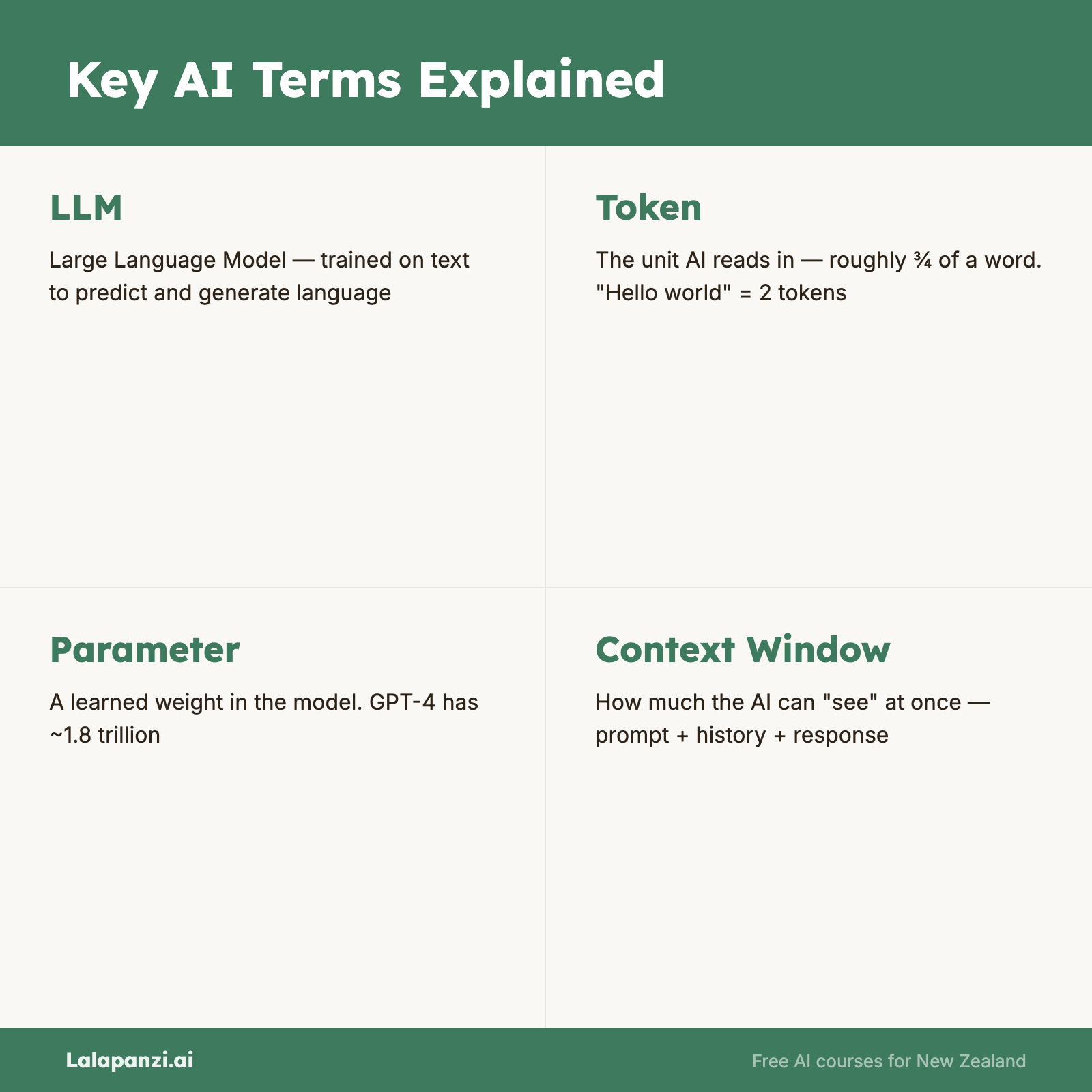

Visual overview